South African Journal of Industrial Engineering

Online version ISSN 2224-7890

Print version ISSN 1012-277X

S. Afr. J. Ind. Eng. vol.23 no.1 Pretoria Jan. 2012

CASE STUDIES

Development of an intelligent recognition and sorting system

I Optimisation Studies Unit, Department of Electronic Engineering, Durban University of Technology, South Africa

II Optimisation Studies Unit, Department of Electronic Engineering, Durban University of Technology, South Africa. poobieg@dut.ac.za

ABSTRACT

This paper presents the design of an intelligent recognition and sorting system. Intelligence is included in the system by using a multilayer feed-forward artificial neural network (ANN) for image recognition. Full duplex Bluetooth communication is used between the intelligent system and a robot-control computer. Image compression and principal component analysis (PCA) reduce the dimensionality of the data, and only the salient feature vectors of an image are used for image recognition. A control signal guides a robot arm to place an object into an allocated space. The system is relatively immune to noise, and can generalise when faced with missing data.

OPSOMMING

Hierdie artikel hou die ontwerp van 'n intelligente herkenning- en sorteringsisteem voor. Intelligensie word ingebou in die sisteem deur middel van 'n kunstmatige neurale netwerk vir beeldherkenning. Kommunikasie word bewerkstellig tussen die intelligente sisteem en 'n robot-beheerde rekenaar. Beeldkompressie en hoofkomponentanalise verminder die dimensionaliteit van die data en slegs kritiese kenvektore word aangewend vir beeldherkenning. 'n Beheersein rig die robotarm om die objek op 'n aangewese plek te plaas. Die sisteem is relatief immuun teen geraas en kan veralgemeen wanneer dit gekonfronteer word deur ontbrekende data.

1. INTRODUCTION

While human vision and template-based processing remain dominant in the manufacturing industry, there is also a strong tendency to automate inspection, assembling, sorting, and manufacturing tasks using intelligent systems [8, 9]. The design we propose employs an intelligent recognition system that uses an artificial neural network (ANN) to perform the recognition function. ANNs have been chosen for this project because they exhibit qualities that are characteristic of human intelligence [4, 11]. Following recognition, the objects are sorted into groups of identical items. The intelligent recognition and sorting system (IRASS) uses computational intelligence, data compression, feature extraction, and wireless data communication to perform its inspection, recognition, and sorting functions in a simulated plant environment.

This paper is arranged as follows: Section 2 discusses artificial intelligence (AI) systems, data pre-processing, and data communication used in this study; Section 3 describes the layout and operation of the IRASS system; Section 4 discusses the design of the ANN and how the ANN training vectors are determined; Section 5 describes the operation of the IRASS system; and Section 6 presents the summary and conclusion.

2. ARTIFICIAL INTELLIGENCE, IMAGE DATA PRE-PROCESSING, & DATA COMMUNICATION

2.1 Artificial Intelligence

AI is concerned with the design of intelligent systems, i.e., systems that exhibit characteristics normally associated with intelligence in human behaviour, such as understanding, learning, and problem-solving [1, 4, 11]. The main branches of AI include ANNs, genetic algorithms (GAs), swarm intelligence (SI), and fuzzy logic.

The IRASS system discussed in this research uses an ANN for image recognition. The ANN was chosen because of its ability to solve problems characterised by high dimensionality, noise, nonlinearities, and error-prone data [13]. In this study, image identification with ANNs is done by first extracting textural features using PCA, and then training the ANN to recognise certain key textural features of an image. Textural features are characterised by the spatial distribution of gray levels within a neighbourhood, and are detected using pixels and pixel values.

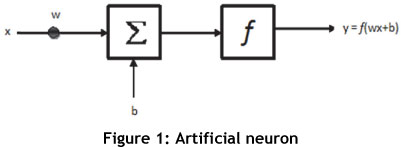

ANNs consist of massively interconnected simple parallel processing elements, called neurons, which are conceptually modelled on the biological nervous system [1]. The artificial neuron in Figure 1 is the elementary processing unit of an ANN. Each connection to the neuron is characterised by an input signal (x) and a weight (w). An activation function (f) shapes the output of the neuron (y). Neurons combine to form either feedforward ANNs (FF-ANN) or recurrent networks, and variants thereof. FF-ANNs are widely used in a variety of applications, and essentially correlate inputs with outputs. FF-ANNs consist of three or more layers: an input layer, one or more hidden layers, and an output layer. Signal propagation is unidirectional from input to output. Image recognition is done by extracting the key textural features of an image and training the network to recognise them. For this study, a multi-layer FF-ANN (MLFF-ANN) is designed and trained to execute intelligent recognition and placement operations in the IRASS system.
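The neuron described above can be sketched in a few lines of Python. This is only an illustration of the weighted-sum-plus-activation idea: the bias term, the logistic form of f, and the names `sigmoid` and `neuron_output` are assumptions made for the example and are not taken from Figure 1.

```python
import math

def sigmoid(a):
    """Logistic activation (assumed form of f): maps any real number into (0, 1)."""
    return 1.0 / (1.0 + math.exp(-a))

def neuron_output(x, w, bias=0.0, f=sigmoid):
    """Return y = f(sum_j w_j * x_j + bias) for one artificial neuron."""
    activation = sum(xj * wj for xj, wj in zip(x, w)) + bias
    return f(activation)

# Three inputs and three weights; the weighted sum is 0.2 - 0.3 + 0.2 = 0.1.
y = neuron_output(x=[0.5, -1.0, 2.0], w=[0.4, 0.3, 0.1])
```

A feedforward layer is then simply several such neurons sharing the same inputs, each with its own weight vector.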

2.1.1 ANN training using PSO

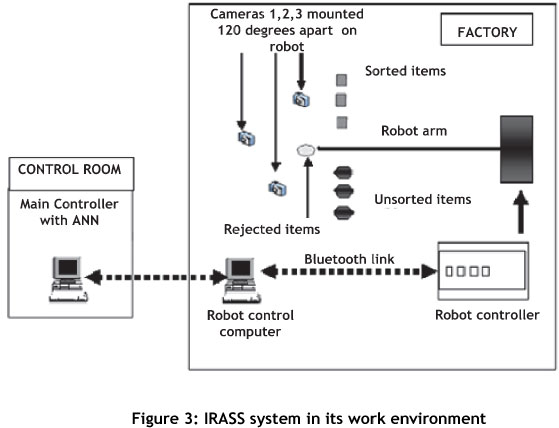

ANN training can be performed using the standard gradient descent-based method of back-propagation training, GA training, or particle swarm optimisation (PSO) training. For this study, the PSO training method produced the fastest computation time and the smallest error. PSO is an evolutionary computation technique based on a velocity update (1) and a position update (2) [2]. The algorithm is designed so that the position of each agent within the search space represents a potential solution to a specific problem [2, 14].
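Equations (1) and (2) are not reproduced in this text version. For orientation, the original PSO update rules of Kennedy and Eberhart [2], written with the symbols used in this section, take the form below. This is an assumed reconstruction: no inertia weight is shown because none is defined in the text, and the authors' exact formulation may differ.

```latex
\begin{align}
v_i^{k+1} &= v_i^{k}
            + c_1\, rand_1 \left( pbest_i - s_i^{k} \right)
            + c_2\, rand_2 \left( gbest_i - s_i^{k} \right) \tag{1} \\
s_i^{k+1} &= s_i^{k} + v_i^{k+1} \tag{2}
\end{align}
```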

With regard to (1) and (2): $v_i^k$ is the velocity of agent $i$ at iteration $k$; $c_1$ and $c_2$ are adjustable acceleration parameters that respectively represent a particle's cognitive acceleration (agent's self-confidence) and social acceleration (swarm-confidence) factors; $rand_1$ and $rand_2$ are random numbers between 0 and 1; $s_i^k$ denotes the current position of agent $i$ at iteration $k$; $pbest_i$ and $gbest_i$ respectively indicate the personal best of particle $i$ and the swarm's global best position. Within this framework each agent, besides having individual intelligence, also develops certain social behaviour characteristics and coordinates its movement towards a common destination. The common point on which the swarm converges represents an optimal solution. For this study, the PSO has been used to determine the ANN's optimal weights and biases during the training phase of the ANN design.
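A minimal Python sketch of a PSO loop of this kind is given below. It is not the authors' implementation: the function name `pso_minimise`, the swarm size, the iteration count, the values of `c1` and `c2`, and the velocity clamp `v_max` are all assumptions chosen for illustration. To train an ANN, `cost` would return the training error for a candidate vector of flattened weights and biases.

```python
import random

def pso_minimise(cost, dim, n_agents=20, n_iter=200, c1=2.0, c2=2.0,
                 v_max=1.0, seed=0):
    """Basic PSO without inertia weight; v_max clamping is an added assumption."""
    rng = random.Random(seed)
    # Each agent's position s is one candidate solution in the search space.
    s = [[rng.uniform(-1.0, 1.0) for _ in range(dim)] for _ in range(n_agents)]
    v = [[0.0] * dim for _ in range(n_agents)]
    pbest = [p[:] for p in s]
    pbest_cost = [cost(p) for p in s]
    g = min(range(n_agents), key=lambda i: pbest_cost[i])
    gbest, gbest_cost = pbest[g][:], pbest_cost[g]

    for _ in range(n_iter):
        for i in range(n_agents):
            for d in range(dim):
                # Velocity update: cognitive pull to pbest, social pull to gbest.
                v[i][d] += (c1 * rng.random() * (pbest[i][d] - s[i][d])
                            + c2 * rng.random() * (gbest[d] - s[i][d]))
                v[i][d] = max(-v_max, min(v_max, v[i][d]))
                # Position update.
                s[i][d] += v[i][d]
            c = cost(s[i])
            if c < pbest_cost[i]:
                pbest[i], pbest_cost[i] = s[i][:], c
                if c < gbest_cost:
                    gbest, gbest_cost = s[i][:], c
    return gbest, gbest_cost

# Toy cost function (sum of squares) standing in for the ANN training error.
best, best_cost = pso_minimise(lambda p: sum(x * x for x in p), dim=3)
```

The best cost found can never increase from one iteration to the next, because `gbest` is replaced only when an agent finds a strictly lower cost.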

2.2 Image data pre-processing

Image data pre-processing involves data compression with the two-dimensional (2D) Haar wavelet transform (HWT) and key feature extraction using principal component analysis (PCA). Image pre-processing is performed in order to:

i) Reduce bandwidth: Data compression and feature extraction reduce the size of the data transmitted over the communication channel, and free channel bandwidth for transmitting several signals simultaneously over a common channel.

ii) Minimise ANN size: Reducing the quantity of data applied to the ANN reduces the size of the network and shortens computation time.

iii) Reduce data dimensionality: To facilitate easy extraction of the feature vectors.

iv) Minimise computation time: To reduce the computational burden placed on the processor by ensuring a smaller data quantity and an optimally-sized ANN.

2.2.1 HWT data compression

The IRASS system uses data compression to reduce the dimensionality of the image data prior to its transmission via a Bluetooth data communication link. Data compression algorithms are designed to remove redundant data in order to reduce the bandwidth required on a data communication channel [12, 19]. Image data compression is achieved using the wavelet transform (WT) prior to transmission over the Bluetooth channel. Wavelet compression was chosen over other traditional compression methods because of its ability to provide a multi-resolution representation of an image and yield a higher compression ratio [10, 15]. In wavelet compression, an input signal is decomposed into a summation of a series of base functions called wavelets that are generated through dilating and shifting operations from a basic or mother wavelet function [17, 18]. Several types of wavelets exist, such as the Mexican hat wavelet, the Morlet wavelet, and the Haar wavelet. In this paper, the HWT has been selected for image compression because of its simplicity, its excellent performance in different applications [10], its low memory requirements [10, 16], and its fast computation speed.
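To make the compression step concrete, the sketch below applies one level of a 2D Haar transform with NumPy. It uses the simple averaging form of the transform and assumes an image with even height and width; the function name `haar2d_level` and the sub-band variable names are illustrative and not taken from the paper. Keeping only the approximation band reduces the data to a quarter of its original size.

```python
import numpy as np

def haar2d_level(image):
    """One level of the 2D Haar transform (averaging form) on an even-sized array."""
    img = np.asarray(image, dtype=float)
    # Along each row: pairwise averages (low-pass) and half-differences (high-pass).
    lo = (img[:, 0::2] + img[:, 1::2]) / 2.0
    hi = (img[:, 0::2] - img[:, 1::2]) / 2.0
    # Along each column: repeat the same step on both row outputs.
    approx = (lo[0::2, :] + lo[1::2, :]) / 2.0      # mean of each 2x2 block
    detail_col = (lo[0::2, :] - lo[1::2, :]) / 2.0  # change between vertical neighbours
    detail_row = (hi[0::2, :] + hi[1::2, :]) / 2.0  # change between horizontal neighbours
    detail_diag = (hi[0::2, :] - hi[1::2, :]) / 2.0 # diagonal change
    return approx, detail_col, detail_row, detail_diag

# A 4x4 test image; the approximation band is the 2x2 grid of block means.
approx, detail_col, detail_row, detail_diag = haar2d_level(np.arange(16).reshape(4, 4))
```

Applying the function again to `approx` gives the next, coarser level of the multi-resolution representation.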

2.2.2 Feature extraction using PCA

PCA overcomes the problem of processing large quantities of data by extracting only the key textural features from the compressed data image [7, 8, 9]. PCA is a classical statistical data reduction technique that reduces the dimensionality of a data set while retaining those characteristics of the set that contribute most to its variance [5]. Following 2D-HWT compression, the most significant elements of the compressed image data set are extracted using PCA, as follows:

(i) Determine the mean of the compressed data set, and subtract this mean from each data element. This produces a data set with a mean of zero.

(ii) Determine the covariance matrix, and then calculate the eigenvectors and eigenvalues of the covariance matrix.

The columns of the eigenvector matrix with the highest eigenvalues are the principal components of the data set, and form the feature vector set. Eigenvectors with small eigenvalues can be ignored without compromising the integrity of the PCA representation. The PCA matrix of feature vectors is transmitted via the Bluetooth link to the main computer for recognition by the ANN system.
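The two PCA steps above can be sketched with NumPy as follows. The layout of the data matrix (rows as observations, columns as variables), the function name `pca_features`, and the parameter `n_components` are assumptions made for the example; the paper does not state how its data matrix is arranged.

```python
import numpy as np

def pca_features(data, n_components):
    """Return the largest eigenvalues and their eigenvectors (as columns)."""
    x = np.asarray(data, dtype=float)
    centred = x - x.mean(axis=0)              # step (i): zero-mean data
    cov = np.cov(centred, rowvar=False)       # step (ii): covariance matrix
    eigvals, eigvecs = np.linalg.eigh(cov)    # eigh suits symmetric matrices
    order = np.argsort(eigvals)[::-1][:n_components]  # largest eigenvalues first
    return eigvals[order], eigvecs[:, order]

# Points lying on the line y = 2x: all variance is along the direction (1, 2).
vals, vecs = pca_features([[0, 0], [1, 2], [2, 4], [3, 6]], n_components=1)
```

In this toy case a single component captures all of the variance, so the second eigenvector could be dropped without losing information.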

2.3 Data transmission

Our IRASS system uses two computers situated about 10 m apart. The 'plant' computer is in the factory, and the 'control' computer housing our intelligent recognition system is in the control room. Communication between the plant computer and the control computer occurs via the Bluetooth communication link. Low-cost Bluetooth operates reliably in an industrial environment, and is selected for this study due to its low power consumption, its excellent short-range communication capability, and its ability to co-exist effectively with wireless local area networks and other devices that operate in an industrial environment [6].

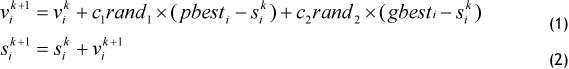

Following wavelet data compression and feature vector extraction, the image data is then transmitted from the local plant computer to the main computer containing the intelligent recognition system. The Bluetooth network (piconet) in Figure 2 was set up between the factory computer and the control computer. With regard to Figure 2, the following two Bluetooth links exist within the piconet:

Link 1 - from control computer to the robot control computer: The control room computer is configured as the 'master device' and houses the intelligent recognition system. The robot control computer behaves as a 'slave device' to the master.

Link 2 - from robot control computer to the robot arm controller: The robot control computer acts as the master for the robot, which in this application behaves as the slave. The robot control computer also performs wavelet compression of the image data and PCA, and executes the control signals received from the master control computer. Communication between the control room and the factory floor is full-duplex.

3. IRASS SYSTEM LAYOUT AND OPERATION

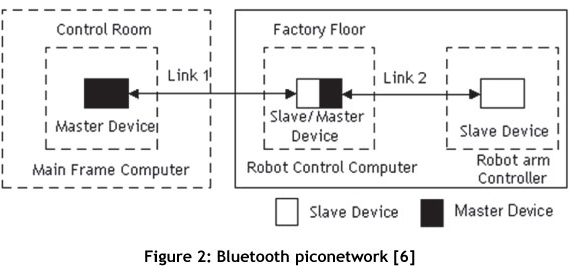

Figure 3 shows the layout of the IRASS system in the work environment. With regard to Figure 3, the factory floor control system contains the following: three 0.3 megapixel Logitech web cameras that can capture up to thirty frames per second, positioned 120° apart; a Lego Corporation Mindstorm robot controller and arm; and a robot control computer. The cameras simultaneously capture the image of the object surface from three different angles, enabling the creation of a comprehensive image matrix. Each camera communicates with the robot control computer through one of three universal serial bus (USB) ports, at a maximum data transfer rate of 480 megabits per second.

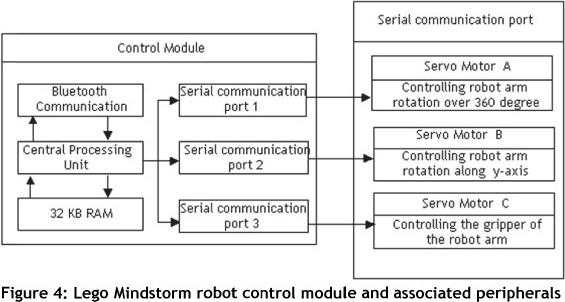

The robot control module in Figure 4 operates under direct control of the robot control computer, and controls the gripper as well as the vertical motion and angular rotation of the manipulator. The robot control computer performs the following tasks:

i) Receiving the image data from the three web cameras mounted on the robot arm.

ii) Image pre-processing (wavelet compression and PCA feature extraction).

iii) Transmitting the PCA image vectors to the remote main computer.

iv) Receiving the object recognition control signals from the remote main computer.

v) Communicating control signals to the robot control module for guiding the robot arm.

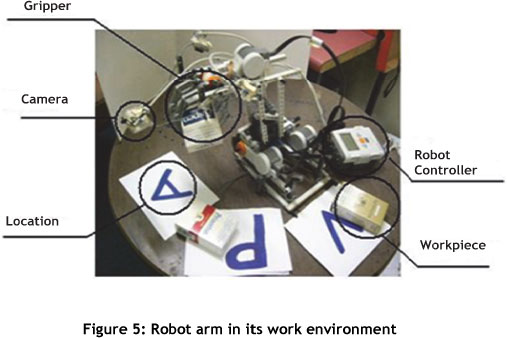

The Lego Mindstorm robot in Figure 5 has three degrees of freedom (DOF). The purpose of each DOF is:

to control the 360° rotation of the robot manipulator;

to control the vertical motion of the arm; and

to control the gripper.

The main computer is housed in a control room 10 m from the work environment of the robot manipulator. It is programmed to perform the following tasks: receive image feature vector data from the remote robot control computer; perform image recognition using the ANN recognition system; transmit the object recognition signal to the robot control computer.

The operation of the IRASS system involves the following steps:

During the sorting operation, images of the unsorted objects are captured and pre-processed by the robot control computer using HWT image compression and PCA. The selected feature vectors are transmitted via the Bluetooth link to the main control computer for recognition by the ANN system.

Following recognition, the data corresponding to the recognised image is transmitted via the Bluetooth link to the remote robot control computer. This computer guides the robot arm to shift the objects from 'unsorted item' positions 1, 2 and 3 to the 'sorted item' positions 1, 2 and 3 (see Figure 3). The robot arm returns to its default position after executing each 'pick and place' operation.

This sequence of operations continues until all objects have been sorted and stacked into their respective locations. Figure 5 shows the robot executing its pick-and-place operations. Examples of the items used in the experiments are shown in Figure 6. These empty boxes were chosen to avoid stressing the weak construction of the Lego Mindstorm robot. The same ideas and methodology discussed in this paper can be implemented on a larger scale in a real-world environment.

4. EXTRACTING TRAINING VECTORS AND ANN DESIGN

A comprehensive set of image vectors for each workpiece is determined as follows:

Three images of each item, at 120°, 240°, and 360° orientations, are captured with the item in its first pose. The three images of the object in its first pose are clustered together to form a group.

The pose of each object is adjusted five times, and three images are taken of the object in each of its respective poses. We thus have five groups of images, with each group having three images of the same object positioned in a certain pose.

The process is repeated for each of the three objects, resulting in a total of 15 groups of vectors.

The above-mentioned process produces a comprehensive set of image vectors of each item being sorted. These vectors (of each object occupying different orientations and poses) are intended to support accurate recognition even under changing environmental conditions. A HWT compressed image is shown in Figure 7.

PCA is applied to the compressed image to extract the salient feature vectors given in Table 1, which shows the five columns of eigenvectors for each object, with each column corresponding to a specific pose of the object. These eigenvectors are transmitted via the Bluetooth network to the remote main computer in order to train the ANN system.

4.1 ANN recognition system

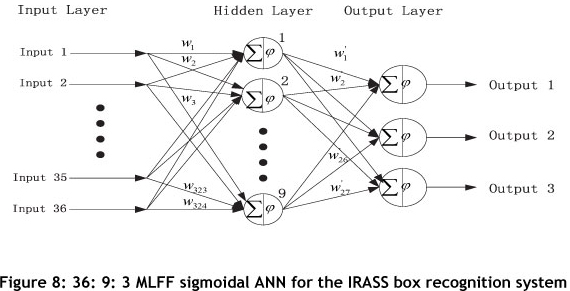

The ANN system used to perform the recognition function is given in Figure 8. The size of the input layer is determined from the number of rows in the eigenvector data matrix (in this case, 36). The decision to use a single hidden layer with 9 neurons was made following a series of experiments. The output layer consists of three neurons, and corresponds to the number of objects that must be identified for sorting. The recognition system in Figure 8 has the following characteristics:

Architecture:

The MLFF network architecture is chosen for this recognition system because of its dynamic universality and popularity over a wide range of applications [3]. The ANN has three layers and is arranged as follows: an input layer with 36 inputs; a hidden layer consisting of nine neurons; and an output layer with three neurons.

Topology:

Each neuron in the hidden layer is connected to each of the 36 inputs. There are therefore 36 x 9 = 324 interconnections, each with its own weight, between the input layer and the hidden layer. Each neuron in the hidden layer is connected to each of the three neurons in the output layer. Therefore 9 x 3 = 27 interconnections and weights exist between the hidden layer and the output layer.

Activation function:

A sigmoidal activation function was chosen for the neurons of our recognition system because of the universal suitability of sigmoidal neural networks [3].
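The 36:9:3 architecture and its weight counts can be checked with a short NumPy sketch. The random initial weights, the zero biases, and the names `init_network` and `forward` are placeholders for illustration only; in the study the weights and biases are found by PSO training.

```python
import numpy as np

def sigmoid(a):
    """Logistic activation applied element-wise."""
    return 1.0 / (1.0 + np.exp(-a))

def init_network(n_in=36, n_hidden=9, n_out=3, seed=0):
    """Placeholder weights; a trained network would load optimised values instead."""
    rng = np.random.default_rng(seed)
    return {
        "w1": rng.normal(scale=0.1, size=(n_hidden, n_in)),   # input -> hidden
        "b1": np.zeros(n_hidden),
        "w2": rng.normal(scale=0.1, size=(n_out, n_hidden)),  # hidden -> output
        "b2": np.zeros(n_out),
    }

def forward(net, x):
    """Unidirectional pass: input layer -> hidden layer -> output layer."""
    hidden = sigmoid(net["w1"] @ x + net["b1"])
    return sigmoid(net["w2"] @ hidden + net["b2"])

net = init_network()
n_weights = net["w1"].size + net["w2"].size   # 36*9 + 9*3
outputs = forward(net, np.ones(36))           # one value per object class
```

With three output neurons, the largest output can be read as the recognised object.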

5. OPERATION OF THE IRASS SYSTEM

The IRASS system is designed to recognise objects, sort items into predetermined locations, and handle items that it was not trained to recognise.

5.1 Object recognition

The ANN target vectors are given in Table 2. Rows 1, 2, and 3 correspond to the recognition of the Aspen, Princeton, and Voyager boxes respectively. The results of the training are shown in Table 3. With regard to Table 3:

Row 1: Columns 1-5 show the results of the Aspen box.

The values in the first row of columns 1-5 are equal to 1. This corresponds to the target vectors for the Aspen box in Table 2, and indicates that the ANN recognises the Aspen box. Similar reasoning also applies to Row 2: Columns 6-10 and Row 3: Columns 11-15 for the Princeton and Voyager boxes respectively.

5.1.1 Testing the ANN

The data set used to test the performance of the ANN is determined in the same way as the training data set. The results of the test are shown in Table 4, and indicate that the ANN is properly trained to recognise the three boxes.

5.1.2 Determining the robustness of the ANN recognition system

An important characteristic of ANNs is their ability to deliver a stable output even in the face of noise. We tested the robustness of our ANN recognition system by adding artificial 'salt and pepper' noise to the image. The results of the recognition are shown in Table 5, and indicate that the network is capable of recognising the boxes even when disturbances are present. This would not be true of systems that rely on an 'inflexible template'.
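For readers unfamiliar with this type of disturbance, the sketch below shows one common way of adding salt-and-pepper noise to an 8-bit grayscale image with NumPy. The noise density, the fixed random seed, and the function name `add_salt_and_pepper` are assumptions for the example; the noise level used in the experiments is not stated.

```python
import numpy as np

def add_salt_and_pepper(image, density=0.05, seed=0):
    """Set roughly `density` of the pixels to black (pepper) or white (salt)."""
    rng = np.random.default_rng(seed)
    noisy = np.array(image, dtype=np.uint8, copy=True)  # leave the input untouched
    r = rng.random(noisy.shape)
    noisy[r < density / 2] = 0          # pepper pixels
    noisy[r > 1 - density / 2] = 255    # salt pixels
    return noisy

# A uniform mid-gray test image with about 10% of its pixels corrupted.
img = np.full((50, 50), 128, dtype=np.uint8)
noisy = add_salt_and_pepper(img, density=0.10)
```

The noisy image would then pass through the same compression and PCA steps as a clean one before being presented to the ANN.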

5.2 Location recognition: Placing an object into the sorted position

The ANN system for recognising the location where the object is to be placed also resides in the main control computer. The procedure followed to determine the eigenvectors for the three object locations indicated by 'A', 'P', and 'V' in Figure 5 is similar to that which was followed for extracting the workpiece vectors, and resulted in a 30x1 matrix of eigenvectors. A 30:9:3 ANN was trained and tested to recognise the correct location to place each of the three objects. The size of the network is determined as follows: 30 inputs for the feature vectors and three outputs for three locations where the objects are to be placed; the hidden layer consisting of nine neurons was determined experimentally. Our code counts the number of rows in the matrix and then determines which ANN to use: 36 rows indicates box recognition, and 30 rows means location recognition. Following recognition, the main control computer then transmits a control signal via the Bluetooth link to the robot control computer. The robot controller will guide the robot arm to move each box to its correct location.
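The row-count rule described above amounts to a simple dispatch. A minimal sketch follows; the function name `select_network`, the returned labels, and the error raised for any other length are illustrative assumptions, since the authors' code is not shown.

```python
def select_network(feature_vector, box_rows=36, location_rows=30):
    """Choose which ANN should process a feature vector, based on its row count."""
    n_rows = len(feature_vector)
    if n_rows == box_rows:
        return "box"        # 36 rows: object (box) recognition network
    if n_rows == location_rows:
        return "location"   # 30 rows: placement-location recognition network
    raise ValueError(f"unexpected feature vector length: {n_rows}")

box_choice = select_network([0.0] * 36)
location_choice = select_network([0.0] * 30)
```

Rejecting unexpected lengths explicitly, rather than defaulting to one network, makes a corrupted transmission easier to detect.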

5.3 Determining whether an object is desirable or not

5.3.1 Desirable objects

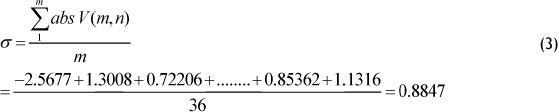

The IRASS system is designed to place any unidentified objects into an allocated area known as 'the dump'. We arbitrarily selected column 1 of the training vectors from Table 1 to determine whether an item is desirable or not. (Any other column of the eigenvector training matrix could have been selected for this.) The system differentiates between desirable and undesirable objects using (3).

With regard to (3): m and n denote the row and column numbers respectively, with m > 1 and n = 1; V represents the respective training vector matrix; and σ is the average value. Desirable objects fall within the range 0.8 < σ < 1. For the Aspen box, for example, the average value falls within this range, so the IRASS system places the box at the location corresponding to position 'A'.
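Equation (3) is not reproduced in this text version. One reading that is consistent with the description above is that σ is the arithmetic mean of the entries in the first column of V. The sketch below implements that reading only; it is an assumption, as are the names `column_average` and `is_desirable`, and the authors' actual expression may differ.

```python
def column_average(v, column=0):
    """Arithmetic mean of one column of a matrix given as a list of rows."""
    values = [row[column] for row in v]
    return sum(values) / len(values)

def is_desirable(v, low=0.8, high=1.0):
    """Apply the stated acceptance range 0.8 < sigma < 1 to the first column of v."""
    sigma = column_average(v, column=0)
    return low < sigma < high

# Hypothetical matrices: the first is accepted, the second goes to 'the dump'.
accepted = is_desirable([[0.90, 0.10], [0.85, 0.20]])
rejected = is_desirable([[0.20], [0.30]])
```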

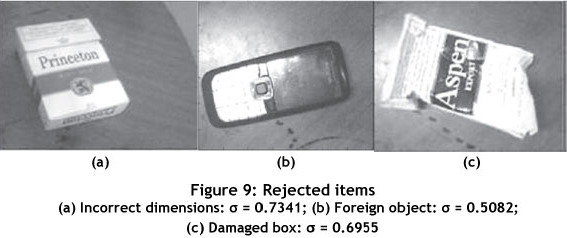

5.3.2 Rejected objects

Some undesirable objects that were used to determine the response of our IRASS system are shown in Figure 9. For these objects σ falls outside the desirable object range; the IRASS system rejects them and moves them to 'the dump'.

6. SUMMARY AND CONCLUSION

An intelligent system based on ANNs has been designed to perform recognition and sorting tasks. The IRASS control system consists of a robot control computer and a main control computer that communicate via a Bluetooth channel. The robot control computer performs image compression using the HWT, and PCA for extracting the salient feature vectors from the compressed data. A significant reduction in data dimensionality was achieved with the HWT and PCA. The salient feature vectors are transmitted to a control computer where the two ANN systems reside. The ANNs used in the IRASS system each serve a specific purpose. A 36:9:3 ANN is trained to recognise the items to be sorted, and a 30:9:3 network is designed to identify the correct location for the robot arm to place an object. The optimal size and configuration of the network was determined experimentally during the design stage of the study. Optimal training of the ANNs was achieved using the PSO algorithm. The robot control module positions an item in its correct location using the signal from the main control computer. Items not recognised by the IRASS system are moved to a 'dump'.

An attractive feature of the IRASS system is its ability to perform satisfactorily when faced with adverse operating conditions. This feature is attributed to the ANNs' ability to generalise when faced with missing data, and to remain robust in the face of disturbances. A further advantage of the IRASS system is the simplicity of its design, which makes it a competitive alternative to expert systems like fuzzy logic or statistical techniques such as support vector machines.

REFERENCES

[1] Haykin, S. 1999. Neural networks: A comprehensive foundation, Prentice Hall.

[2] Kennedy, J. & Eberhart, R.C. 1995. Particle swarm optimization, Proceedings of the IEEE International Conference on Neural Networks, 4, 1942-1948.

[3] Kilian, J. & Siegelmann, H.K. 1996. The dynamic universality of sigmoidal neural networks. Information and Computation, 128(1), 48-56.

[4] Konar, A. 1999. Artificial intelligence and soft computing: Behavioral and cognitive modeling of the human brain, CRC Press.

[5] Lee, J. A. & Verleysen, M. 2007. Nonlinear dimensionality reduction, Springer.

[6] Li, Z., Govender, P. & Kanny, M. 2008. ANN based machine vision system for industrial sorting. Proceedings of the 2nd Robotics and Mechatronics Symposium, Bloemfontein, South Africa.

[7] Nixon, M.S. & Aguado, A.S. 2008. Feature extraction and image processing, Academic Press.

[8] Omid, M., Mahmoudi, A. & Omid, M.H. 2009. An intelligent system for sorting pistachio nut varieties. Expert Systems with Applications, (36), 11528-11535.

[9] Omid, M., Mahmoudi, A. & Omid, M.H. 2010. Development of pistachio sorting system using principal component analysis (PCA) assisted artificial neural network (ANN) of impact acoustics. Expert Systems with Applications, (37), 7205-7212.

[10] Raviraj, P. & Sanavullah, M.Y. 2007. The modified 2D Haar wavelet transformation in image compression. Middle East Journal of Scientific Research, 2(2), 73-78.

[11] Rich, E. & Knight, K. 1996. Artificial intelligence, McGraw-Hill.

[12] Sayood, K. 2000. Introduction to data compression, Morgan Kaufmann.

[13] Seetha, M., Muralikrishna, I.V., Deekshatulu, B.L., Malleswari, B.L., Nagaratna, & Hegde, P. 2005-2008. Artificial neural networks and other methods of image classification. Journal of Theoretical and Applied Information Technology, 1039-1053.

[14] Shi, Y. & Eberhart, R.C. 1998. Parameter selection in particle swarm optimisation. Proceedings of the 7th Annual Conference on Evolutionary Programming, 591-601.

[15] Shum, H.Y., Kang S.H. & Chan S.C. 2003. Survey of image-based representations and compression techniques. IEEE Transactions on Circuits and Systems for Video Technology, 13(11), 1020-1037.

[16] Stankovic, R.S. & Bogdan, J.F. 2003. The Haar Wavelet: Its status and achievements. Computers and Electrical Engineering, 29, 25-44.

[17] Zhang, C., Zhou, Y.M. & Zhang, K.Z. 2008. Fast hybrid fractal image compression using an image feature and neural network. Chaos, Solitons & Fractals, 37(2), 623-631.

[18] Zhang, H., Cartwright, C.M, Ding, M.S. & Gillespie, W.A. 2000. Image feature extraction with various wavelet functions in a photorefractive joint transform correlator. Transactions on Optics Communications, 185, 277-284, Elsevier Science.

[19] Ziviani, N., Moura, E., Navarro, G. & Baeza-Yates, R. 2000. Compression: A key for next-generation text retrieval systems. IEEE Computer, 33(11), 37-44.

* Zhi Li has completed the MTech degree in industrial engineering at the Department of Industrial Engineering, Durban University of Technology.

** Corresponding author