Stellenbosch Papers in Linguistics Plus (SPiL Plus)

On-line version ISSN 2224-3380

Print version ISSN 1726-541X

SPiL plus (Online) vol.56 Stellenbosch 2019

http://dx.doi.org/10.5842/56-0-782

ARTICLES

Definition and design: Aligning language interventions in education

Albert Weideman

Faculty of the Humanities, University of the Free State E-mail: albert.weideman@ufs.ac.za

ABSTRACT

The management of language diversity and the level of mastery of language required by educational institutions affect those institutions from early education through to higher education. This paper deals with three dimensions of how language is managed and developed in education. The first dimension is the design of interventions for educational environments at policy level, as well as for instruction and for language development. The second concerns defining the kind of competence needed to handle the language demands of an academic institution. The interventions can be productive if reference is made throughout to the conditions or design principles that language policies and language courses must meet. The third dimension concerns meeting an important requirement: the alignment of the interventions of language policy, language assessment and language development (and the language instruction that supports the latter). This paper employs a widely-used definition of "academic literacy" to illustrate how this definition supports the design of language assessments and language courses. It is an additional critical condition for effective intervention design that assessments and language instruction (and development) work together in harmony. Misalignment among them is likely to affect the original intention of the designs negatively. Similarly, if those interventions are not supported by institutional policies, the plan will have little effect. The principle of alignment is an important, but not the only, design condition. The paper will therefore conclude with an overview of a comprehensive framework of design principles for language artefacts that may serve to enhance their responsible design.

Keywords: language interventions; language courses; language assessments; language policies; academic literacy; design principles

1. Introduction: deliberate solutions instead of remedies built on assumption

It was H.L. Mencken (1880-1956) who observed that "For every complex problem there is an answer that is clear, simple, and wrong". It cannot be denied that problems of language, and specifically the use of language within institutions, from the state to schools and universities, are indeed complex problems. For one thing, they are inevitably more than merely language problems, a reality that in itself signals their complexity. In addition, it is one of the theses of this paper that a good deal of the enduring intractability of institutional language problems can be ascribed to their solutions being built on unexamined (and usually simple) assumptions. We continue to be plagued by these problems because we avoid the effort to question our own prejudice, and, sometimes, the biases built into the fabric of the institutions we are part of, or even the way we perceive our institutional or communal status. Elsewhere (Weideman 2006, 2018, 2019), I have given examples of how language solutions that are arrived at intuitively to remedy low levels of academic literacy, however attractive or sensible they may appear to the uncritical eye, could well be tripped up by what they forget to examine: hidden assumptions that might inhibit a more defensible and potentially more effective solution. One glaring and well-known further example is the choice by some lower-middle- and middle-class parents of the language in which their children must receive education. With all the prejudice that accompanies their communal perception of their languages as ones of lower status, they refuse to see the thoroughly researched point about the benefits of instruction of their children in their first language (Lo Bianco 2017). They view arguments for that not as valid, but rather as the prejudice of others, and specifically of politically and historically untrustworthy others, who (still) do not have their best interests at heart.

The complexities of the use of language in educational institutions are the particular focus of this contribution. The problem is complex because we are dealing here not only with "language" in general, but with a specific type and kind of language - academic discourse (Patterson and Weideman 2013a). The problem of our time that has engaged many of us professionally for more than a decade now is the doubt that surrounds the competent use of language for academic purposes in an era of massification in higher education (Read 2015, 2016). This massive broadening of access to university education - not only in South Africa, but globally - constitutes the first unexamined assumption, as The Economist (2018) pointed out recently:

Policymakers regard it as obvious that sending more young people to university will boost economic growth and social mobility. Both notions are intuitively appealing […] But comparisons between countries provide little evidence of these links […] In a comparison of the earnings of people with degrees and people without them, those who start university but do not finish are lumped in with those who never started, even though they, too, will have paid fees and missed out on earnings […] Including dropouts when calculating the returns to going to university makes a big difference.

The returns, in fact, are in the latter case often substantially less than one quarter of the expected rate, and even lower in rich countries. The question that is seldom asked is whether a country needs more people with degrees, or more skilled people. As The Economist (2018) article being referred to here goes on to point out, about half of unemployed South Koreans now have degrees. Given the reality of massification, however, we are left with students who arrive at university underprepared, also as regards the ability they have to handle the demands of the particular kind of language they must use in and for tertiary education (Cliff 2019).

In South Africa, the problem is further compounded by the mismatch between language assessment and language instruction at school, as well as the disharmony between the curriculum (Department of Basic Education 2011) and the school-leaving, exit examinations for language (Du Plessis, Steyn and Weideman 2016). There is, moreover, a lack of alignment between language preparation at school, and the expectations of universities about levels of academic literacy, the ability to use academic language. In addition, in the study referred to here, there is clear evidence of the ongoing unequal treatment of certain language groups, as if the complexity of equitably dealing with a multilingual diversity is not enough of a challenge (Weideman, Du Plessis and Steyn 2017). The problems of being competent to handle language affect education at all levels - from early on, at the emergent literacy stage that one encounters in the pre-school setting (Gruhn and Weideman 2017), right through to secondary school (Myburgh 2015) and beyond. The focus of this discussion, however, will be academic literacy, the ability to use language competently in higher education, from which most of the examples will come.

The argument of this contribution will be that, in order to propose deliberate and theoretically defensible solutions for managing both diversity and competence in language within education, it will be profitable to begin with an examination of some of the prominent conditions and requirements we (uncritically) use or abuse when making plans to solve language problems. By coming up with theoretically defensible solutions, the designers of these interventions are not, by virtue of seeking such theoretical justification, subscribers to or mere victims of modernist intentions. By seeking rational grounds for their designs, they do not ipso facto start out from the bias that "theoretical" or "scientific" is always best, always authoritative or always preferable. The history of applied linguistics, the discipline that examines the design of language interventions and plans, indicates that those theoretical defences we can make today will almost certainly no longer be fully valid tomorrow (Weideman 2017). The theoretical rationales for language plans are always open to challenge and change, as of course are their political, ethical, social, juridical or economic justifications. Such is the dynamic nature of our current reality. In tackling the language problem in an applied linguistic way, we take a detour into theory and analysis in an effort to enhance the degree of sophistication as well as the efficiency of the designed or planned solution. Such a deliberate approach should at the same time serve to raise our awareness that the other conditions and requirements for our designs are more than merely theoretical. Exactly because planned interventions are designed solutions, these requirements or conditions together constitute the design principles to which technical artefacts are subject.

Though it is not the first phase of the design, the theoretical defence of the designed language intervention should happen as early as possible in the design process, in order for the initial plan (and the uncritical assumptions that may support it) to be theoretically examined and corrected, if necessary. So the theoretical justification for the plan is an important part of the design, and its essence is the definition of the language competence in the educational environment chosen here for illustration. That definition, which is dependent on theoretical insight, will be discussed below, after we have first considered the various phases in the design of these artefacts. The discussion will serve to raise the issue of an important design principle: the alignment of language instruction and assessment, and both of those with institutional language policy or policies. Once we have considered how and whether these three kinds of applied linguistic artefacts - language policy, language course and language assessment - work together in harmony, we shall also be able to check, with reference to real-life examples, the hidden assumptions behind their mismatch in educational settings.

Finally, the technical alignment of the multiplicity of interventions that present themselves as applied linguistic solutions to the institutional language problems that will be discussed below is not the only design principle or condition at work in this complex case. The analysis will therefore present us with a framework of technically stamped design principles that attempts to be more comprehensive, and strives to provide the basis for the responsible design of the language interventions envisaged.

2. The phases of language intervention design

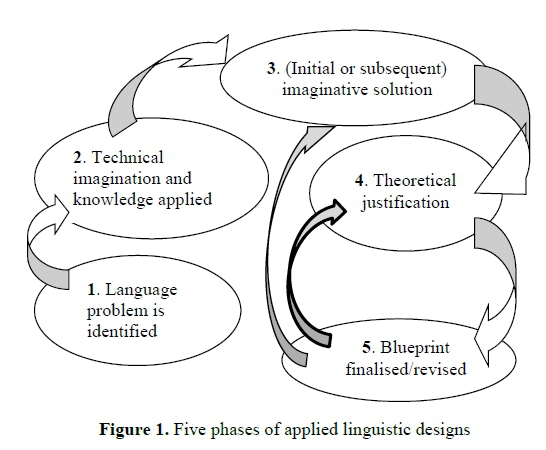

The degree of deliberation with which language interventions are designed gains more relief when we consider the five phases of such design, with their various sub-stages. In language assessment, the most well-known model of these phases is the eight-cycle one of Fulcher (2010: 94); Read (2015:176-177) discusses a five-stage process, starting with planning, which is followed by design, operationalisation, trialling and use. Yet the first of these models is unsatisfactory on at least one critical count: it places the theoretical justification of the design in initial position. It can be demonstrated, however, that in real-life language intervention design, there are at least two preceding phases before that point is reached. So for this discussion, I have chosen to adapt the three-stage model for designed artefacts proposed by Schuurman (1972: 404) by adding at least another two, and to provide, furthermore, for iteration, a cycling back between phases. The following gives a diagrammatic representation of the phases thus distinguished (adapted from Weideman 2009: 244-245):

I shall use this five-phase model, with its potential to cycle back from its fifth to either its fourth or even third phase, to illustrate the various possible combinations of policy, curriculum (instructional), and assessment intervention designs that one may find in actual institutional processes.

3. How policy, curriculum and assessment may be configured in various phases of design

Phase 1

Institutions are made up not only of individuals, but also of various communities, all of which are in interaction. Institution- or sector-specific language problems, such as low levels of academic literacy, are thus first articulated by one or more of the communities that make up the institution. In the case of a university, its institutional awareness of a language problem - for example, unacceptably low levels of language ability among students - perhaps first grows and is identified among its teaching community, the lecturers and academic staff, before being articulated by its administrative and executive decision-makers. This awareness and the identification of the language problem that characterises it are clearly the first phase of designing an intervention (or set of interventions) to deal with the problem.

One would think that academic literacy, the ability to use academic language competently, would be the first and only concern in academic communities. Such is the complexity of language problems, however, that not all such problems at university will be those that have to do with making education and study more effective: student communities may, for example, make language demands that are primarily politically inspired, and have little bearing on scholarship. When decision-makers yield to the politically expedient solution, that solution may be rationalised in many ways that might have the pretence of having to do with education, but that actually have no theoretical justification. There are at least two recent cases in South Africa where the language policies of universities were changed for reasons other than academic ones, with negative consequences that were foreseen, but ignored.

With regard to Phase 1 of the intervention design, we may observe that this phase may have up to four or more sub-stages: in the first sub-stage, the problem is (communally) identified; in the second, it is officially (administratively) acknowledged; in the third, it is further articulated; and in the fourth, a decision is made to allocate institutional resources to solve it. There is nothing scientific (yet) about any of these stages. All are part and parcel of the practical, sometimes day-to-day, work of an institution. The solution can even be arrived at simply: through what is most expedient politically, or most conventionally appealing, or perhaps least costly. That simple and apparently less costly solution can, however, in the long run come to have substantial waste (and therefore cost) associated with it.

The institutional acceptance of the use of academic literacy tests at some (then multilingual, now only nominally so) institutions of higher education in the past decade or so, and specifically the adoption of the Test of Academic Literacy Levels (TALL) and its Afrikaans counterpart, the Toets van Akademiese Geletterdheid (TAG), illustrates the sub-stages in the design of a language solution well. When, as a result of massification, students whose academic literacy levels were in doubt were being accepted by these universities, their teaching communities produced sufficient anecdotal evidence for their administrative and executive branches to respond to what was identified as a risk as regards the language ability of some students. It was only subsequently discovered that the problem was not restricted to those for whom the languages of instruction - then used institutionally - were additional, not first languages, but in fact prevailed among the student community as a whole. When eventually the academic literacy levels of all students were measured, including those of students whose first languages were the languages of instruction (English and Afrikaans), it illustrated the view that learning to use language for academic purposes is like the learning of another, initially somewhat unfamiliar language (Patterson and Weideman 2013a). In most of the universities referred to here, adopting the measurement of language ability as a first step in solving the problem was already an indication that they were making a deliberate institutional choice, rather than adopting a simple solution. What is more, institutional policy prescriptions were instituted to make the taking of the language test obligatory and, in the case of at least one institution, to make enrolment on a language development course in academic literacy compulsory as well. This was accompanied by another thoughtful arrangement: language courses became a requirement for all students, though at different levels (placement on which was determined by the assessment).

What if a simple solution had been sought, or a less deliberate one? What if the articulation of the problem had never progressed beyond the familiar but imprecise academic rhetoric that would have been employed in identifying it (for example, "These students can't write a coherent sentence, let alone a paragraph" or "These students don't know the first thing about grammar")? The immediate intuitive solution might then have been: we should set up a writing centre, or we should place at-risk students on a grammar improvement course. To solve the problem of identifying those students who, according to this simple and premature diagnosis, are unable to write or are incompetent in grammar, a commercially available test might have been sought, found, and paid for by raising student fees. If the problem has not been identified with much deliberation, the selection of an inappropriate language test is more than likely to be presented as solution. In a word: the problem may never be referred for applied linguistic scrutiny. It may equally well be that the commercial test acquired and administered is inadequate and theoretically indefensible (Van Dyk and Weideman 2004), and therefore inappropriate. What is more, and relevant for the current discussion: such an externally designed test may also lack alignment with the intentions of both the institutional language policy and with the language instruction that may follow the administration of the test. The permutations of misalignment are numerous, but all are potentially debilitating.

Phase 2

Phase 2 concerns the preparation for implementing and administering the interventions selected. Here, the technical creativity and imagination of those responsible for implementation come into play, along with whatever theoretical or sometimes pseudo-intellectual knowledge seems relevant for devising a solution.

Misalignment among the interventions adopted may already begin to show. The draft institutional language policy may have been thus formulated that it distorts the actual situation, say, in respect of the diversity of languages that are deemed worthy of being accommodated institutionally (a refrain in the latest draft language policy for higher education; Department of Higher Education 2018).

Let us assume, however, that those tasked to design the interventions are applied linguists who are likely to take a deliberate route, and, even more happily, are critical of pat and conventional solutions. The scenario that may then unfold is that they are wary of selecting a commercial test, or of making one that is biased towards a view of language ability as narrowly defined grammatical competence, or of language as being made up of disparate "skills" (listening, speaking, reading, and writing). In such a case, they might rather, as was the case at one South African university in the previous decade (Weideman 2003), base their intervention design - both of assessment, and of language instruction and language development - on a communicative, functional, and interactive view of language. Not only did this allow alignment of assessment, instruction, and learning, but there was also no conflict with institutional policy. Once again, the choice will then be not for a simple, but for an admittedly complex solution, and one that would certainly be more appropriate for the problem.

Phase 3

In the third stage of language intervention design, we find the further development of the initial (preparatory) formulation of an imaginative solution to the problem. Here, the technical fantasy and imagination of the designers of the language intervention take the lead. So strong are they, in fact, that, for the moment, they override analytical corrections. Other, practical considerations come into play: what kind of resources are institutionally available to mount the interventions? Would a language plan that prescribes an assessment to be obligatory be possible in terms of institutional regulations? Are there enough human resources to offer a course for the development of language ability, should the assessment identify too low levels of language ability? What will the administration of the designed interventions cost, and what would be the benefits if one set of designs, instead of another, were adopted? In short: what technical means are available to achieve the designed ends? It is this relation between means and ends that will determine whether an ambitious design may have to be modified in order to make the eventual execution of the design affordable.

Phase 4

Once again, this phase of the language intervention designs may stretch over several sub-stages. The first of these is to find a theoretical justification for the preparatory solutions suggested in the previous phase. It goes without saying that theory that is current rather than conventional is to be preferred: mainstream and conventional justifications may already have influenced the technical imagination of the intervention designers in phases two and three. If there is to be any innovation in the design, we need new theoretical stimuli to underlie the proposed interventions. Where new theoretical justifications are difficult to come by or unavailable, empirical and analytical arguments (with reference, for example, to recent case studies of planned solutions to comparable language problems) may be sought and advanced. There must be analytical deliberation in the plan. Without that, the plans do not qualify as applied linguistic artefacts.

The second sub-stage takes the definition of what kind of language is characteristic of the institution several steps further. At this stage, that definition acts as the construct of what the idea of language is that lies at the heart of the interventions that have been conceived, and might be modified in light of that idea. For academic institutions, it has been summarised as follows:

Academic discourse […] includes all lingual activities associated with academia, the output of research being perhaps the most important. The typicality of academic discourse is derived from the (unique) distinction-making activity which is associated with the analytical or logical mode of experience.

(Patterson and Weideman 2013a: 118)

In this second stage of Phase 4 of the design, that definition may well be further operationalised, which means, for language assessment, that it may be further analysed in order to identify its components. I shall return below to the steps that may then follow. The important point here, though, is that this kind of definition may have a salutary and corrective effect on the designs that have been preliminarily proposed. For the institutional language plan, it may show, for example, that extraneous factors have been allowed to confuse the issues that really need regulating, namely about how to prioritise the use of language (or several languages) in order to make learning possible. For assessments, it might show a bias towards an outdated, skills-based justification for the design, instead of a functional, cognitive, and potentially innovative one. For language development designs, it may reveal a reliance on courses based on views of language that consider it to be merely a combination of sound, form, and meaning. All of these considerations indicate that the initial solutions designed (Phase 3) cannot yet be given the final form of a blueprint (Phase 5); those solutions must be revisited so that the theoretical justifications adopted for them (Phase 4) can become credible defences of the design.

The newly adopted theoretical justification will also then ensure that the specifications of the components of the plan can be refined, and the results of a trialling of the design can be empirically gathered and analysed. An institutional language plan, for example, may first be tested out over a period of six months or a year, during which solid records are kept by reputable designers, not in-house politicians. Similarly, a language assessment may first be piloted, or a new kind of language instruction may be given a trial run, again accompanied by noting and recording design weaknesses and strengths. All these activities will feed into the fifth phase of the design.

Phase 5

The endpoint of the design process for language interventions, its fifth and final phase, comes when enough empirical and analytical information about the workings of the revised designs has become available so that the blueprints of the interventions can be finalised. Such finalisation includes taking into account results of trials and perhaps reception studies (especially in the case of language assessments and courses). In the case of policy interventions, reception studies could be equally useful, followed by an analysis of the results of wide consultations or even a trial implementation period. Conventionally, this is called the "initial validation" of the intervention. The whole intervention might now or later, depending on the urgency with which it needs to be implemented, again be systematically checked against all the principles of responsible design that we shall be returning to below.

What if these analyses have found obvious but hitherto unforeseen and unanticipated design flaws, such as too low levels of reliability in the case of language tests, or low acceptability by users of a language course, or high levels of resistance in the case of a language policy? Or unanticipated negative consequences in any of these? If that is the case, the intervention designers have little option but to revert to phase four. In reconsidering their theoretical rationale, or re-imagining the components of the intervention, they should not be surprised if it calls for making unexpected compromises (Knoch and Elder 2013: 62f.). Before the next attempt at finalising the blueprint, it will again have to be put on trial, otherwise some design principles - notably those that require the intervention to have technical reliability, adequacy, intuitive appeal, and appropriateness - will not be satisfied (for a discussion, see the section below on "A comprehensive framework…").
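The cycling back described here can be made concrete in a minimal sketch. It is offered only as an illustration of the five-phase model with iteration, not as part of the model itself: the phase labels, the function names and the representation of trial results as simple true/false values are all assumptions introduced for the example.

```python
from enum import IntEnum


class Phase(IntEnum):
    # Shorthand labels (assumed for illustration) for the five phases discussed above.
    PROBLEM_IDENTIFICATION = 1
    PREPARATION = 2
    IMAGINATIVE_DESIGN = 3
    THEORETICAL_JUSTIFICATION = 4
    BLUEPRINT = 5


def next_phase(current, flaws_found=False, revert_to=Phase.THEORETICAL_JUSTIFICATION):
    """Return the phase that follows `current`, or None once the blueprint is final."""
    if current is Phase.BLUEPRINT:
        if not flaws_found:
            return None  # blueprint finalised, no further iteration needed
        # The model allows cycling back from phase 5 to phase 4 or even phase 3.
        if revert_to not in (Phase.IMAGINATIVE_DESIGN, Phase.THEORETICAL_JUSTIFICATION):
            raise ValueError("can only cycle back to phase 3 or phase 4")
        return revert_to
    return Phase(current + 1)


def run_design(trial_outcomes):
    """Trace the phases visited.

    `trial_outcomes` holds one boolean per arrival at phase 5:
    True means the trial exposed design flaws, False means it did not.
    """
    outcomes = iter(trial_outcomes)
    phase = Phase.PROBLEM_IDENTIFICATION
    path = [phase]
    while True:
        flaws = next(outcomes, False) if phase is Phase.BLUEPRINT else False
        phase = next_phase(phase, flaws)
        if phase is None:
            return path
        path.append(phase)


# One failed trial followed by a successful one: the design returns to phase 4 once.
print([int(p) for p in run_design([True, False])])
```

In this sketch a single failed trial sends the design back to the fourth phase before the blueprint is attempted again; passing `revert_to=Phase.IMAGINATIVE_DESIGN` to `next_phase` would model the deeper return to the third phase.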

One must note that, in language intervention design across all these phases, it is not theory that plays a leading role, but the technical imagination and fantasy of the persons making the plan. In one particular phase, the fourth, the theoretical defensibility becomes crucial, and then it is done to support (and keep supporting) the design and its justification. That is the focus of the discussion in the next section.

4. The critical importance of a theoretically defensible definition

This paper depends primarily on experience in course and test design, but it is worthwhile at least to make some tentative claims for the kind of intervention that is known variously as a "language policy" or "language plan". With assessment and course design, producing a theoretically relevant and defensible definition of the kind of language that will be developed or tested should always be supremely important. Without it, the results claimed for the intervention become virtually uninterpretable. So in language testing and course design, we depend on the construct of the language ability which is the focus of these interventions. In respect of academic language ability, this paper refers cursorily only to the latest rationale, but its history and how it was arrived at are discussed at length elsewhere (Patterson and Weideman 2013a, 2013b; Weideman, Patterson and Pot 2016; Cliff 2014, 2015); the paradigmatic origins of this in the work of sociolinguists such as Hymes (1972), Habermas (1970) and Halliday (1978) have also been noted in more detail in Weideman, Du Plessis and Steyn (2017).

If we start the theoretical justification with an idea of academic language as characterised by distinction-making (see above, the discussion of phase 4 of the intervention), then, at least in the case of language course and test design, we should take further this definition of academic language ability - the mastery of language that is logically stamped for the sake of making academic arguments, or what is called, in shorthand form, "academic literacy". How to take this further will depend on the typicality of the kind of intervention design - whether it is a language plan, course or test. For language tests and courses, for example, making the construct operational so that it becomes measurable, teachable and learnable may begin with articulating it in terms of several components. The latest formulation of these components below is taken and adapted from Weideman (2019); it defines the ability to use language for academic purposes as a functional ability to:

-

understand and use a range of academic vocabulary as well as content or discipline-specific vocabulary in context;

-

interpret the use of metaphor, idiom and non-literal expression in academic language, and perceive connotation, word play and ambiguity;

-

understand and use specialised or complex grammatical structures correctly, also to handle texts with high lexical diversity, containing formal prestigious expressions, and abstract/technical concepts;

-

understand relations between different parts of a text, be aware of the logical development and organisation of an academic text, via introductions to conclusions, and know how to understand and eventually use language that serves to make the different parts of a text hang together;

-

understand the communicative function of various ways of expression in academic language (such as defining, providing examples, inferring, extrapolating, arguing);

-

interpret different kinds of text type (genre) with a sensitivity for the meaning they convey, as well as the audience they are aimed at;

-

interpret, use and produce information presented in graphic or visual format in order to think creatively: devise imaginative and original solutions, methods or ideas through brainstorming, mind-mapping, visualisation, and association;

-

distinguish between essential and non-essential information, fact and opinion, propositions and arguments, cause and effect; and classify, categorise and handle data that make comparisons;

-

see sequence and order, and do simple numerical estimations and computations that express analytical information, that allow comparisons to be made, and can be applied for the purposes of an argument;

-

systematically analyse the use of theoretical paradigms, methods and arguments critically, both in respect of one's own research and that of others;

-

interact with texts both in spoken discussion and by noting down relevant information during reading: discuss, question, agree/disagree, evaluate and investigate problems, analyse;

-

make meaning of an academic text beyond the level of the sentence; link texts, synthesise and integrate information from a multiplicity of sources with one's own knowledge in order to build new assertions, draw logical conclusions from texts, with a view finally to producing new texts, with an understanding of academic integrity and the risks of plagiarism;

-

know what counts as evidence for an argument, extrapolate from information by making inferences, and apply the information or its implications to other cases than the one at hand;

-

interpret and adapt one's reading/writing for an analytical or argumentative purpose and in light of one's own experience and insight, in order to produce new academic texts that are authoritative yet appropriate for their intended audience.

For testing the language ability thus articulated, these components can then be further matched up with task types that might be used to assess the level of mastery (again taken and adapted from Weideman 2019):

Such matching is taken yet another step further when specifications are drawn up for the various subtests that have been selected from a list such as those mentioned in the second column above. The specifications may include the format of the subtests (for example, multiple choice or open-ended questions), and the weighting chosen for each. Below is an example of the design specifications of an academic literacy test for senior social sciences students about to embark on postgraduate study. Its name, the Assessment of Preparedness to Present Multimodal Information (APPMI), reflects the purpose of the test, while the chosen design and relative importance of its elements are presented in Table 2:

In the case of course design, there may similarly be such a detailed level of specification, with each new level demonstrably tied to the original construct ("academic literacy"). Is this the case in making language plans and devising language management strategies? If not, then there is perhaps something to learn in this regard from the other two kinds of language intervention design. That level of sophistication in an institutional language policy might well serve to enhance the quality of that kind of language intervention too, as would adherence to the set of language intervention design principles to which we now turn.
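To make concrete what a subtest specification with formats and weightings involves, the following is a minimal sketch in Python. It assumes only what is stated above: that a specification records, for each subtest, its format and its weighting. The class and function names, the placeholder subtests and the weights are all hypothetical, invented for illustration; they do not reproduce the APPMI specification.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SubtestSpec:
    """One row of a hypothetical test specification."""
    name: str         # subtest label (placeholder)
    item_format: str  # e.g. "multiple choice" or "open-ended"
    weight: float     # share of the total mark, in percent


def validate_spec(subtests):
    """Check that all weights are positive and sum to 100 percent."""
    if any(s.weight <= 0 for s in subtests):
        raise ValueError("every subtest needs a positive weight")
    total = sum(s.weight for s in subtests)
    if abs(total - 100.0) > 1e-9:
        raise ValueError(f"weights sum to {total}, expected 100")
    return True


def weighted_total(subtests, fraction_correct):
    """Combine per-subtest results (0.0 to 1.0) into a percentage."""
    validate_spec(subtests)
    return sum(s.weight * fraction_correct[s.name] for s in subtests)


# Placeholder subtests and weights, invented for illustration only;
# they are NOT taken from the APPMI design.
EXAMPLE_SPEC = [
    SubtestSpec("subtest_a", "multiple choice", 60.0),
    SubtestSpec("subtest_b", "open-ended", 40.0),
]

score = weighted_total(EXAMPLE_SPEC, {"subtest_a": 0.5, "subtest_b": 1.0})
print(score)  # 70.0
```

Keeping the weights in one explicit structure, and checking that they sum to 100, is one simple way of ensuring that the relative importance assigned to each component of the construct remains visible and open to scrutiny when the specification is revised.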

5. A comprehensive framework of design principles for language interventions

A critical condition for effective language intervention design is that assessments and language instruction (and development) work together in harmony. Misalignment among them is likely to affect the original intention of the designs negatively. Above, in the discussion of the various phases of language intervention design, we have noted several instances where, on being implemented, such designs were discovered to be out of sync, in particular as regards their views of language. A purely grammatical or structural view of language that lies behind an assessment might conflict with and contradict the functional perspective that supports the instruction as well as the language development that it is intended to nurture. Similarly, if language courses and tests are not made possible and maintained by institutional policies, the combined effect of the interventions may suffer. The principle of alignment among various language interventions, at least within the same institution, is an important, but of course not the only, design principle. There are more than the two principles that have been prominent in the discussion so far, namely the planned harmony among interventions, and their theoretical justification.

To articulate the other language intervention design principles, this paper will again not justify their conceptualisation in the same detail as has been done elsewhere (e.g. Weideman 2009, 2014), but will summarise them below in order to give an indication of how they apply generally across the designs for language policies, language courses, and language tests. The summary below is taken and adapted from Weideman (2017: 225), with the key concepts in italics, and each principle numbered for the sake of discussion:

1. Systematically integrate the multiplicity of components of the intervention, so that they work as a unity to achieve the purpose of the intervention; also use multiple sets of evidence in arguing for the validity of the language plan, language test or language course design.

2. Specify clearly to the users of the design, and where possible to the public, the appropriately limited scope of the language policy, the assessment instrument or the intervention, and exercise humility in doing so. Avoid overestimating, or making inappropriate claims about, what the proposed solution can in fact accomplish.

3. Ensure that the policies set out, the measurements obtained or the instructional opportunities envisaged are consistent, and obtain, if possible, empirical evidence for the reliability of the solution designed.

4. Ensure effective language strategy, measurement or instruction by using defensibly adequate policies, instruments or material.

5. Have an appropriately and adequately differentiated plan, course or test.

6. Make the plan, course or test intuitively appealing and acceptable.

7. Mount a theoretical defence of what is adopted as policy, or what is taught and tested, in the most current terms, or at least in terms of clearly articulated alternative theoretical paradigms or perspectives, or the analysis of empirical data.

8. Make sure that the policy is well-articulated and intelligible; that the test yields interpretable and meaningful results; or that the course is intelligible and clear in all respects.

9. Make accessible to as many as are affected by them not only the plan, course or test, but also additional information about them, through as many and diverse media as are appropriate and feasible.

10. Ensure utility; make the policy an efficient measure, or present the course and obtain the test results efficiently and ensure that all are useful.

11. Mutually align the policy with the test or language development that it prescribes, and the test with the instruction that will either follow or precede it, harmonising policy, test and instruction as closely as possible with the learning or language development foreseen in their design.

12. Be prepared to give account to the users as well as to the public of how the policy has been arrived at, how the test has been or will be used, or what the course is likely to accomplish.

13. Value the integrity of the policy, test or course; make no compromises of quality that will undermine their status as instruments that are fair to everyone, and that have been designed with care and love, with the interests of the end-users in mind.

14. Spare no effort to make the policy, course or test appropriately trustworthy and reputable.

For their conceptualisation, all of these principles depend on the assumption that design is a technically stamped activity, in the sense of having at its core the idea of shaping, influencing, forming, arranging, facilitating, or planning. In designing language interventions, the technical imagination and fantasy of the designer of the intervention lead the way. The technical sphere of our experience connects with other dimensions of that experience: for example, the numerical (whence we may derive principle 1 above, that refers to the designed intervention being required to be a unity within a multiplicity of components), the spatial (principle 2), the kinematic (that allows us to conceive of principle 3, the reliability of the designed intervention), the physical sphere of energy-effect (principle 4), and so on for the organic, the sensitive, logical, lingual, social, economic, aesthetic, juridical, ethical, and confessional dimensions. Because of these connections, the other dimensions are analogically reflected in the technical aspect. The analogical connections between the technical and the other dimensions allow us to conceptualise various technical principles that we can employ to design language interventions responsibly.

The language interventions that are conceived of and studied within the field of applied linguistics go further than merely being designs and plans, however. They achieve a level of deliberation and sophistication by allowing the plans that are made to be further informed, and even corrected by theory and analysis (principle 7 above). Where theory is lacking or perhaps not available for justification, at least a set of empirical analyses - say, in the form of case studies - will support the analysis.

6. What uses do these design principles have?

The purpose of this paper has been to focus in particular on principles 7 (theoretical defensibility) and 11 (the required alignment of various interventions) of the list of language intervention design principles in the previous section. It should be clear that the application of principle 7 is an antidote to and a corrective for a number of others, but also for non-adherence to principles 1 and 11, which call on us to make our designs integrated solutions, a unity within a multiplicity of subcomponents, and then to attempt to align them with others. The intention of aiming for technical harmony here is to eliminate contradiction and conflict, and so achieve the desired effect (principle 4). The application of the principles is thus mutually reinforcing, the one supporting the achievement of the other.

As regards theoretical defensibility, the claim that is made here is that if that consideration never enters into the process of design, the simple solution will trump the deliberate, the politically expedient is likely to prevail over the rational, the conventionally acceptable will triumph over the imaginative, mediocrity will score another victory over excellence, and prejudice deriving from perceptions of status will stifle innovation and creativity. There is no single component of the design process that is immune to the blight of bias, a lack of imagination, or ideologically inspired resistance to considering alternatives. Should that level of deliberation be in abeyance, the applied linguistic intervention will lack effect and utility; the absence of such deliberation will make the intervention less defensible and less trustworthy. Designed responsibly, however, our language interventions stand a better chance of satisfying the twelfth and thirteenth conditions above: serving those affected with care and love, with their best interests in mind, and transparently and openly doing so.

References

Cliff, A. 2014. Entry-level students' reading abilities and what these abilities might mean for academic readiness. Language Matters 45(3): 313-324. https://doi.org/10.1080/10228195.2014.958519

Cliff, A. 2015. The National Benchmark Test in academic literacy: How might it be used to support teaching in higher education? Language Matters 46(1): 3-21. https://doi.org/10.1080/10228195.2015.1027505

Cliff, A. 2019. The use of mediation and feedback in a standardised test of academic literacy: Theoretical and design considerations. Chapter submitted for L.T. du Plessis, J. Read and A. Weideman (eds.) Transformation and transition: Perspectives from the south on pre- and post-admission language assessment in institutions of higher education. Forthcoming publication from Multilingual Matters.

Department of Basic Education. 2011. Curriculum and assessment policy statement: Grades 10-12 English Home Language. Pretoria: Department of Basic Education.

Department of Higher Education and Training. 23 February 2018. Draft language policy for higher education. Government Gazette 632, No. 41463, Notice 147. Pretoria: Government Printing Works.

Du Plessis, C., S. Steyn and A. Weideman. 2016. Die assessering van huistale in die Suid-Afrikaanse Nasionale Seniorsertifikaateksamen - Die strewe na regverdigheid en groter geloofwaardigheid. LitNet Akademies 13(1): 425-443.

Economist, The. 3 February 2018. Going to university is more important than ever for young people; but the financial returns are falling. Available online: https://www.economist.com/news/international/21736151-financial-returns-are-falling-going-university-more-important-ever (Accessed 9 December 2018). https://doi.org/10.1787/0091933b-en

Fulcher, G. 2010. Practical language testing. London: Hodder Education.

Gruhn, C.M.S. and A. Weideman. 2017. The initial validation of a Test of Emergent Literacy (TEL). Per Linguam 33(1): 25-53. https://doi.org/10.5785/33-1-698

Habermas, J. 1970. Toward a theory of communicative competence. In H.P. Dreitzel (ed.) Recent Sociology Vol. 2. London: Collier-Macmillan. pp. 115-148.

Halliday, M.A.K. 1978. Language as social semiotic: The social interpretation of language and meaning. London: Edward Arnold. https://doi.org/10.1017/s004740450000782x

Hymes, D. 1972. On communicative competence. In J.B. Pride and J. Holmes (eds.) Sociolinguistics: Selected readings. Harmondsworth: Penguin. pp. 269-293.

Knoch, U. and C. Elder. 2013. A framework for validating post-entry language assessments (PELAs). Papers in Language Testing and Assessment 2(2): 48-66.

Lo Bianco, J. 2017. Multilingualism in education: Equity and social cohesion - considerations for TESOL. Paper read at the Summit on the Future of the TESOL Profession, Athens, 9-10 February 2017. Available online: http://www.tesol.org/docs/default-source/advocacy/joseph-lo-bianco.pdf?sfvrsn=0 (Accessed 12 July 2018).

Myburgh, J. 2015. The Assessment of Academic Literacy at Pre-university Level: A Comparison of the Utility of Academic Literacy Tests and Grade 10 Home Language Results. MA dissertation, University of the Free State. Available online: http://hdl.handle.net/11660/2081 (Accessed 1 April 2019).

Patterson, R. and A. Weideman. 2013a. The typicality of academic discourse and its relevance for constructs of academic literacy. Journal for Language Teaching 47(1): 107-123. https://doi.org/10.4314/jlt.v47i1.5

Patterson, R. and A. Weideman. 2013b. The refinement of a construct for tests of academic literacy. Journal for Language Teaching 47(1): 125-151. https://doi.org/10.4314/jlt.v47i1.6

Read, J. 2015. Assessing English proficiency for university study. Basingstoke: Palgrave Macmillan.

Read, J. (ed.). 2016. Post-admission language assessment of university students. Cham: Springer International Publishing.

Schuurman, E. 1972. Techniek en toekomst: Confrontatie met wijsgerige beschouwingen. Assen: Van Gorcum.

Van Dyk, T. and A. Weideman. 2004. Switching constructs: On the selection of an appropriate blueprint for academic literacy assessment. SAALT Journal for Language Teaching 38(1): 1-13. https://doi.org/10.4314/jlt.v38i1.6024

Weideman, A. 2003. Assessing and developing academic literacy. Per Linguam 19(1&2): 55-65. https://doi.org/10.5785/19-1-89

Weideman, A. 2006. Overlapping and divergent agendas: Writing and applied linguistics research. In C. Van der Walt (ed.) Living through languages: An African tribute to Rene Dirven. Stellenbosch: African Sun Media. pp. 147-163. https://doi.org/10.18820/9781920109714

Weideman, A. 2009. Constitutive and regulative conditions for the assessment of academic literacy. Southern African Linguistics and Applied Language Studies 27(3): 235-251. https://doi.org/10.2989/salals.2009.27.3.3.937

Weideman, A. 2014. Innovation and reciprocity in applied linguistics. Literator 35(1): 1-10. Also appeared in pre-publication format in X. Deng and R. Seow (eds.). Alternative pedagogies in the English language and communication classroom: Proceedings of the 4th CELC Symposium. Available online: http://www.nus.edu.sg/celc/research/books/4th%20Symposium%20proceedings/7).%20Albert%20Weideman.pdf (Accessed 9 December 2018).

Weideman, A. 2017. Responsible design and applied linguistics: Theory and practice. Cham: Springer International Publishing.

Weideman, A. 2018. What is academic literacy? [Introduction.] In A. Weideman Academic literacy: Five new tests. Bloemfontein: Geronimo Distribution. Available online: https://www.academia.edu/36293073/Academic_literacy_Five_new_tests_Introduction (Accessed 9 December 2018). https://doi.org/10.4314/jlt.v47i1.6

Weideman, A. 2019. A skills-neutral approach to academic literacy assessment. Chapter submitted for L.T. du Plessis, J. Read and A. Weideman (eds.) Transformation and transition: Perspectives from the south on pre- and post-admission language assessment in institutions of higher education. Forthcoming publication from Multilingual Matters.

Weideman, A., C. du Plessis and S. Steyn. 2017. Diversity, variation and fairness: Equivalence in national level language assessments. Literator 38(1): a1319. Available online: https://literator.org.za/index.php/literator/article/view/1319 (Accessed 1 April 2019). https://doi.org/10.4102/lit.v38i1.1319

Weideman, A., R. Patterson and A. Pot. 2016. Construct refinement in tests of academic literacy. In J. Read (ed.). Post-admission language assessment of university students. Cham: Springer International Publishing. pp. 179-196. https://doi.org/10.1007/978-3-319-39192-2_9