SA Journal of Industrial Psychology

On-line version ISSN 2071-0763

Print version ISSN 0258-5200

SA j. ind. Psychol. vol.39 n.1 Johannesburg Jan. 2013

ORIGINAL RESEARCH

Development of the learning programme management and evaluation scale for the South African skills development context

Maelekanyo C. Tshilongamulenzhe (I); Melinde Coetzee (II); Andries Masenge (III)

(I) Department of Human Resource Management, University of South Africa, South Africa

(II) Department of Industrial and Organisational Psychology, University of South Africa, South Africa

(III) Office of Graduate Studies and Research, University of South Africa, South Africa

ABSTRACT

RESEARCH PURPOSE: The present study developed and tested the construct validity and reliability of the learning programme management and evaluation (LPME) scale.

MOTIVATION FOR THE STUDY: The LPME scale was developed to measure and enhance the effectiveness of the management and evaluation of occupational learning programmes in the South African skills development context. Currently no such instrument exists in the South African skills development context; hence there is a need for it.

RESEARCH DESIGN, APPROACH AND METHOD: This study followed a quantitative, non-experimental, cross-sectional design using primary data. The LPME scale was administered to a sample of 652 skills development practitioners and learners or apprentices drawn from six organisations representing at least five economic sectors in South Africa. Data were analysed using SPSS and Rasch modelling to test the validity and reliability of the new scale.

MAIN FINDINGS: The findings show that the LPME scale is a valid and reliable 11-dimensional measure comprising 81 items.

PRACTICAL/MANAGERIAL IMPLICATIONS: In view of the seriousness of the skills shortage challenge facing South Africa, this study provides a solid base upon which skills development practitioners can effectively manage and evaluate occupational learning programmes. Furthermore, the newly developed LPME scale provides a basis for further human resource development research in the quest for a solution to the skills shortage challenge.

CONTRIBUTION/VALUE-ADD: This study contributes by developing a new scale and testing its validity and reliability. As a valid and reliable measure, the LPME scale can be applied with confidence in various South African workplaces.

Introduction

Key focus of the study

South Africa faces a critical challenge of skills shortage, which is seriously threatening economic growth and employment creation (Arvanitis, 2006; Hermann, 2008; Lamont, 2001; South African Institute of Race Relations [SAIRR], 2008). Du Toit (2012) and Goga and Van der Westhuizen (2012) regard the situation as a paradox of skills shortages in the workplace and high levels of unemployment. A skilled workforce is a critical determinant of global competitiveness (Kruss et al., 2012). In a time of global economic recession, debt crises and burgeoning unemployment, skills and capabilities are even more significant. Thus, in order to advance, or simply keep up, countries have to develop their technological capabilities and increase their share of knowledge-intensive and complex activities that require higher skills levels, both in general and in relation to the technological trajectory of specific sectors (Kruss et al., 2012).

Occupational learning programmes are touted as a fundamental mechanism to address skills shortages in the South African context; hence, vocational and occupational certification via learnership and apprenticeship programmes is at the core of the new skills creation system. An occupational learning programme includes a learnership, an apprenticeship, a skills programme or any other prescribed learning programme that includes a structured work experience component (Republic of South Africa, 2008). These programmes are inserted into a complex and increasingly bureaucratised qualification and quality assurance infrastructure. Learning programmes are administered by the Sector Education and Training Authorities (SETAs), which are in effect a set of newly created institutions that have yet to develop capacity to drive skills development (Marock, Harrison-Train, Soobrayan & Gunthorpe, 2008). This study is timely because South Africa is not producing enough of the right levels and kinds of skills to support its global competitiveness and economic development (Janse Van Rensburg, Visser, Wildschut, Roodt & Kruss, 2012). The focus of this study is on the development and testing of the construct validity of the learning programme management and evaluation (LPME) scale, which is intended to measure and enhance the effectiveness of the management and evaluation of occupational learning programmes in the South African skills development context. The literature currently offers no evidence that such an instrument exists in South Africa, which is the gap this study addresses.

Background to the study

A number of challenges, as briefly outlined below, have been raised regarding the coordination and management of skills development training projects in South Africa (Du Toit, 2012), including poor quality of training and lack of mentorship. An impact assessment study of the National Skills Development Strategy (NSDS) (Mummenthey, Wildschut & Kruss, 2012) revealed the prevalence of differences in standards across the different occupational learning routes, which brought about inconsistencies regarding procedures to implement training. This was found to significantly impact on the uniformity and reliability of the outcome, resulting in confusion amongst providers and workplaces. The inconsistent implementation of workplace learning demonstrates that more guidance and improved quality assurance mechanisms are required.

Further, the study by Mummenthey et al. (2012) revealed that there is a lack of structured and sufficiently monitored practical work exposure as well as full exposure to the trade, particularly in the case of apprenticeships in the workplace. Quality checks were found to be superficial: checking policies and procedures, but not thoroughly checking what is actually happening during training. The primarily paper-based checks (sometimes adding learner interviews) were found to be insufficient and 'completely missing the point' (Mummenthey et al., 2012, p. 40). A lack of subject matter expertise often reduced the process of quality assurance to a paper proof instead of actually assuring the quality of training. The overall alignment of theory and practice could be better achieved through setting and maintaining a consistent benchmark for training at institutional and workplace levels. Minimum standards in terms of learning content and workplace exposure, together with a common standard for exit level exams, can considerably strengthen consistency in outcomes, implementation and assessment (Mummenthey et al., 2012). These standards will positively affect transferability of skills between workplaces, and thus the overall employability of learners.

In the context of few post-school opportunities, learnerships and apprenticeships are potentially significant routes to vocational and occupational qualifications in South Africa (Wildschut, Kruss, Janse van Rensburg, Haupt & Visser, 2012). These programmes represent important alternative routes to enhance young people's transition to the labour market, and to meet the demand for scarce and critical skills. A 2008 review of SETAs showed that the skills development system suffers from weak reporting requirements, underdeveloped capacity, lack of effective management and inadequate monitoring and evaluation that limit the ability of these institutions to serve as primary vehicles for skills development (Marock et al., 2008).

These shortcomings are indicative of management and evaluation weaknesses impacting the South African skills development system and they raise serious concerns about the quality of occupational learning. Therefore, this study seeks to curtail these management and evaluation weaknesses by developing a valid and reliable measure for the effective management and evaluation of occupational learning programmes in the South African skills development context, where no such instrument currently exists.

The objective of the study is to develop and test the construct validity and reliability of an LPME scale based on the theoretical framework proposed by Tshilongamulenzhe (2012) for the effective management and evaluation of occupational learning programmes in the South African skills development context. The newly developed scale was necessitated by the need for an integrated and coherent approach towards occupational learning programme management and evaluation, with a view to effectively promoting the alignment of skills development goals with the needs of the workplace in support of the goals of the NSDS (2011-2016).

Trends from the literature

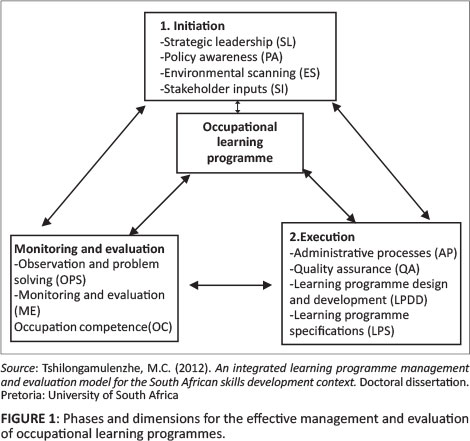

No evidence has been found in the literature to date of an existing valid and reliable measure of the effectiveness of management and evaluation of occupational learning programmes in the South African skills development context. Despite this paucity of literature, the theoretical framework for the management and evaluation of occupational learning programmes in the South African skills development context proposed by Tshilongamulenzhe (2012) is of relevance to the current study. Tshilongamulenzhe identified three phases relevant to the effective management and evaluation of occupational learning programmes: (1) initiation, (2) execution and (3) monitoring and evaluation. As illustrated in Figure 1, each of these three phases contains specific elements that are critical to the effective management and evaluation of a learning programme.

Phase I: Initiation

In the context of this study, initiation refers to the way an organisation scans its environment (external and internal) and uses the inputs obtained to plan and organise for the successful delivery of an occupational learning programme. The relevant inputs include legislative guidelines, needs analysis results and the resources (both human and financial) required to achieve the objectives of an occupational learning programme. The elements in this phase are strategic leadership, policy awareness, environmental scanning and stakeholder inputs (Tshilongamulenzhe, 2012).

Strategic leadership

This element focuses on how organisational leaders drive human resource development (HRD) policy and strategy in order to facilitate the achievement of the objectives of an occupational learning programme. Also examined are the organisation's governance system and how an organisation fulfils its legal, ethical and societal responsibilities and supports its key communities. Senior leaders have a central role to play in setting values and directions, communicating, creating and balancing value for all stakeholders and creating an organisational bias for action (National Institute of Standards and Technology [NIST], 2010). Strategic leadership also relates to the way leaders develop and facilitate the achievement of the mission and vision, develop values required for long-term success and implement these via appropriate actions and behaviours and how they are personally involved in ensuring that the organisation's management system is developed and implemented (European Foundation for Quality Management [EFQM], 1999). The Canadian National Quality Institute (NQI, 2001) and the South African Excellence Foundation (SAEF, 2005) describe leadership as creating the culture, values and overall direction for lasting success in an organisation. The behaviour of the executive team and all other leaders inspires, supports and drives a culture of business excellence (SAEF, 2005). It is this behaviour that creates clarity and unity of purpose in the organisation and an environment in which the organisation and its people can excel (EFQM, 1999; SAEF, 2005). Since the skills development providers take operational custodianship of occupational learning programmes, it is significant that they exercise sound leadership in order to manage these programmes successfully (Bisschoff & Govender, 2004).

Policy awareness

Policy awareness involves an organisation's analysis of the legislation that entrenches occupational learning programmes, in order to inform and guide the design and implementation of those programmes. The relevant legislation includes the Skills Development Act (as amended) and the National Qualifications Framework Act. Based on the provisions of these two pieces of legislation, an organisation can clearly formulate and effectively implement its HRD policies and strategies. An organisation must implement its mission and vision via a clear stakeholder-focused strategy, supported by relevant policies, plans and objectives. A successful organisation formulates policy and strategy in collaboration with its people and this process should be based on relevant, up-to-date and comprehensive information and research (EFQM, 1999). The policy and strategy must be clearly formulated, deployed and revised and should be operationalised into plans and actions (SAEF, 2005). However, in the South African context, organisational policies for training also need to be aligned with the skills development legislation. For example, training policies should make provision for cost benefit analysis since the skills development legislation demands that a cost benefit analysis be completed to determine the benefits of annual training investments (Bisschoff & Govender, 2004). In the Indian context, by comparison, formal apprenticeships were introduced through the Apprentices Act of 1961, which requires employers in notified industries to engage apprentices in specified ratios in relation to the workforce. The Central Apprenticeship Council outlines the policies and different norms and standards of apprenticeship training in the country (Palit, 2009). Hence knowledge of legislative instruments that influence organisational training policies is vital to the success of occupational learning programmes.

Environmental scanning

This element of the initiation phase entails an analysis of an organisation's external and internal environments in order to draw inputs necessary to plan and organise for the successful delivery of an occupational learning programme. This includes an analysis of the relevant legislation, facilities, relevant equipment and the availability of both the financial and human resources. The award criteria of the Malcolm Baldrige National Quality Award (MBNQA) cite environment as one of the overarching guides for an organisational performance management system (NIST, 2010). The MBNQA stresses that long-term organisational sustainability and an organisation's competitive environment are key strategic issues that need to be integral parts of an organisation's overall planning. Organisational and personal learning are necessary strategic considerations in today's fast-paced environment. Knowledge of the way an organisation determines its key strengths, weaknesses, opportunities and threats, its core competencies and its ability to execute the strategy are essential for the organisation's survival (NIST, 2010).

In the South African context, the Quality Council for Trades and Occupations (QCTO) model of quality management emphasises that workplace approval as learning sites for occupational learning programmes will be granted after evidence is produced that such workplaces have the ability to provide a work experience component (Department of Higher Education and Training [DHET], 2010). Hence environmental considerations are vital for the successful delivery of occupational learning programmes. It is imperative for skills development providers, who are the custodians of occupational learning programmes in South Africa, to define the scope of an occupational learning programme. The process of scoping could be done successfully once the environment in which these programmes are to be implemented is carefully analysed. The scope will identify the inputs, range, criteria, stakeholders and outcomes of the programme. Once the scope has been defined, the programme should be scheduled according to relevant times, dates and stakeholders (Bisschoff & Govender, 2004).

Similarly, Kirkpatrick and Kirkpatrick (2006) indicate that adequate consideration should be given to the learning environment and conditions when evaluating training. Stufflebeam and Shinkfield (2007) also focus on the importance of context when evaluating training programmes. They believe that the training context defines the relevant environment, identifies needs and assets and diagnoses specific problems that need to be addressed. Furthermore, Bushnell (1990) emphasises the importance of evaluating system performance indicators such as trainee qualifications, the availability of materials and the appropriateness of training. This view is also supported by Fitz-Enz (1994), who states that collecting pre-training data to ascertain current levels of performance in the organisation and defining a desirable level of future performance are key aspects of training evaluation. He also emphasises the need to identify the reason for the existence of a gap between the present and desirable performance in order to ascertain whether training is the solution to the problem.

Stakeholder inputs

This element focuses on the way an organisation identifies and relates to its key stakeholders, who are critical for the successful delivery of an occupational learning programme. These stakeholders include potential learners, skills development providers (including assessors and moderators), coaches and mentors (supervisors and managers). According to the EFQM (1999) and the SAEF (2005), excellence in an organisation is dependent upon balancing and satisfying the needs of all relevant stakeholders (this includes the people employed, customers, suppliers and society in general as well as those with financial interests in the organisation). An organisation is seen as part of society, with key responsibilities to satisfy the expectations of its people, customers, partners, owners and other stakeholders, including exemplary concern for responsibility to society (NQI, 2007).

However, from an occupational learning programme perspective, skills development providers must integrate their activities in any organisation by working with the skills development facilitators, assessors, other skills development practitioners, managers and learners. They must employ project management skills in order to manage diverse roles and responsibilities of all key stakeholders and to evade crisis management situations (Bisschoff & Govender, 2004). Equally significant, and from a training evaluation perspective, Kirkpatrick and Kirkpatrick (2006) suggest that along with the evaluation of learners, the programme coordinators, training managers and other qualified observers' reactions to the facilitator's presentation should also be evaluated. The success of learners during a training programme therefore also depends on the roles played by other stakeholders.

Phase II: Execution

This phase focuses on the ways in which an organisation plans, designs, implements and manages occupational learning programmes in accordance with the legislative guidelines and its policy and strategy in order to achieve the programme's objectives, and to fully satisfy and generate increasing value to its stakeholders. The elements include administrative processes, quality assurance, learning programme specifications and learning programme design and development (Tshilongamulenzhe, 2012).

Administrative processes

This element focuses on the critical activities required to support the successful delivery of an occupational learning programme. These include the recruitment, selection and placement of stakeholders. These processes also involve consultation with the successful candidates, clarification of roles and responsibilities, and, finally, the conclusion of contractual arrangements (Davies & Faraquharson, 2004). The EFQM emphasises the importance of the way in which an organisation designs, manages and plans its processes in order to support its policy and strategy and fully satisfy, and generate increasing value for, its customers and other stakeholders (EFQM, 1999). Organisations perform more effectively when all interrelated activities are understood and systematically managed, and decisions concerning current operations and planned improvements are made using reliable information that includes stakeholder perceptions (SAEF, 2005). This includes the way an organisation plans and manages its internal resources in order to support its policy and strategy and the effective operation of its processes.

An organisation's processes must be managed effectively to support its strategic direction, with a specific focus on prevention (as opposed to correction), as well as continuous improvement. Process management applies to all activities in the organisation, in particular those that are critical for success (NQI, 2007). It should be borne in mind that an organisation is a network of interdependent value-adding processes, and improvement is achieved through understanding and changing these processes in order to improve the total system. To facilitate long-term improvements, a mindset of prevention as opposed to correction should be applied to eliminate the root causes of errors and waste. Hence an organisation's resources and information should be managed and utilised effectively and efficiently and its operating processes should be constantly reviewed and improved (SAEF, 2005). These work processes and learning initiatives should be aligned with the organisation's strategic directions, thereby ensuring that improvement and learning prepare the organisation for success.

From an evaluation perspective, Stufflebeam and Shinkfield (2007) indicate that inputs should be evaluated in order to assess the system capabilities by looking into its resources and how they can best be applied to meet the programme's goal. Hence an effective and efficient management of organisational processes and resources is significant for the successful implementation of occupational learning programmes.

Quality assurance

This element relates to the way an organisation promotes and assures quality in the design and implementation of occupational learning programmes. Occupational learning programmes must be practice driven, relevant and responsive to the needs of an occupation (Department of Labour [DoL], 2008a). The Canadian NQI (2007) emphasises that the best way to keep things on track in an organisation is to apply a quality assurance method to everything that is done. This view is supported by the SAEF, which based the South African Excellence Model (SAEM) on the concepts of formulating quality policies, assigning responsibility for quality to top management, managing quality procedures and control, reviewing improvement processes, delegating authority and empowering the workforce (SAEF, 2005). From an occupational learning programme perspective, however, Bisschoff and Govender (2004) emphasise the importance of quality when stating that skills development providers, employers and learners must achieve quality standards of performance during these programmes. They contend that effective skills development providers should strive to promote excellence and quality in an occupational learning programme.

In the new occupational learning system (OLS) landscape in South Africa, the QCTO controls the quality of provision, assessment and certification by applying specified criteria in terms of the approval of regulated occupational learning programmes (DHET, 2010). The regulatory and quality assurance functions of SETAs are coordinated through the QCTO in order to use resources more effectively. In the end, quality monitoring and audits by the QCTO will be conducted constantly as required on the basis of complaints and final assessment results. The SETAs' quality assurance role involves quality monitoring of programme implementation, and programme evaluation research, including impact assessment.

Quality assurance of occupational learning programmes ensures the predictability and repeatability of processes under the organisation's control against the strategic criteria in the quality management system (Vorwerk, 2010). It is largely an issue of quality control (DHET, 2010). In the Indian context, the quality of apprenticeship training is only as good as the skills of the master and their willingness and ability to pass on those skills (Palit, 2009). To this end, quality must permeate every aspect of an occupational learning programme, if such a programme is to succeed.

Learning programme specifications

This element focuses on the way an occupational learning programme is structured. Typically, an occupational learning programme contains three core aspects, namely knowledge and theory, practical skills and work experience (DHET, 2010; DoL, 2008b). The knowledge and theory component comprises various subject specifications (QCTO, 2011). Knowledge here refers to discipline or conceptual knowledge (including theory) from a recognised disciplinary field found on subject classification systems, such as the Classification of Educational Subject Matter (CESM), which an individual has to have in order to perform proficiently the tasks identified in the occupational profile. The knowledge identified is frequently common to a group of related occupations at the same level in the same National Occupational Pathway Framework (NOPF) family, and the level of knowledge to be covered will be built on the knowledge base held by those entering from lower level occupations within the relevant NOPF family (QCTO, 2011). The subject specifications are developed by educationists based on inputs from expert practitioners and are packaged as standardised courses to enable providers to plan their delivery and access standardised funding.

The practical skills component derives from the roles to be performed (QCTO, 2011). It comprises various practical skill module specifications. Practical skills are defined as the ability to do something with dexterity and expertise. Skill grows with experience and practice, and can lead to unconscious and automatic actions. Practical skills are more than just the following of rule-based actions and include practical and applied knowledge (QCTO, 2011). The purpose of practical skills training is to develop the needed skills (including applied, practical and functional knowledge) to operate safely and accurately in the actual working environment (so as not to cause damage to people, equipment, systems and the business).

Practical skills are, therefore, mostly developed in a safe, simulated environment (such as a workshop) in preparation for actual work (QCTO, 2011). The module specifications are developed by expert practitioners and trainers based on the practical skills (including the applied, practical and functional knowledge) required to execute the occupational responsibilities, in terms of the tasks identified in the occupational profile (QCTO, 2011).

Work experience is defined as the exposure and interactions required to practise the integration of knowledge, skills and attitudes required in the workplace. Work experience includes the acquisition of contextual or in-depth knowledge of the specific working environment. The work experience module specifications are developed by expert practitioners, based on the work experience activities required within the specific occupational context in terms of the tasks identified in the occupational profile. Work experience modules will be reflected as work experience unit standards in the occupational qualification (QCTO, 2011). The purpose of work experience is to structure the experiences and activities (including contextual knowledge) to which the learner needs to be exposed in order to become competent in the relevant occupation.

Learning programme design and development

This element focuses on the way an organisation plans and designs its occupational learning programmes. It entails the use of relevant unit standards and logbooks, the format of presentation, the assessment scheme to be used and the outcome of the learning process (Scottish Qualifications Authority, 2009). The new OLS landscape in South Africa demands that during the development phase of occupational qualification curricula, a development facilitator should be appointed to guide and direct various working groups, which are responsible for the development of an occupational profile, the development of learning process design and the development of assessment specifications (DHET, 2010). The QCTO will have to assure quality of development and design tasks by applying nationally standardised processes and systems (DHET, 2010). The design of a learning programme determines its outcomes.

As Kirkpatrick and Kirkpatrick (2006) indicate, if a learning package is of sound design, it should help the learners to bridge a performance gap. They suggest that if a programme is carefully designed, learning can be evaluated fairly and objectively whilst the training session is being conducted. Stufflebeam and Shinkfield (2007), however, suggest that the evaluation of training programme inputs helps to determine the general programme strategy for planning and procedural design, and whether outside assistance is necessary. Bushnell (1990) suggests that evaluation should embrace the planning, design, development and delivery of training programmes. Occupational learning programmes should thus be carefully designed, taking into account the needs of all stakeholders and industry and national interests.

Phase III: Monitoring and evaluation

This phase is concerned with the systematic implementation and post-implementation monitoring and evaluation of the occupational learning programme. The elements include observation and problem solving, monitoring and evaluation, and occupational competence (Tshilongamulenzhe, 2012).

Observation and problem solving

This element entails regular observation visits by SETA representatives or designated agents to sites of delivery (classrooms, workshops, workplaces, etc.) in order to monitor learners' progress for the duration of the occupational learning programme. In Singapore (Chee, 1992), on-the-job training of apprentices is structured and backed by a comprehensive documentation and monitoring system. From the point of placement of an apprentice in a company, the Institute for Technical Education (ITE) begins a programme of monitoring the particular apprentice's progress for the full duration of their training. ITE officers visit the company regularly, at intervals of about two to three months, to ensure that the training is in accordance with the training structure and on schedule, to monitor the apprentice's progress and performance through direct observation and dialogue with their supervisor, and to attend to any matters pertaining to the performance and welfare of the apprentice. Based on the observations made, the officers initiate the necessary follow-up with the apprentice, the company or the relevant ITE headquarters departments.

Monitoring and evaluation

This element focuses on the monitoring and evaluation of an occupational learning programme. The NQI emphasises the importance of monitoring and evaluation of the progress made towards meeting the goals of the organisation (NQI, 2007). In South Africa, the QCTO will conduct research to monitor the effectiveness of learning interventions in the context of the larger occupational learning system. The process of monitoring and evaluation revolves around the development and design processes, the implementation of occupational learning programmes and data analysis and impact assessment (qualitative and quantitative) (DHET, 2010). Sector Education and Training Authorities will have to focus on monitoring and evaluation of the implementation of occupational learning programmes in line with DHET regulations.

A systematic appraisal should be made of on-the-job performance on a before-and-after basis. Stufflebeam and Shinkfield (2007) also support the evaluation of actual training programme activities because this provides feedback on managing the process and recording and judging the work effort. Furthermore, Bushnell (1990) emphasises the significance of gathering data resulting from the training interventions. Fitz-Enz (1994) believes that evaluating the difference between the pre- and post-training data is vital to establish the actual value of a training intervention. The experience in Singapore, as reported by Chee (1992), is such that on-the-job training of apprentices is strictly supervised and the supervisor certifies the completion of each task in the logbook, thus closely monitoring the progress of the apprentice in following the task list.

Occupational competence

This element entails an assessment of a learner's ability to function effectively and provide products or services relating to the relevant occupation. This may include working together with others in a team in order to achieve performance improvement in the relevant occupation in an organisation. An evaluation of the post-training occupational affiliation is necessary in this dimension (Florence & Rust, 2012). The acquisition of new skills and knowledge is of no value to an organisation unless the participants actually use them in their work activities (Kirkpatrick & Kirkpatrick, 2006). Phillips (1997) also emphasises the importance of measuring change in behaviour on the job and specific application of the training material. Successful occupational learning programmes should impart the relevant skills to learners so that they can competently and effectively function in their respective occupations. In the new OLS landscape in South Africa, occupational learning programmes are evaluated, inter alia, on the appropriateness and relevance of skills that learners acquire, learners' enhanced employability and enhanced productivity and quality of work (DHET, 2010). Equally important, Kirkpatrick and Kirkpatrick (2006) indicate that it is necessary to measure learners' performance because the primary purpose of training is to improve results by having the learners acquire new skills and knowledge and then actually apply them to the learners' jobs. The purpose of the present study was to develop and test the construct validity and reliability of the LPME scale.

Research design

Research approach

A quantitative, non-experimental, cross-sectional survey design was used in order to achieve the objective of this study. The study used primary data collected from five SETAs and a human resource professional body in South Africa.

Research method

A description of the research methodology will follow.

Research participants

In this study, a sample of 900 respondents was drawn from six organisations: five SETAs and the South African Board for People Practices (SABPP), using a probabilistic simple random sampling technique. The sample was drawn from the databases of these organisations and the target participants were learning managers and employers, mentors and supervisors of learners or apprentices, skills development officers and providers, learning assessors and moderators as well as learners and apprentices. The assumption was that all sampled participants had adequate knowledge of the South African skills development system, including occupational learning programmes. In view of this, the sample drawn was deemed representative of the research population. A total of 652 usable questionnaires were received from the administration process, yielding a response rate of 72.4%.

The sample used in the present study comprised mainly young people in the early career stage of their lives. About 78.8% were aged younger than 35 years and only 3.3% older than 56 years. The sample was diverse in terms of gender, educational achievement, type of learning programme and occupational profile. The gender composition shows that about 52.8% of respondents were women. About 58.8% of the respondents achieved a senior certificate (Matric/N3) as their highest qualification; 4% did not complete matric. The results also show that only 13.9% of the respondents achieved a professional (four years) or honours, master's or doctorate degree. About 86.6% of the respondents were involved in learnerships compared to 13.4% who were involved in apprenticeships. Just over 65% of the respondents were learners and apprentices, and 9% were employers and managers.

Measuring instrument

The newly developed LPME scale consisted of 113 items, measuring the elemental aspects outlined in the theoretical framework proposed by Tshilongamulenzhe (2012). The instrument used a six-point Likert scale with a response format ranging from 'strongly agree' to 'strongly disagree'. Construct validity and internal consistency reliabilities were examined by means of exploratory factor analysis (EFA). Unidimensionality of the refined LPME scale was assessed by means of Rasch analysis. The dimensions included in this scale were: strategic leadership, policy awareness, environmental scanning, stakeholder inputs, administrative process, quality assurance, learning programme design and development, learning programme specifications, observation and problem solving, monitoring and evaluation, and occupational competence. Sample items included (1) Occupational learning programme content must cover all aspects that are needed in the workplace and related to a specific occupation; (2) The skills development provider, mentor and supervisor must be knowledgeable about an occupation for which the learner is training; (3) The design of the practical modules must incorporate practical skills that will enable learners to fulfil relevant occupational responsibility; (4) Policies must be in place for learner entry, guidance and support system; and (5) The nomination or selection of experienced workplace mentors and supervisors must be handled carefully with the objectives of the programme in mind.

Scale development procedure: The procedure of scale development suggested by Clark and Watson (1995) was followed in the development of the LPME scale; this included the conceptualisation of the construct, item generation, item development and item evaluation and refinement.

Conceptualisation of the constructs: Learning programme management has been defined in this study as a process of planning, coordinating, controlling and activating organisational operations and processes to ensure effective and efficient use of resources (human and physical) in order to achieve the objectives of an occupational learning programme (Trewatha & Newport, 1976).

Equally important in this study, learning programme evaluation is defined as a process of collecting descriptive and judgemental information on the programme's components (e.g. context, input factors, process activities and actual outcomes) to determine whether the programme has achieved its desired outcomes (Stufflebeam, 2003).

Item generation: In item generation, the primary concern is content validity, which may be viewed as the minimum psychometric requirement for measurement adequacy. This is the first step in construct validation of a new measure (Schriesheim, Powers, Scandura, Gardiner & Lankau, 1993). Content validity must be built into the measure through the development of items. As such, any measure must adequately capture the specific domain of interest and contain no extraneous content. In this study, a clear link was established between items and their theoretical domain. This was accomplished by beginning with a strong theoretical framework regarding skills development, occupational learning systems, training management and evaluation models, and by employing a rigorous sorting process that matched items to construct definitions.

Item development: Once the scope and range of the content domain have been tentatively identified, the actual task of item writing can begin (Clark & Watson, 1995). Writing scale items is challenging and time-consuming (Mayenga, 2009). In this study, a large pool of items was written and carefully reviewed by the researcher with the assistance of the research supervisor. The review process aimed to determine as far as possible whether the items were clearly stated, whether the items conformed to the selected response format, whether the response options for each item were plausible, and whether the wording was familiar to the target population. An initial pool of 186 items was generated during this stage, based on a review of the literature.

Item evaluation and refinement: As Benson and Clark (1982) state, an instrument is considered to be content valid when the items adequately reflect the process and content dimensions of the specified aims of the instrument as determined by expert opinion. As part of the content validation, a sample comprising 27 skills development experts and apprentices and learners reviewed the pool of 186 items with instructions to assess the face and content validity, to evaluate the relevance of the items to the dimensions they were intended to measure, to assess the importance of the items, to assess the item difficulty level (easy, medium, difficult), and to judge items for clarity. The goal was to obtain a reasonable number of items that would constitute the final draft measure. Item quality and content relevance for the final draft of the scale were determined based on the strength of the literature and expert reviewers' comments. A decision to retain items for the final draft was made based on the content validity results of expert review regarding item clarity, relevance and importance. Content validity, item importance, relevance and clarity were estimated using a Content Validity Ratio (CVR) with direct feedback that was collected from the panel of 27 skills development experts. CVR was calculated for each of the 186 items from the reviewers' responses on three criteria: 'item is important', 'item is relevant' and 'item is clear'.
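
The CVR calculation can be sketched as follows. This is a minimal illustration that assumes the ratio follows Lawshe's (1975) formulation, the source cited for the cut-off value; the function name and arguments are hypothetical and the reviewer counts are invented for the example, not taken from the study data.

```python
def content_validity_ratio(n_agree, n_panel):
    """Lawshe-style CVR (assumed formulation): (n_e - N/2) / (N/2).

    n_agree: number of reviewers endorsing the item on a criterion
    n_panel: total number of reviewers on the panel
    """
    half_panel = n_panel / 2
    return (n_agree - half_panel) / half_panel


# Illustrative counts only: a panel of 27 reviewers.
full_agreement = content_validity_ratio(27, 27)   # every reviewer endorses the item
one_dissenter = content_validity_ratio(26, 27)    # a single reviewer does not
```

Under this formulation the ratio equals 1 only when every reviewer endorses the item, and it drops to roughly .93 when one of 27 reviewers dissents on a criterion.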

The expert review results showed a clear ranking of each item in terms of relevance, importance and difficulty. All items were consistently ranked using CVR and the results ranged from an average CVR of .84 to 1 overall. However, in view of the fact that CVR values less than 1 demonstrate that not all reviewers agree on the relevance, clarity and importance of some items, the researcher decided that a CVR cut-off point of .96 would be appropriate in order to eliminate from the draft research instrument items that may not be clear, relevant and important to experts. This cut-off point of .96 is above the minimum value of .37 (p < .05) for 27 experts as suggested by Lawshe (1975, p. 568) and supported by Wilson, Pan and Schumsky (2012, p. 10).

Subsequent to this decision, the results of expert review on item importance, relevance and clarity showed that 33 items had a CVR of 1, showing agreement across the board amongst experts; 24 items had a CVR of between .986 and .987; 43 items had a CVR of between .972 and .975; only nine items had a CVR of between .960 and .963. Consequently, all items below a CVR of .96 were eliminated, except for four best-averaged items below this cut-off point in two dimensions that were included to ensure that each dimension had at least five items. Each pair of these four retained items had the highest CVR below the cut-off point (.933 and .947 respectively) in their respective theoretical dimensions. In the final analysis of the expert inputs, the revised instrument had 113 items in total, which were measured on a six-point Likert scale, ranging from 'strongly agree' to 'strongly disagree'. All items were classified into the appropriate dimension and each dimension had at least five items.

Research procedure

Permission to undertake this research was sought from all 21 SETAs and the SABPP. The researcher wrote official letters of request for permission to all Chief Executive Officers of 21 SETAs. Unfortunately, only five of the 21 SETAs gave permission for the research to be undertaken within their jurisdictions. Permission was also obtained from the SABPP. Once permission to undertake the research was granted, the researcher started the process of planning for sampling and data collection within the respective organisations. Five fieldworkers and a project administrator were appointed to render the data collection service and project fieldwork management support. The project management support included assistance to the fieldworkers and the researcher, management and capturing of data. The fieldwork took place in three of South Africa's nine provinces, that is, Mpumalanga, North West and Gauteng, over a period of three months.

The questionnaire distributed to respondents had a cover letter, which informed respondents of the purpose and significance of the research, and that their participation was voluntary and subject to their consent. Also included in the letter was the time required to complete the questionnaire as well as assurance that respondents could discontinue their voluntary participation at any time. The cover letter also assured respondents of their anonymity and the confidentiality of their responses, which would only be used for the current research purposes.

In order to ensure a high degree of internal validity between the different fieldworkers, a number of criteria had to be met when appointing fieldworkers (Leedy & Ormrod, 2001, p. 103).

Fieldworkers were required to at least have a bachelor's degree in Human Resource Management (HRM) and knowledge of research methodology. A qualification in HRM provides a broader understanding of training, learning and human resource development issues and this knowledge was important to address questions that respondents may raise. The project administrator was required to have some experience with the research process, including logistics management, project management, data management and data capturing.

A briefing session in which the fieldworkers and the administrator were trained on various aspects pertaining to this research was also arranged. In addition, several demonstrations of the data collection procedure and data management were performed with the fieldworkers and the administrator respectively to ensure that they understood the process and complied with the ethical principles. Both the fieldworkers and the administrator demonstrated a high level of knowledge and competence, as observed during interactions with the researcher before data collection began.

The reason for conducting physical fieldwork was to try to mitigate the low response rate commonly experienced with web surveys. The researcher decided to exclude the other six provinces from the survey as they were already represented in the electronic distribution of the questionnaire. The electronic distribution was carried out concurrently with the drop-in and pick-up approach in order to reach target participants who were located or deployed in other provinces. Each of the six organisations that participated in the study had members in all nine provinces of South Africa. Respondents were informed of the research and its purpose by their organisations using online newsletters, email and the website. An active web link to the questionnaire was sent to respondents by their organisations along with a cover letter on the organisation's letterhead. The cover letter also stipulated the time frame for the survey, and informed the respondents of their rights to participate and provided assurance of anonymity and confidentiality.

Statistical analysis

In order to achieve the objective of this research, data were analysed using the Statistical Package for Social Sciences (SPSS, Version 20) (IBM, 2011) and Winsteps (Version 3.70.0) (Linacre, 2010). SPSS was used for EFA, whilst Winsteps was used for the Rasch analysis. EFA included the diagnostic tests (Kaiser-Meyer-Olkin and Bartlett's test of sphericity) and principal component analysis (PCA). Rasch analysis included person or item separation indices, measure order and PCA.

Results

Exploratory factor analysis

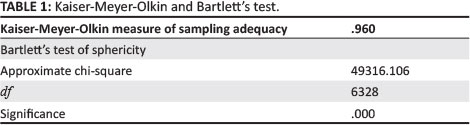

Exploratory factor analysis is based on the correlation matrix of the variables involved. Tabachnick and Fidell (2001, p. 588) offer the following guidance regarding sample size for EFA: 50 is very poor, 100 is poor, 200 is fair, 300 is good, 500 is very good, and 1000 or more is excellent. In the present study, a sample size of 652 cases (response rate of 72.4%) was considered appropriate for factor analysis. Two initial tests (the Kaiser-Meyer-Olkin measure of sampling adequacy and Bartlett's test of sphericity) were performed to establish adequacy of the sample and the appropriateness of the correlation matrix for factoring. The results are shown in Table 1.

The Kaiser-Meyer-Olkin (KMO) index of .96 in the present study indicates that the items in the new LPME scale were suitable for factor analysis (Kline, 1994), and, therefore, the factorial structure to be obtained from the PCA will be acceptable. The Kaiser-Meyer-Olkin index is a measure indicating how much the items have in common. A KMO value closer to 1 indicates that the variables have a lot in common. Bartlett's test of sphericity was also conducted to test the null hypothesis that 'the correlation matrix is an identity matrix'. An identity matrix is a matrix in which all the diagonal elements are 1 and off-diagonal elements are 0. Bartlett's test of sphericity was statistically significant (df = 6328; p < .001) and rejects the null hypothesis that 'the correlation matrix is an identity matrix'. The determinant of the correlation matrix between the factors was set to zero due to orthogonal rotation restriction which imposes that the factors cannot be correlated. Taken together, the results of these tests meet a minimum standard that should be passed before a PCA is conducted.
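
The two diagnostics can be illustrated with a short NumPy sketch. It is not the SPSS procedure used in the study: the function names are hypothetical, the formulas are the standard textbook definitions (Bartlett's chi-square approximation and the overall KMO based on partial correlations), and any data passed in would need to be a respondents-by-items array.

```python
import numpy as np


def bartlett_sphericity(data):
    """Return (chi_square, df) for Bartlett's test of sphericity."""
    n, p = data.shape
    corr = np.corrcoef(data, rowvar=False)
    # log-determinant is more stable than log(det(corr)) for many items
    _, log_det = np.linalg.slogdet(corr)
    chi_square = -(n - 1 - (2 * p + 5) / 6) * log_det
    df = p * (p - 1) // 2
    return chi_square, df


def kmo_index(data):
    """Return the overall Kaiser-Meyer-Olkin measure of sampling adequacy."""
    corr = np.corrcoef(data, rowvar=False)
    inv_corr = np.linalg.inv(corr)
    # partial correlations derived from the inverse correlation matrix
    scale = np.sqrt(np.outer(np.diag(inv_corr), np.diag(inv_corr)))
    partial = -inv_corr / scale
    off_diagonal = ~np.eye(corr.shape[0], dtype=bool)
    sum_r2 = np.sum(corr[off_diagonal] ** 2)
    sum_partial2 = np.sum(partial[off_diagonal] ** 2)
    return sum_r2 / (sum_r2 + sum_partial2)
```

With 113 items the degrees of freedom are 113 × 112 / 2 = 6328, which matches the value reported for Bartlett's test above.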

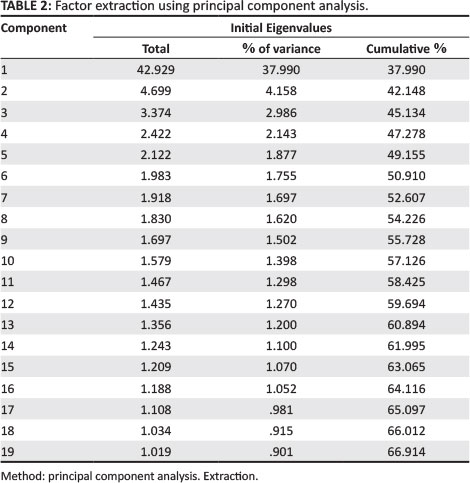

Principal component analysis

Nineteen strong factors with an eigenvalue greater than 1 were extracted from the PCA. The first residual factor accounted for the most unexplained variance (42.92 eigenvalue units). These are shown in Table 2. Whilst an eigenvalue of 1 represents the norm in the literature (and often the default in most statistical software packages), a cut-off point of 1.45 eigenvalue units was used to extract the factors in the present study. Previous simulation studies have shown that random data (i.e. noise) can have eigenvalues of 1.4 (Burg, 2008). Winsteps and PCA analysis use 1.4 as a cut-off value (Linacre, 2005), that is, a residual factor with an eigenvalue greater than 1.4 could potentially be a valid factor (i.e. enduring or repeatable structure), but if its eigenvalue is less than 1.4 then it most likely reflects noise or random error. Consequently, this study used a cut-off point of 1.45 in order to extract only the factors that would yield the most interpretable results with less probability of random error. Furthermore, an additional criterion used to extract the factors was the number of items loading at .4 and higher using varimax rotation. The criterion applied during factor rotation is slightly higher than the .3 rule of thumb for the minimum loading of an item as cited by Tabachnick and Fidell (2001).

Thus, all factors with a total eigenvalue above 1.45 and a minimum of four items loading at .4 and higher were considered for further analysis. As Costello and Osborne (2005) suggest, a factor with fewer than three items is generally weak and unstable, hence the researchers' decision to consider factors with a minimum of four items loading at .4 and higher. Consequently, only the first 11 factors extracted were considered useful for further statistical analysis in the present study. The determination of the number of factors for inclusion was guided by theory and informed by the research objective, and the need to extract only the factors that could yield the most interpretable results with less probability of random error.
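
The two retention criteria described above (eigenvalue above 1.45 and at least four items loading at .4 or higher) can be expressed as a small filter. This is a sketch only: the function name and defaults are hypothetical, it assumes the eigenvalues and the varimax-rotated loading matrix have already been obtained from a statistics package, and the numbers in the usage lines are invented for illustration.

```python
import numpy as np


def select_factors(eigenvalues, rotated_loadings,
                   eigen_cutoff=1.45, loading_cutoff=0.40, min_items=4):
    """Return indices of factors meeting both retention criteria.

    eigenvalues: one eigenvalue per extracted factor
    rotated_loadings: items x factors matrix of rotated loadings
    """
    eigenvalues = np.asarray(eigenvalues, dtype=float)
    loadings = np.asarray(rotated_loadings, dtype=float)
    # count items with a salient loading on each factor
    salient_counts = (np.abs(loadings) >= loading_cutoff).sum(axis=0)
    keep = (eigenvalues > eigen_cutoff) & (salient_counts >= min_items)
    return np.flatnonzero(keep)


# Invented example: three candidate factors, six items.
example_eigenvalues = [5.0, 1.6, 1.2]
example_loadings = [
    [0.70, 0.10, 0.50],
    [0.60, 0.20, 0.50],
    [0.50, 0.50, 0.40],
    [0.45, 0.60, 0.60],
    [0.10, 0.10, 0.10],
    [0.20, 0.30, 0.00],
]
kept = select_factors(example_eigenvalues, example_loadings)
```

In the invented example only the first factor survives: the second has too few salient items and the third falls below the eigenvalue cut-off.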

The PCA of standardised residuals has an advantage over fit statistics in detecting departures from unidimensionality when (1) the level of common variance between components in multidimensional data increases and (2) there are approximately an equal number of items contributing to each component (Smith, 2004). To judge whether a residual component adequately constitutes a separate dimension, the researchers looked at the size of the first eigenvalue (<2) of unexplained variance that is attributable to this residual contrast. According to Reckase (1979), the variance explained by the first factor should be greater than 20% to indicate unidimensionality.

The range of variance explained by the sub-scales is depicted in Table 3. The variance explained by the 11 sub-scales ranged between 44.7% and 61.1%. The unexplained variance in the first contrast for all 11 dimensions had eigenvalue units ranging from 1.4 to 1.9, which were below the chance value of 2.0 (Smith, 2002). These findings show that all sub-scales were unidimensional as there was no noticeable evidence of a secondary dimension emerging in the items.

As shown in Table 4, the final LPME scale consisted of 81 items that were clustered into 11 sub-scales. These sub-scales were labelled as: Administrative Processes (AP), Learning Programme Design and Development (LPDD), Policy Awareness (PA), Observation and Problem Solving (OPS), Quality Assurance (QA), Stakeholder Inputs (SI), Monitoring and Evaluation (ME), Environmental Scanning (ES), Strategic Leadership (SL), Learning Programme Specifications (LPS) and Occupational Competence (OC).

Rasch analysis

Subsequent to the PCA factor extraction process, a Rasch analysis was conducted on the 11 LPME sub-scales to further examine the psychometric properties of the LPME scale. A Rasch model is a probabilistic mathematical model which provides estimates of person ability and item difficulty along a common measurement continuum, expressed in log-odds units (logits). It focuses on constructing the measurement instrument with accuracy rather than fitting the data to suit a measurement model (Hamzah, Khoiry, Osman, Hamid, Jaafar & Arshad, 2009).
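
For orientation, the dichotomous form of the Rasch model can be written as below. This is a sketch under assumptions: the symbols $\theta_n$ (person measure), $\delta_i$ (item difficulty) and $X_{ni}$ (the response of person $n$ to item $i$) are notation introduced here, and the six-point items of the LPME scale would call for a polytomous extension of this form (for example a rating scale model), which the text does not specify.

$$
P(X_{ni} = 1 \mid \theta_n, \delta_i) = \frac{\exp(\theta_n - \delta_i)}{1 + \exp(\theta_n - \delta_i)}
$$

The log-odds of endorsing item $i$ are therefore $\theta_n - \delta_i$ logits, which is why persons and items can be placed on one common continuum.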

The Rasch model results for all 11 sub-scales of the LPME scale are reported in Table 5. The results include a summary of person or item separation indices and reliability coefficients, measure order and PCA.

The person/item separation indices examine the extent to which the new measure distinguishes the different levels of responses and respondents' abilities. The reliability coefficient assesses the internal consistency of the measure. Measure order assesses the goodness of item fit to the Rasch model as well as unidimensionality. It is evident in Table 5 that the person infit and outfit values for all 11 sub-scales range from .73 to 1.04. These findings show that respondents were less able to respond to the items of the sub-scales. The sub-scale Learning Programme Specifications (LPS) showed the lowest infit and outfit values (.73) relative to other sub-scales and this is attributable to the limited number of items (n = 3) constituting this sub-scale.
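
A separation index and its corresponding reliability coefficient are linked by a standard relation in Rasch measurement, sketched below. The function name is hypothetical, and the two input values are simply the lower and upper ends of the person separation range reported in this section, used here for illustration rather than to reproduce Table 5.

```python
def reliability_from_separation(separation):
    """Separation reliability implied by a separation index G: G^2 / (1 + G^2)."""
    return separation ** 2 / (1 + separation ** 2)


# Ends of the reported person separation range (.99 and 2.17).
low_end = reliability_from_separation(0.99)
high_end = reliability_from_separation(2.17)
```

Under this relation a separation of about 1 corresponds to a reliability near .50, whereas a separation above 2 corresponds to a reliability above .80.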

Further, the results show that the item infit values ranged from .99 to 1.01, whilst the outfit values ranged from .97 to 1.04. These findings show that the items for each of the 11 sub-scales were well designed and work together in defining each underlying construct. These findings support the unidimensionality of each sub-scale. The person separation indices ranged from .99 (one stratum distinction) to 2.17 (three strata distinction: low, medium and high ability). Overall, respondents' ability to answer the items fell below the mean score on all 11 sub-scales. However, in view of the high item separation indices and good reliability coefficients for all the sub-scales, the chances that the difficulty ordering of the items will be repeated if the measure were given to another group of respondents are extremely high. The Cronbach's alpha reliability coefficients for the 11 sub-scales as shown in Table 5 ranged from .79 to .94 and are acceptable. Overall, the Cronbach's alpha for the LPME scale is .87.
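
Cronbach's alpha for a sub-scale can be computed from a respondents-by-items score matrix as in the following sketch. It uses the standard variance-based formula; the function name is hypothetical and the tiny score matrix is invented for the example.

```python
import numpy as np


def cronbach_alpha(scores):
    """Cronbach's alpha for a respondents x items array of item scores."""
    scores = np.asarray(scores, dtype=float)
    n_items = scores.shape[1]
    item_variances = scores.var(axis=0, ddof=1)
    total_variance = scores.sum(axis=1).var(ddof=1)
    return n_items / (n_items - 1) * (1 - item_variances.sum() / total_variance)


# Invented example: four respondents answering three perfectly consistent items.
example_scores = [[1, 1, 1], [2, 2, 2], [3, 3, 3], [4, 4, 4]]
alpha = cronbach_alpha(example_scores)
```

Perfectly consistent items, as in the invented example, give an alpha of 1; real sub-scale data would yield lower values such as those in Table 5.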

Inter-correlations between the sub-scales of the learning programme management and evaluation scale

Correlations between the sub-scales of the LPME scale were computed by means of Pearson product-moment correlations. The results are shown in Table 6. It is clear from Table 6 that the inter-correlations amongst the variables were within the acceptable range because none is ≥ .85 (Almost, 2010) or ≥ .9 (Maiyaki, 2012). This indicates the absence of multicollinearity problems amongst the constructs under investigation. As depicted in Table 6, all variables showed a positive and statistically significant correlation with one another. The strongest correlations were found between learning programme design and development and policy awareness (r = .73; p ≤ .01, large practical effect size), learning programme design and development and stakeholder inputs (r = .72; p ≤ .01, large practical effect size), learning programme design and development and occupational competence (r = .73; p ≤ .01, large practical effect size), and stakeholder inputs and observation and problem solving (r = .70; p ≤ .01, large practical effect size).
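
The multicollinearity screen described above amounts to checking the largest off-diagonal Pearson correlation against a threshold. The sketch below assumes a respondents-by-sub-scales array of scores; the function name is hypothetical, the default threshold of .85 is the stricter of the two cited values, and the data are invented.

```python
import numpy as np


def max_intercorrelation(subscale_scores):
    """Return the largest absolute off-diagonal Pearson correlation."""
    corr = np.corrcoef(np.asarray(subscale_scores, dtype=float), rowvar=False)
    off_diagonal = ~np.eye(corr.shape[0], dtype=bool)
    return float(np.max(np.abs(corr[off_diagonal])))


def has_multicollinearity(subscale_scores, threshold=0.85):
    """Flag the data when any pair of sub-scales correlates at or above the threshold."""
    return max_intercorrelation(subscale_scores) >= threshold


# Invented example: five respondents, three sub-scale scores each.
example = np.array([
    [1, 2, 5],
    [2, 1, 3],
    [3, 4, 1],
    [4, 3, 4],
    [5, 5, 2],
])
flagged = has_multicollinearity(example)
```

In the invented data the strongest correlation is .80, so the screen does not flag a problem at the .85 threshold.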

Discussion

This study sought to operationalise the elements of the LPME theoretical framework, developed by Tshilongamulenzhe (2012), into an LPME scale and thereafter test the construct validity and reliability of this newly developed scale. This is an important task in addressing the weaknesses causing ineffectiveness in the management and evaluation of occupational learning programmes in the South African skills development context. The construct learning programme management was conceptualised in this study as a process of planning, coordinating, controlling and activating organisational operations and processes to ensure effective and efficient use of resources (human and physical) in order to achieve the objectives of an occupational learning programme (Trewatha & Newport, 1976), whilst learning programme evaluation was conceptualised as a process of collecting descriptive and judgemental information on the programme's components (e.g. context, input factors, process activities and actual outcomes) to determine whether the programme has achieved its desired outcomes (Stufflebeam, 2003).

The item generation stage was guided by the elements outlined in the theoretical framework developed by Tshilongamulenzhe (2012). The process of evaluating the items of the newly developed LPME scale was done using a pool of experts in the area of inquiry. The rationale to engage experts at this stage was to ensure that the content of the scale was valid and all items were clear and unambiguous. As Benson and Clark (1982) state, an instrument is considered to be content valid when the items adequately reflect the process and content dimensions of the specified aims of the instrument as determined by expert opinion.

Feedback from the experts was distilled and some items from the initial pool were deleted. The remaining 113 items were subjected to an exploratory factor analysis in order to establish the factorial structure of the draft scale. The factorial structure was established through a varimax rotation technique using a PCA. The goal of rotation is to simplify and clarify the data structure (Costello & Osborne, 2005). The PCA revealed an initial total of 19 factors, which was reduced to 11 factors. The 11 remaining factors were considered to be sub-scales of the newly developed LPME scale. A PCA of the residuals (observed minus expected scores) was also performed to assess sub-scale dimensionality (Linacre, 2009; Smith, 2002) and the findings of this study show that all sub-scales of the LPME scale were unidimensional. A Rasch analysis process was undertaken in order to test the unidimensionality, reliability and validity of the LPME sub-scales and their associated items. The findings of this study show that the LPME sub-scales and their associated items were valid and reliable and fit the Rasch model.

Conclusion

A conclusion drawn from the findings of this study is that the LPME scale and its sub-scales are a valid and reliable measure that can be used in practice to monitor and evaluate the effectiveness of occupational learning programmes in the South African skills development context. Empirically, this study contributes by developing and testing a valid and reliable LPME scale measure for the effective management and evaluation of occupational learning programmes. As a valid and reliable measure, the LPME scale can be applied with confidence in South African workplaces. Practically, the findings of this study will enable skills development stakeholders involved in occupational learning programmes to identify gaps in the system and develop interventions for improvement by means of a reliable and valid measure. The LPME scale will help SETAs, skills development practitioners and providers to manage and evaluate occupational learning programmes effectively.

This study sought to contribute to the field of HRD and in particular to skills development in the South African workplace context. Given the seriousness of the skills shortage challenge South Africa faces, the present study provides a solid base upon which skills development practitioners could effectively manage and evaluate occupational learning programmes, and upon which HRD scholars could further seek a lasting solution to the skills shortage challenge. The LPME scale is a valid and reliable measure that can be applied in any workplace in South Africa, and its sub-scales can be applied autonomously depending on the needs of the users.

Irrespective of the contributions made by the study, several limitations need to be pointed out. Firstly, the literature review was constrained due to the limited amount of previous research regarding occupational learning programmes in South Africa. The concept of an occupational learning programme is still new in South Africa and very limited research has been conducted. Secondly, this study focused only on two types of learning programmes, that is, learnerships and apprenticeships. So, the interpretation or application of the findings of this study should be limited to these two types of learning programmes. Thirdly, the sample was not analysed in terms of racial composition and, therefore, the results are limited with regard to the diagnosis of racial differences.

Fourthly, this study took place during a period of transition from the old dispensation of the repealed SAQA Act (Act No. 53 of 1995) into the new dispensation brought about by the NQF Act (Act No. 67 of 2008). At the time this study was in progress, the Skills Development Amendment Act (Act No. 37 of 2008) was being implemented, including the new definition of a learning programme. A new vocabulary was being phased in as part of the third NSDS (2011-2016). During the same period, the skills development unit migrated from DoL to DHET. Consequently, the wording of the items in the new measure captured the new vocabulary, which may not have been clearly understood by some of the respondents during the data collection phase. This limitation was suggested by a low person separation index and poor person or item targeting in most dimensions of the LPME measure, as was observed when the researcher was conducting Rasch analysis during the EFA phase of this research. The item separation index was consistently high and acceptable, and the other fit statistics (MNSQ infit or outfit values, point measure correlation) also confirmed that the items were well developed and measured the construct under enquiry. Therefore, the findings of this study may have to be interpreted with caution, taking cognisance of this limitation. The fifth and final limitation relates to the scope of application of the findings of this study. It must be noted that the LPME scale developed in this study is not the sole panacea to the learning programme challenges currently being experienced by organisations, and therefore should not be interpreted as such. Although a valid and reliable tool, the LPME scale should be seen as an outcome of a scientific enquiry that may require further scrutiny. There may be other factors not examined in this study such as the size of the organisation, the nature of its HRD policy framework and the business imperatives, which may also require careful consideration to augment the successful application of this newly developed tool.

Acknowledgments

Competing interest

The authors declare that they have no financial or personal relationship(s) that may have inappropriately influenced them in writing this article.

Authors' contributions

M.C.T. (University of South Africa) is the first author of the article, which is based on his Doctorate of Commerce research. M.C. (University of South Africa) supervised the study and approved all stages of the research. A.M. (University of South Africa) played a central role in conducting the statistical analysis for the research.

References

Almost, J.M. (2010). Antecedents and consequences of intragroup conflict among nurses in acute care settings. Doctoral dissertation. Toronto: University of Toronto.

Arvanitis, A. (2006). Foreign direct investment in South Africa: Why has it been so slow? Washington DC: International Monetary Fund.

Benson, J., & Clark, F. (1982). A guide for instrument development and validation. The American Journal of Occupational Therapy, 36(12), 789-800. http://dx.doi.org/10.5014/ajot.36.12.789, PMid:6927442

Bisschoff, T., & Govender, C.M. (2004). A management framework for training providers to improve skills development in the workplace. South African Journal of Education, 24(1), 70-79.

Burg, S.S. (2008). An investigation of dimensionality across Grade Levels and effects on vertical linking of Elementary Grade Mathematics Achievement Tests. New York: NCME.

Bushnell, D.S. (1990). Input, process, output: A model for evaluating training. Training and Development Journal, 44(3), 41-43.

Chee, S.S. (1992). Training through apprenticeship - The Singapore system. In The Network Conference of the Asia Pacific Economic Cooperation - Human Resource Development for Industrial Technology on 'Quality Workforce through On-the-Job Training', 24-26 November 1992. Singapore.

Clark, L.A., & Watson, D. (1995). Constructing validity: Basic issues in objective scale development. Psychological Assessment, 7(3), 309-319. http://dx.doi.org/10.1037/1040-3590.7.3.309

Costello, A.B., & Osborne, J.W. (2005). Best practices in exploratory factor analysis: Four recommendations for getting the most from your analysis. Practical Assessment, Research & Evaluation, 10(7), 1-9.

Davies, T., & Farquharson, F. (2004). The learnership model of workplace training and its effective management: Lessons learnt from a Southern African case study. Journal of Vocational Education and Training, 56(2), 181-203. http://dx.doi.org/10.1080/13636820400200253

Department of Higher Education and Training (DHET). (2010). Project scoping meeting: Curriculum development process. Pretoria.

Department of Labour (DoL). (2008a). Concept document for discussion Communities of Expert Practice. Pretoria.

DoL. (2008b). Occupational Qualifications Framework: Draft Policy for the Quality Council for Trades and Occupations. Pretoria.

Du Toit, R. (2012). The NSF as a mechanism to address skills development of the unemployed in South Africa. Pretoria: HSRC.

European Foundation for Quality Management (EFQM). (1999). Excellence model. Retrieved March 12, 2011, from http://www.efqm.org/award.html

Fitz-Enz, J. (1994). Yes...you can weigh training's value. Training, 31(7), 54-58.

Florence, T.M., & Rust, A.A. (2012). Multi-skilling at a training institute (Western Cape Provincial Training Institute) of the Provincial Government of Western Cape, South Africa: Post training evaluation. African Journal of Business Management, 6(19), 6028-6036.

Goga, S., & Van der Westhuizen, C. (2012). Scarce skills information dissemination: A study of the SETAs in South Africa. Pretoria: HSRC.

Hamzah, N., Khoiry, M.A., Osman, S.A., Hamid, R., & Arshad, I. (2009). Poor student performance in project management course: Was the exam wrongly set? Engineering Education, 154-160.

Hermann, D. (2008). The current position of affirmative action. Johannesburg: Solidarity Research Institute.

IBM. (2011). IBM SPSS statistics 20 brief guide [Computer Software]. New York: IBM Corporation.

Janse Van Rensburg, D., Visser, M., Wildschut, A., Roodt, J., & Kruss, G. (2012). A technical report on learnership and apprenticeship population databases in South Africa: Patterns and shifts in skills formation. Pretoria: HSRC.

Kirkpatrick, D.L., & Kirkpatrick, J.D. (2006). Evaluating training programs. (3rd edn.). San Francisco: Berrett-Koehler Publishers.

Kline, R.B. (1994). An easy guide to factor analysis. London: Routledge.

Kruss, G., Wildschut, A., Janse Van Rensburg, D., Visser, M., Haupt, G., & Roodt, J. (2012). Developing skills and capabilities through the learnership and apprenticeship pathway systems. Project synthesis report. Assessing the impact of learnerships and apprenticeships under NSDSII. Pretoria: HSRC.

Lamont, J. (2001, 3 November). Shortage of skills will curb growth in South Africa. Financial Times.

Lawshe, C.H. (1975). A quantitative approach to content validity. Personnel Psychology, 28, 563-575. http://dx.doi.org/10.1111/j.1744-6570.1975.tb01393.x

Leedy, P.D., & Ormrod, J.E. (2001). Practical research planning and design. New Jersey: Merrill Prentice Hall.

Linacre, J.M. (2005). A user's guide to WINSTEPS Rasch-model computer programs. Chicago: Winsteps.

Linacre, J.M. (2009). A user's guide to WINSTEPS/MINISTEPS: Rasch-model computer programs. Chicago: MESA Press.

Linacre, J.M. (2010). Winsteps® (Version 3.70.0) [Computer Software]. Beaverton, Oregon: Winsteps.com.

Maiyaki, A.A. (2012). Influence of service quality, corporate image and perceived value on customer behavioural response: CFA and measurement model. International Journal of Academic Research in Business and Social Sciences, 2(1), 403-414.

Marock, C., Harrison-Train, C., Soobrayan, B., & Gunthorpe, J. (2008). SETA review. Cape Town: Development Policy Research Unit, University of Cape Town.

Mayenga, C. (2009). Mapping item writing tasks on the item writing ability scale. In CSSE - XXXVIIth Annual Conference, 25 May. Ottawa, Canada: Carleton University.

Mummenthey, C., Wildschut, A., & Kruss, G. (2012). Assessing the impact of learnerships and apprenticeships under NSDSII: Three case studies: MERSETA, FASSET & HWSETA. Pretoria: HSRC.

National Institute of Standards and Technology (NIST). (2010). Malcolm Baldrige National Quality Award criteria. Washington, DC: United States Department of Commerce.

National Quality Institute (NQI). (2001). NQI-PEP (Progressive excellence program) healthy workplace criteria guide. Retrieved April 16, 2011, from http://www.nqi.ca

NQI. (2007). Canadian framework for business excellence: Overview document. Canada: Author.

Palit, A. (2009). Skills development in India: Challenges and strategies, ISAS Working Paper no. 89. Singapore: National University of Singapore.

Phillips, J.J. (1997). Handbook of training evaluation and measurement methods. (3rd edn.). Texas: Houston Gulf.

Quality Council for Trades and Occupations (QCTO). (2011). QCTO policy on delegation of qualification design and assessment to Development Quality Partners (DQP) and Assessment Quality Partners (AQPs). Pretoria: Author.

Reckase, M.D. (1979). Unifactor Latent Trait Models applied to Multi-Factor Tests: Results and implications. Journal of Educational Statistics, 4, 207-230. http://dx.doi.org/10.2307/1164671, http://dx.doi.org/10.3102/10769986004003207

Republic of South Africa. (2008). Skills Development Amendment Act, No. 37 of 2008. Pretoria: Government Printers.

South African Excellence Foundation (SAEF). (2005). The South African Excellence Awards Programme. Retrieved April 16, 2011, from http://www.saef.co.za/asp/about

South African Institute of Race Relations (SAIRR). (2008). Why not 8%? Fast Facts, No. 6. Johannesburg: Author.

Schriesheim, C.A., Powers, K.J., Scandura, T.A., Gardiner, C.C., & Lankau, M.J. (1993). Improving construct measurement in management research: Comments and a quantitative approach for assessing the theoretical content adequacy of paper-and-pencil survey-type instruments. Journal of Management, 19, 385-417. http://dx.doi.org/10.1016/0149-2063(93)90058-U, http://dx.doi.org/10.1177/014920639301900208

Smith, E.V. Jr. (2002). Detecting and evaluating the impact of multidimensionality using item fit statistics and principal component analysis of residuals. Journal of Applied Measurement, 3, 205-231.

Smith, E.V. Jr. (2004). Evidence for the reliability of measures and validity of measure interpretation: A Rasch measurement perspective. In E.V. Smith Jr & R.M. Smith (Eds.), Introduction to Rasch measurement (pp. 93-122). Maple Grove, Minnesota: JAM Press.

Scottish Qualifications Authority (SQA). (2009). Guide to assessment. Glasgow.

Stufflebeam, D.L. (2003). The CIPP model for evaluation. In T. Kellaghan, D.L. Stufflebeam & L.A. Wingate (Eds.), International handbook of educational evaluation (pp. 31-62). Dordrecht: Kluwer Academic Publishers.

Stufflebeam, D.L., & Shinkfield, A.J. (2007). Evaluation theory, models and applications. San Francisco: Jossey-Bass.

Tabachnick, B.G., & Fidell, L.S. (2001). Using multivariate statistics. (4th edn.). Needham Heights, MA: Allyn & Bacon.

Trewatha, R.G., & Newport, M.G. (1976). Management: functions and behaviour. Dallas: Business Publications.

Tshilongamulenzhe, M.C. (2012). An integrated learning programme management and evaluation model for the South African skills development context. Doctoral dissertation. Pretoria: University of South Africa.

Vorwerk, C. (2010). Quality Council for Trades and Occupations: Draft policy. Pretoria: Department of Higher Education and Training.

Wildschut, A., Kruss, G., Janse van Rensburg, D., Haupt, G., & Visser, M. (2012). Learnerships and apprenticeships survey 2010 technical report: Identifying transitions and trajectories through the learnership and apprenticeship systems. Pretoria: HSRC.

Wilson, F.R., Pan, W., & Schumsky, D.A. (2012). Recalculation of the critical values for Lawshe's Content Validity Ratio. Measurement and Evaluation in Counseling and Development, XX(X), 1-14.

Correspondence:

Maelekanyo Tshilongamulenzhe

PO Box 392

Pretoria 0003

South Africa

Email: tshilmc@unisa.ac.za

Received: 22 Oct. 2012

Accepted: 26 July 2013

Published: 08 Oct. 2013