South African Journal of Education

On-line version ISSN 2076-3433

Print version ISSN 0256-0100

S. Afr. j. educ. vol.35 n.2 Pretoria May. 2015

http://dx.doi.org/10.15700/saje.v35n2a1045

From challenging assumptions to measuring effect: Researching the Nokia Mobile Mathematics Service in South Africa

Nicky RobertsI; Garth Spencer-SmithII; Riitta VänskäIII; Sanna EskelinenIII

IDepartment of Mathematics Education, School of Education, University of the Witwatersrand, South Africa nickyroberts@icon.co.za

IIKelello Consulting associate and Ukufunda Educational Consulting, Cape Town, South Africa

IIIMicrosoft Oy, Helsinki, Finland

ABSTRACT

This paper reports on the analysis of the voluntary uptake and use of the Nokia Mobile Mathematics service by 3,957 Grade 10 learners. It measures the effect of the service on the school Mathematics attainment of 1,950 of these learners over one academic year. The study reveals that 21% of Grade 10 Mathematics learners voluntarily and regularly made use of this mobile learning resource outside of school time, with little involvement from their teachers. We found that across the group of 1,950 learners, there was an average decline in Mathematics attainment from Grade 9 to Grade 10 of 15 percentage points. We further found that there was a significant difference in the percentage point shifts of the group of non-users (zero questions), where there was a mean decline of 19 percentage points compared to the group of regular/extensive users (151+ questions), where the mean decline was 11.5 percentage points. This difference in means was significant (t(1344) = 8.0, p = 2.2 × 10^-15), with a small to medium effect size (d = 0.45). Research limitations and directions for future research are discussed in light of these findings.

Keywords: mathematics education; measuring effort; measuring impact; mobile learning (m-learning); secondary school; South Africa; technology-enhanced learning

Introduction

The numerous challenges facing the South African education system in general, and mathematics education in particular, are relatively well documented. This is evident in academic research (Carnoy, Chisholm & Chilisa, 2012), in large scale systemic evaluations of the South African educational landscape (Taylor, Draper, Muller & Sithole, 2013), as well as in government planning documents (National Planning Commission, The Presidency, Republic of South Africa, 2011). In response to these acknowledged challenges, there have been numerous initiatives (at government, civil society and private sector levels) to intervene, innovate and attempt to redirect the current poor performance trajectory, with particular interest in supporting mathematics and language interventions using various technologies.i

The technological interventions to improve mathematics and language outcomes in South African schools are not unique to this country. There is much investment from various role players in technological innovations that might improve the quality of education in developing countries (Trucano, 2005). An indication of this global interest is the United Nations Millennium Development Goal Eight which has as its sub-goal: "in cooperation with the private sector, make available benefits of new technologies, especially information and communications" (United Nations, n.d.).

Much hope is placed in the innovation possible in improving education quality through wide scale access and use of mobile devices. Mobile phone penetrationii in South Africa has increased significantly, and mobile telephones are the most widespread and accessible Information and Communication Technology (ICT) devices in South Africa today, particularly amongst the youth (Ford & Botha, 2010). Recent statistics show that mobile penetration in South Africa is at 123%iii (Deloitte & GSMA, 2012), and that "mobile technology has permeated into all levels of society - into rural areas, classrooms and boardrooms" (Ford & Botha, 2010:4). This is supported by research that has shown that the uptake of mobile phones amongst youth in urban low-income areas in the Western Cape is almost ubiquitous (Kreutzer, 2009).

To explore the efficacy of using mobile learning to support Mathematics performance at secondary school level, a development and research intervention was implemented in 30 public schools, targeting 4,000 Grade 10 Mathematics learners in South Africa. Research was focused on measuring the effect of providing a free Nokia mobile mathematics service (hereafter referred to as 'the service') for the Grade 10 Mathematics learners in these schools. However, measuring the effect that any intervention (technological or otherwise) has, is challenging in the complex interacting education system of classrooms, schools and schooling systems. When considering efficacy at the classroom level, much attention is placed on improved learning outcomes: did the learners learn what was intended? Evidence of learning is typically gathered through various kinds of learning assessments. However, the results of assessments reveal what can be attained in an assessment, and are not necessarily a measure of what was actually learnt. Further, what is attained by any particular individual depends on what they already knew prior to an intervention.iv

Given the complexities in measuring effect, a broad evaluation study was designed. This attended to learner and teacher attitudes and beliefs about mathematics, their views on the service, recommendations for pedagogical and technological improvements of the service, as well as uptake, use, and shifts in Mathematics attainment. In this paper, we focus only on the findings relating to shifts in learner attainment using school assessment results. However, in interpreting the findings, we draw on our broader knowledge of the evaluation study to conjecture a few possible reasons for the observed shifts.

Research Focus

This paper reports on the analysis of the voluntary uptake and use of the service in 30 township and rural schools, and its effect on Mathematics attainment over one academic year. Six of the participating schools were in the Eastern Cape, 13 in the North West, and 11 in the Western Cape.v The schools were selected by the provincial departments of education using the guidelines developed by the project team (Roberts & Vänskä, 2011).

The study set out to answer the following research questions:

In the context of a free m-learning offering, where the voluntary uptake and use of the service in 30 diverse public schools is encouraged:

- what was the magnitude of the uptake and use of the service amongst the learners?

- were there any shifts in school Mathematics attainment (from Grade Nine to Grade 10) of learners who used the service regularly, compared to those who did not use it regularly?

Situating the Study

Mobile learning (or m-learning) can be defined as "learning across multiple contexts, through social and content interactions, using personal electronic devicesvi" (Crompton, 2013:4). M-learning is considered by some technology advocates to be part of the range of innovations, which may improve mathematics education. Some of these advocates seem to view m-learning as a panacea for educational improvement. For example, Wagner (2005:44) is particularly enthusiastic about the promise of m-learning:

Whether we like it or not, whether we are ready for it or not, mobile learning represents the next step in a long tradition of technology-mediated learning. It will feature new strategies, practices, tools, applications, and resources to realize [sic] the promise of ubiquitous, pervasive, personal, and connected learning. It responds to the on-demand learning interests of connected citizens in an information-centric world.

These claims tend to be made by technology enthusiasts, and to be driven by corporate interests. This more 'technology-focused approach' may be questioned in favour of a more 'learning-focused approach' (see Liu, Han & Li, 2010), where the former stresses the technology, while the latter focuses on the learning needs for particular mathematics topics and/or particular individuals.

New technology should not simply be introduced into education for the sake of keeping abreast with technology developments or to create young markets for technology vendors. However, particular technologies may well be "a catalyst for change in teaching and learning styles, and access to information" (Chigona, Chigona & Davids, 2014:1). In recent years, a number of studies on m-learning have lent weight to the view that mobile phones open up new ways of extending the scope, scale and quality of education (Mishra, 2009). This view is based on two main factors: the drop in the price of mobile handsets and usage costs, which makes mobile phones increasingly common, even in poorer communities; and the highly flexible nature of mobile phones (Traxler, 2009). This remains a contested research terrain, however, and the particular affordances of mobile devices for learning (for particular mathematics topics and age groups, and for specific pedagogic shifts) are only just starting to be considered.vii

A growing literature investigating the instructional benefits of mobile devices (Daher, 2010; Johnson, Adams & Cummins, 2012; Thomas & McGee, 2012; Thomas & Orthober, 2011) supports the claims of the potentials of m-learning devices to improve learning outcomes. Whether or not m-learning appears to hold promise in improving learning outcomes (particularly for Mathematics) is only recently being investigated.

While there appears to be some progress being made in terms of researching the efficacy of m-learning in developed contexts, Koszalka and Ntloedibe-Kuswani (2010) assert that research into the efficacy of m-learning in the developing world is in its infancy.viii They explain that evidence is lacking as to whether it is the m-learning that facilitates learning, or some other factor (e.g. other changes in pedagogical options related to the technology). These concerns are supported by a report by United Nations Educational, Scientific and Cultural Organization (UNESCO) (2012) noting that much of the documentation from the developing world is located in grey literature (such as project reports, presentations, websites and blogs), which are descriptive and promotional, rather than analytic and evaluative.

We remain open to the possibility that the introduction of an m-learning tool may effect some changes in the complex education system. In particular, we note the growing evidence that introduction of technology seems to facilitate shifts in motivation amongst learners and teachers (Chigona et al., 2014; Trucano, 2005). We are, however, more cautious about contesting evidence relating to shifts in learning outcomes as a result of m-learning. Strigel and Pouezevara (2012), while exploring evidence for the effect of m-learning on primary mathematics interventions, critique a meta-analysis review (Cheung & Slavin 2011, cited in Strigel & Pouezevara, 2012:16) of 74 studies in computer-aided-instruction for mathematics interventions:

A careful review of the various programs [sic] and studies included in this review and their context, however, yields that although overall effects seem to be positive, no single technology program [sic] produced consistent results. These varying results may be explained by attending to context: how m-learning is harnessed (to what end, and for which pedagogic purposes); the particular learning environment (of the school, classroom, community and the particular mathematics topic in question).

We situate this paper as contributing to the literature on the efficacy of m-learning in relation to shifts in Mathematics attainment in a particular South African context. The paper focuses attention on an m-learning intervention, which supports independent learner revision and consolidation of mathematics outside of school time. We aim to provide enough contextual information about the service for the shifts in attainment to be tentatively interpreted. We think this paper may be relevant to m-learning practitioners, theorists and policy makers engaged in mathematics education; as well as mathematics education researchers in South Africa.

Background

From 2008 to 2014 Nokia Corporation (and thereafter Microsoft), in partnership with the national Department of Science and Technology, developed and funded research into a mobile learning mathematics service for Grade 10 learners in South Africa. The research was conducted with the involvement of the national Department of Education.ix School selection and ethical approval for participation in the intervention were conducted following the agreed departmental guidelines.

The initial research focused on 10 pilot schools, where several assumptions about the use of mobile learning amongst teachers and learners were challenged (Roberts & Vänskä, 2011). These assumptions were:

- Near ubiquitous access to mobile devices (mobiles) among South African youth presents opportunities for m-learning in schools, without significant investment in technology;

- For effective technology use in schools, teachers must be confident and effective users of the technology;x

- Technologies are used most successfully in well-resourced schools;

- Independent use of mathematics revision and extension materials is usually only done by high-achieving learners.

The detailed discussion debunking these assumptions is the substance of the article by Roberts and Vänskä (2011). The factors hindering and supporting uptake of the service in the 10 pilot schools were also discussed. In that study, involving 10 pilot schools, comparing the usage patterns of the control and experimental groups revealed that regular users of the service were mostly using their own mobile telephone (not a school or borrowed device). They were mostly using the service independently, rather than only as a consequence of a directive from their teachers. Four major factors were reported to have inhibited use (listed in order of those most frequently mentioned):

- lack of access to a suitable mobile;

- technical problems, including lack of network coverage, lack of compatibility of specific handsets with the service, and technical error messages;

- the lack of fun or that using the service was boring; and

- difficulty using the service, and in particular the perception that the mathematics in the service was too difficult.

The first two factors were far more frequently mentioned than the last two.

One of the key findings of the pilot research project was that "82% of login activity took place outside of school time and that there was continued use of the service on weekends and holidays" (Roberts & Vänskä, 2011:252). As a result, the service was enhanced to focus more strongly on supporting independent after-school work on mathematics by learners. Roberts and Vänskä (2011:258) called for "further analysis on a bigger selection of learners to reflect on their shifts in attainment in relation to their use of the service after a full academic year". Such further analysis focusing on shifts in attainment as measured in school assessments for the larger population of 30 schools was then undertaken, and is reported on here.

We first briefly describe how and where learners accessed and afforded the service, and then illustrate some of its mathematics content. In particular, we exemplify what is meant by "a mathematics question", as this is our unit for analysing learner usage.

Learners mostly used their own personal mobile devices to access the service outside of school time. There was no licence or subscription fee and the data costs (of traffic to and from the service) were paid for by mobile operators. The content was designed to be light on data (making use of simple text and a few small graphic elements). There was minimal teacher training,xi as use of the service was not integrated into the curriculum management or homework administration processes of the schools.

The content of the service aimed to support active learning and 'learning by doing' mathematics. The content for the service was locally developed, and organised using the topics specified in the South African Curriculum for Grade 10 Mathematics. For each topic, learners could work through short theory and worked example sections, and/or answer questions drawn from a database of approximately 10,000 questions. The questions were presented by degree of difficulty (easy, medium, or difficult). Questions were drawn from a database of possible questions for topic and degree of difficulty in a random sequence (so each entry into the service provided a different experience of the topic). Learners unable to answer a question could request hints, which provided suggestions towards the solution. Learners were given complete freedom as to which topics they attempted, at what times. The questions were all closed questions (with one possible correct response), making use of multiple choice or short answer formats.

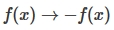

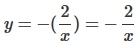

The following is an example of an easy question from the Grade 10 topic on Functions:

True/False:

The reflection of [expression not recovered] about the x-axis is [expression not recovered]. Write t for true or f for false.

Learners could request hints, which provided suggestions towards the solution. The hint for this particular question reads:

Reflection in the x-axis means [expression not recovered]

Learners were given immediate feedback (correct or incorrect) to their responses. This included a complete worked solution:

The reflection of [expression not recovered] about the x-axis is [expression not recovered] but the statement says [expression not recovered] so this statement is FALSE.

The question styles were varied to include multiple-choice questions; spot-the-error; type-in-the-answer; and true/false questions.xii

The service was provided to learners using a mobile delivery platform, which was accessible on all mobile devices (and not only Nokia handsets).

Conceptual Framework

Focusing on m-learning and mathematics, Strigel and Pouezevara (2012) provide a useful conceptual framework on variations in mobile learning configurations, which attends to several dimensions (spectrums) of the way in which mobile technologies might be integrated into learning environments: a learning spectrum, which ranges from formal (in class in school) to informal but school-related (out-of-school but formal learning) to informal (informal learning for pleasure or entertainment); a kinetic spectrum, which ranges from the learners being stationary (not moving with either a portable or fixed device) to being mobile (moving with the portable device); and a collaborative spectrum, from learners working individually (alone) to working collaboratively (in groups). Strigel and Pouezevara (2012:7) depict these spectrums as three dimensions on a diagram labelled "Variations on Mobile Learning Configurations". We used this diagram to situate the Nokia Mobile Mathematics Service in relation to each spectrum (see Figure 1).

This mobile mathematics service was informal (used out-of-school), but supported formal learning (school Mathematics) in terms of learning spectrum (Point A in Figure 1). The service supported the formal school curriculum; however, use of the service took place informally, mostly outside of school time. The service was towards the mobile end of the kinetic spectrum, as the service could be used while the learners were mobile (moving) either at school or outside of school (Point B in Figure 1). The broader evaluation study established that many learners reported spending time using the service while travelling to and from school in taxis. Learners were therefore moving while using the service, although this movement was not a requirement for engaging with the service. Finally, in terms of the collaborative spectrum, the service was nearer to the individual end of the spectrum (Point C in Figure 1). Individual learners typically worked independently on the service. However, the service included a limited collaborative aspect, in that the learners' points (attainment and activity levels) were visible to each other in a community of mathematics learners, and learners could send messages to other learners from within the service.

Methodology

The study adopted a quasi-experimental design, comparing control and experimental groups that were not randomly assigned (Rossi, Lipsey & Freeman, 2003). Data was collected on uptake, use and Mathematics attainment from 30 secondary schools in three South African provinces, which were selected by the national Department of Basic Education.xiii

We collected data on how much the 3,957 targeted learners used the service (from the Moodle learner management system), and what they attained in Mathematics at the end of Grade Nine and the end of Grade 10 (from their teachers). In South Africa at Grade 10 level, learners have a choice between Mathematics and Mathematics Literacy. The service was designed to support the former, specifically. As such we focused only on those learners who selected Mathematics in Grade 10, and did not consider learners who took Mathematics Literacy in Grade 10. This data was then cleaned and the resulting data set comprised 49% of the population (n = 1,950 learners).xiv

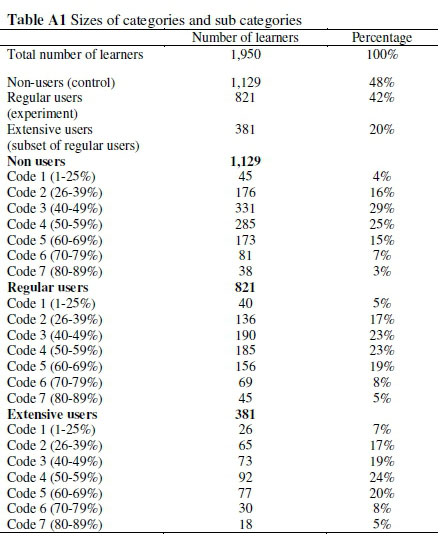

For the activity levels, we defined categories of active users by the number of questions they answered (as presented in Table 1).

The learners were split into three main activity level categories:

- Non-users and occasional users (0 - 150 questions);

- Regular users (151+ questions) from which a further subcategory of Extensive users (451+ questions) was identified.
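The thresholds above can be sketched as a small classification function. This is only an illustration of the stated cut-offs (0-150, 151+, 451+); the function name and the category labels are hypothetical and do not come from the study's own analysis scripts.

```python
def activity_category(questions_answered):
    """Map a learner's question count to the activity categories listed above.

    Hypothetical helper: the thresholds follow the text, the labels are
    illustrative. "extensive" is a subcategory of "regular" (451+ questions).
    """
    if questions_answered < 0:
        raise ValueError("question count cannot be negative")
    if questions_answered >= 451:
        return "extensive"
    if questions_answered >= 151:
        return "regular"
    return "non-user/occasional"


def is_regular_user(questions_answered):
    """Regular users include the extensive subcategory (151+ questions)."""
    return activity_category(questions_answered) in ("regular", "extensive")
```

Treating the first category as the control group then amounts to selecting the learners for whom `is_regular_user` returns `False`.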

The first category was used as a control group for the second category. The control and experimental groups resembled each other on several key aspects, in that they attended the same mathematics classes at the same schools with the same teachers. Roberts and Vänskä (2011) and the broader evaluation study for the 30 schools established that the primary reason for non-use and occasional use was insufficient (or no) access to a suitable mobile device. Both the control and the experimental groups included a diverse range of prior attainment categories (see Appendix A), as well as diverse attitudes towards and beliefs about mathematics (these were reported in the broader evaluation study, and are not in focus in this paper). Under these circumstances, the "program [sic] effects can be assessed with a reasonable degree of confidence" (Rossi et al., 2003:274). However, due to the non-randomisation, the effect estimates may be biased. The group sizes for each category of user are presented in Figure 2.

Nine learners completed more than 10,000 questions each. We referred to these learners as exceptional users (10,000+), and obtained additional data of their school attainment for the first term of Grade 10.

To analyse the data, firstly we correlated the number of questions a learner answered to their shifts in attainment, and secondly we considered shifts in attainment for different categories of users.

We calculated a Pearson Product Moment correlation coefficient (r) to establish whether there was a linear relationship between the number of questions answered by each learner and their shift in Mathematics attainment from Grade Nine to Grade 10. We then conducted independent t-tests on each of the categories of usage in relation to their mean shifts in attainment. T-tests indicate whether the shifts in the means of the various groupings are statistically significant (comparing unrelated groups from the same population). We calculated the Cohen's effect sizes for these shifts to compare the effect sizes for each category of usage.
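The three statistics named above can be sketched in plain Python as follows. This is a minimal illustration under stated assumptions, not the authors' analysis code: it assumes a pooled-variance (Student's) independent t-test, which is consistent with the degrees of freedom reported later (n - 2), and it assumes Cohen's d is computed with the pooled standard deviation. All function names are hypothetical, and no p-value is computed because that would need a t-distribution function from a statistics library.

```python
import math


def mean(values):
    return sum(values) / len(values)


def sample_variance(values):
    # Unbiased sample variance (divides by n - 1).
    m = mean(values)
    return sum((v - m) ** 2 for v in values) / (len(values) - 1)


def pearson_r(xs, ys):
    # Pearson product-moment correlation between paired observations,
    # e.g. questions answered (xs) and attainment shift (ys) per learner.
    mx, my = mean(xs), mean(ys)
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sx = math.sqrt(sum((x - mx) ** 2 for x in xs))
    sy = math.sqrt(sum((y - my) ** 2 for y in ys))
    return cov / (sx * sy)


def pooled_sd(a, b):
    # Pooled standard deviation of two independent groups.
    na, nb = len(a), len(b)
    pooled_var = ((na - 1) * sample_variance(a)
                  + (nb - 1) * sample_variance(b)) / (na + nb - 2)
    return math.sqrt(pooled_var)


def independent_t(a, b):
    # Student's t statistic and degrees of freedom (assumes equal variances).
    standard_error = pooled_sd(a, b) * math.sqrt(1 / len(a) + 1 / len(b))
    return (mean(a) - mean(b)) / standard_error, len(a) + len(b) - 2


def cohens_d(a, b):
    # Standardised difference in group means.
    return (mean(a) - mean(b)) / pooled_sd(a, b)
```

Here each group would hold the per-learner shifts (Grade 10 attainment minus Grade Nine attainment) for one usage category.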

Research Design Limitations

This study is limited by the absence of standardised data that could be used to compare mathematics learner performance at the Grade Nine and Grade 10 level.xv The study made use of school-assessment data, which differed from one school to another. The effect of variation in school assessments was partially mitigated by comparing learners who used the service, to those who did not use it; in other words, two groups from the same 30 schools, with the same 30 school-assessments.

In addition, this study did not set out to explain the causes for shifts in learning attainment. It only presents our (as yet untested) conjectures as to which factors may have contributed to these findings. Further research is required to investigate the validity of these conjectures. Such research would need to take into account the theoretical framework adopted for the design of the service, the quality and related pedagogical approach of the service, as well as how learners engaged with it to shift their mathematics learning in particular topics.

The study does not systematically examine all the factors (other than attainment and activity level), which may account for differences in the learner populations. Such factors include, for example, the conscientiousness of the learner and the presence, absence and quality of homework administration within particular classes.

This study does not make recommendations of how the service might be improved upon, either pedagogically or technically.xvi It is also limited by the potential corporate biases created by having the research funded by Nokia.xvii

Findings

Findings for Learner Uptake and Use

Thirty schools participated in the project in 2010. A total of 3,957 learners were registered on the service, of whom 2,615 visited the service at least once. Two thousand four hundred and seventy-one learners were considered active users, as they completed at least 10 questions on the service. This represented 66% of all the registered learners, and 94% of the learners who visited the service at least once.

Over one year, approximately 1,020,000 questions were answered. For all learners (including non-users) there was a mean of 390 questions answered.xviii Excluding the non-users, there was a mean of 533 questions per active learner.xix Use of the service continued beyond the first six months of the intervention, and use was more extensive in the second half of the year. Increased use may indicate that learners used the service more as examinations approached, or learners used the service more as they became more familiar with it over time. Whatever the reasons, we consider the increase in use over time to be a positive indication of the value of the service, as we had expected that there may have been an initial 'honeymoon period' (of high initial activity) when the service first commenced, followed by a sharp decline in use over time. A final indication of the uptake and use of the service was obtained by considering its continued use, after learners had completed Grade 10. By March 2011, 502 learners (approximately 20% of the 2,471 active learners in 2010) from a variety of schools continued to use the Grade 10 service in 2011, despite now being in Grade 11.xx

Findings for Effect on Learners' Attainment after One Year (n = 1,950)

There were 1,950 learners for whom there was data available on both their final Grade Nine and their final Grade 10 Mathematics attainment. We calculated shifts in attainment by finding the difference between Grade 10 and Grade Nine attainment for each learner. We undertook two approaches to analysing this data. Firstly, we correlated the number of questions a learner answered to their shifts in attainment. Secondly, we considered shifts in attainment for different categories of users.

A Pearson Product Moment correlation coefficient (r) was calculated to establish whether there was a linear relationship between the number of questions answered by each learner using the Mobile Mathematics service and:

- their Grade 10 December (2010) attainment (which indicated a very slight positive correlation (r = 0.13));

- the difference between their Grade 10 December (2010), and their Grade Nine (2009) attainment results (which indicated a very slight positive correlation: (r = 0.10)).

This revealed that the relationship between the use of the service and shift in individual attainment was only very slight (and not clearly linear).

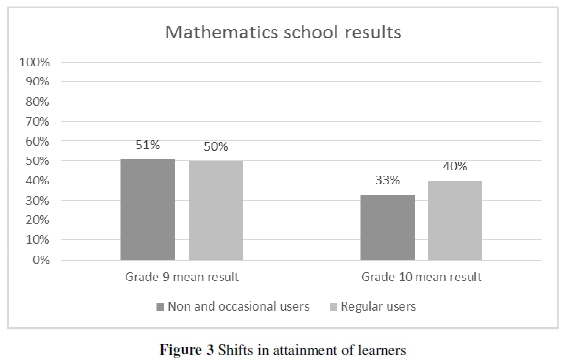

We then considered shifts by different categories of users, and conducted independent t-tests on the data. Across the group of 1,950 learners there was an average decline in Mathematics attainment from Grade Nine to Grade 10 of 15 percentage points. We found that:

- The Mathematics attainment of learners who never, or only occasionally, used the service, dropped by an average of 17 percentage points;

- The Mathematics attainment of learners who used the service regularly dropped by an average of 11.5 percentage points;

- For extensive users of the service, their decline in attainment was even less, with an average drop of nine percentage points.

The mean shifts for occasional and regular users are visually depicted in Figure 3.

The independent t-tests carried out on the data provided a further indication of the likely effect on attainment of the service. There was a significant difference in the percentage point shifts between the group of occasional users (0-150 questions) (M = -17.1, SD = 16.1) compared to the group of regular/extensive users (151+ questions) (M = -11.5, SD = 16.7); t(1948) = 7.6, p = 5.8 × 10^-14. The magnitude of Cohen's effect size (d = 0.34) indicates that the effect of the service here was small to medium. There was a significant difference in the percentage point shifts between the group of non-users (zero questions) (M = -19.0, SD = 17.1) compared to the group of regular/extensive users (151+ questions) (M = -11.5, SD = 16.7); t(1344) = 8.0, p = 2.2 × 10^-15. The magnitude of Cohen's effect size (d = 0.45) indicates that the effect of the service here was small to medium. Finally, there was a significant difference in the percentage point shifts between the group of non-users (zero questions) (M = -19.0, SD = 17.1) compared to the group of super users (600+ questions) (M = -9.1, SD = 17.9); t(804) = 7.6, p = 8.3 × 10^-14. The magnitude of Cohen's effect size (d = 0.56) indicates that the effect of the service here was medium.
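As a rough plausibility check, the reported effect sizes can be approximated from the means and standard deviations quoted above. The sketch below is an approximation only: it assumes the two standard deviations are pooled with equal weight, because the group sizes appear only in Figure 2 and not in the running text, so it will not exactly reproduce an n-weighted calculation. The function and variable names are hypothetical.

```python
import math


def cohens_d_from_summary(mean_a, sd_a, mean_b, sd_b):
    """Cohen's d from group means and SDs, pooling the SDs with equal weight.

    Assumption: equal weighting stands in for the n-weighted pooled SD,
    because the group sizes are not stated in the running text.
    """
    pooled = math.sqrt((sd_a ** 2 + sd_b ** 2) / 2)
    return (mean_a - mean_b) / pooled


# Means and SDs of the percentage point shifts, as quoted in the text.
regular_vs_occasional = cohens_d_from_summary(-11.5, 16.7, -17.1, 16.1)
regular_vs_non_users = cohens_d_from_summary(-11.5, 16.7, -19.0, 17.1)
super_vs_non_users = cohens_d_from_summary(-9.1, 17.9, -19.0, 17.1)
```

Under this equal-weight assumption, the three values fall within roughly 0.01 of the reported d = 0.34, 0.45 and 0.56 respectively.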

In summary, the service appears to have dampened the trend of declining attainment in Mathematics from Grade Nine to Grade 10 for extensive users of the service (compared to those who did not use it). However, the relationship between shifts in attainment and usage was not linear, as the Pearson Product Moment correlation was only slightly positive.

The calculation of shifts in attainment took into account the learners' prior attainment in Mathematics. However, it was felt that the service may have impacted on learners who had previously (in Grade Nine) failed Mathematics differently to the way in which it impacted on learners who achieved distinctions in Mathematics. As such, the learners' prior attainment levels were considered important categories. Prior attainment levels were defined in relation to a scale of 1-7 'codes'.xxi The learners from each activity category - Occasional, Regular, and Extensive users - were further disaggregated according to their prior attainment code for their Grade Nine final Mathematics attainment (see Appendix A).xxii

The difference in mean results in Grade 10 between learners using the service regularly and non-users, reveals that prior attainment categories code 2 and 7 derived the greatest effect from the service. Table 2 shows that the difference in mean attainment in Grade 10 between regular and non-users of the service was 7 percentage points.

Table 2 reveals that high-attaining learners (code 7: 80-100%) who did not use the service regularly saw their attainment drop by 31 percentage points on average from Grade Nine to Grade 10, while high-attaining learners who used the service regularly saw their attainment drop by only 22 percentage points from Grade Nine to Grade 10. Likewise, those learners who were failing Grade Nine Mathematics (code 2: 25-39%) and used the service regularly maintained their attainment (with a slight improvement evident for the extensive users), while those learners who were failing Grade Nine Mathematics and did not use the service regularly saw their attainment decline a further 9.5 percentage points on average in Grade 10. It should be borne in mind that this finding relates to learners who failed Grade Nine Mathematics, but who nevertheless continued with Mathematics (and not Mathematics Literacy) in Grade 10.

Findings for Effect on Exceptional Users (n = 9)

Learners who completed more than 10,000 questions in practice exercises and tests, and for whom Mathematics results were available, were identified as exceptional users. There were nine such learners. They were of interest to us, as they seemed to be outliers, completing more than 20 times the mean number of questions.

For each exceptional user, we considered the shift in results from Grade Nine to the first term of Grade 10, and then to the final Grade 10 result. We did not isolate the specific personal context for each learner. Each learner is unique and was experiencing the service in a particular context, which included their school environment, their Mathematics teacher, their peers, their prior attainment in Mathematics, their home, economic and social context, and so on. Table 3 presents the number of questions answered, the Grade Nine and Grade 10 results and the shifts in results for these exceptional users.

Table 3 reveals that all but one of the exceptional users showed shifts in their attainment that were better than the group's average shift (of -15 percentage points). Only Learner I showed a decline greater than this average. However, her Grade Nine result was very high (90%) and may be considered an outlier in the Grade Nine data. Her Grade 10 result was a marked decline from this very high attainment, although she still obtained a pass of 51% in Grade 10. The reasons for this are not known.
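The shift measure used throughout is a simple difference in percentage points between the Grade 10 and Grade Nine results. The sketch below applies it to the one case for which both results are quoted in the text (Learner I: 90% in Grade Nine, 51% in Grade 10) and compares it with the group's average shift of -15 percentage points. The function and variable names are illustrative, not taken from the original analysis.

```python
def percentage_point_shift(grade9_pct, grade10_pct):
    """Shift in attainment in percentage points (negative means a decline)."""
    return grade10_pct - grade9_pct


# Average shift reported for the group of 1,950 learners
GROUP_MEAN_SHIFT = -15

# Learner I: 90% in Grade Nine and 51% in Grade 10 (figures quoted in the text)
learner_i_shift = percentage_point_shift(90, 51)
declined_more_than_average = learner_i_shift < GROUP_MEAN_SHIFT

print(learner_i_shift, declined_more_than_average)
```

Learner I's shift works out to -39 percentage points, which is why she is the one exceptional user whose decline exceeded the group average.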

Discussion

In this section, we answer the research questions before relating these answers to the broader literature on m-learning. We caution, however, that it is problematic to consider this study generalisable to other Mathematics m-learning services, or to South Africa more broadly. We simply seek to situate the findings from this particular study (a case study of m-learning research in 30 schools in South Africa) as a contribution to this broader m-learning literature. Its findings and related discussion may or may not find resonance with m-learning researchers in other mathematics contexts.

Findings on Uptake and Use of the Service

This section answers the following research question: What was the magnitude of the uptake and use of the service amongst the learners?

The uptake and use of the service in 2010 was as follows:

- Two thousand four hundred and seventy-one learners (63% of the 3,957 targeted learners) completed at least 10 questions on the service.

- Eight hundred and twenty-one learners (21% of the 3,957 targeted learners) used the service regularly. They completed an average of more than 150 questions each.

- A smaller group of 381 learners (10% of the 3,957 targeted learners) emerged as extensive users, each answering more than 450 maths questions.

- Nine learners, from four different schools, completed more than 10,000 maths questions each. The highest number of exercises and tests completed by a learner was 30,260 questions.xxiii

- Twenty percent of the 2,471 learners that used the Grade 10 service to complete at least 10 questions in 2010 continued using the Grade 10 service in 2011, despite being in Grade 11.

The extent of use varied greatly from learner to learner.

Findings on Shifts in Mathematics Attainment

This section answers the following research question: Were there any shifts in school Mathematics attainment (from Grade Nine to Grade 10) of learners who used the service regularly, compared to those who did not use it regularly?

Analysis of the data from 1,950 learners showed that the Mobile Mathematics service had a positive effect on school attainment in Mathematics. The differences in the mean of their individual shift in attainment from Grade Nine to Grade 10 are presented in Table 4.

In a context where Mathematics attainment tends to decline substantially from Grade Nine to Grade 10 (in these 30 schools), the learners who used the service regularly saw their attainment decline less than their peers. On average, learners who used the service regularly saw a decline in attainment from Grade Nine to Grade 10 that was seven percentage points smaller than the decline evident for learners who did not use the service regularly. The learners who benefitted most from the service (who showed the greatest shift in their attainment) were those who had narrowly failed Mathematics (Code 2: 26-39%), or attained distinctions (Code 7: 80-100%) in Grade Nine. This raises the further question of how to better shift attainment for the learners in the middle groups (from Code 3 to Code 6) in Grade Nine, who continue to take Mathematics (and not Mathematics Literacy) in Grade 10.

Interpreting the Findings

In this section, we provide our interpretation of the findings. In doing so, we attend to the question: why do we think that there was a smaller decline in attainment from Grade Nine to Grade 10 for regular users of the service, compared to their peers who did not use the service?

We could not apportion the smaller decline in attainment solely to the service. The learner activity levels are entangled with their motivation and their effort to invest their time in independent out-of-school mathematics study. Roberts and Vanska (2011) established that the main factor for non-use was lack of access to technologies, and we found that both control and experimental groups included a spread of prior attainment categories. But we were not able to match experimental learners to control learners to control for the time spent on independent out-of-school study. However, given our knowledge of the South African public schooling context, we think it unlikely that the control learners accessed as many mathematics questions outside of school time. Nor do we think they received as much feedback on their solutions through other means (such as homework set and marked by a teacher, or self-marking of textbook exercises). As such, we hypothesise that the experimental group had far greater exposure to, and feedback on, closed mathematics questions and their solutions than did the control group. We speculate that this increased exposure gave them a richer opportunity to improve their procedural fluency in mathematics calculations and problem-solving procedures than was available to their peers who did not make use of the service. We think this may explain their smaller decline in attainment from Grade Nine to Grade 10, compared to their peers who did not use the service.

M-learning practitioners and educational technology planners may consider this service, and its related evaluative studies, to be an example of stakeholder collaboration in a developing country context, with a focus on delivering resources for schools, and a concurrent commitment to researching its effect on education outcomes (as measured through shifts in school attainment). The study reveals that 21% of Grade 10 Mathematics learners may voluntarily make use of this m-learning resource, with little involvement from their teachers. In so doing, they may show a smaller decline in the school assessment results from Grade Nine to Grade 10, in comparison to their peers, who do not use the service over a one-year period. Their mean shift in attainment masks the substantial shifts that are possible for exceptional users, who used the service far more than the average learner.

Considering the methodology adopted, we caution that it is not yet known whether the effect observed was solely a result of the m-learning service. The observed effects may be a result of an underlying factor distinguishing the control from the experimental groups, and/or of underlying attributes of the design of the service. Further research is required to investigate the reasons for the findings, and to establish whether there will be similar findings when standardised assessments of Mathematics attainment are used.

An additional generic benefit of m-learning emerges from this study: m-learning services provide a potentially reliable measure of the effort that individual learners put into using a learning service in relation to their peers. Traditionally, measuring uptake, completion and performance rates on homework activity (often set and marked by teachers) has been a time-consuming and expensive process. With m-learning, electronic tracking data on uptake, use and attainment within structured learning environments can now be obtained easily and cheaply. The portability of personally owned mobile devices means that m-learning services can be available most of the time and in most out-of-school environments, making it possible to reveal usage patterns in more detail than was previously viable. With this data, it becomes possible to analyse educational attainment in relation to individual effort (in respect of using a particular m-learning service) during and after school hours.

Policy makers and researchers in mathematics education in South Africa may also find this study to be of interest. In this context, there is generally poor attainment in mathematics at the Grades 10, 11 and 12 levels (as well as the declining results from Grade Nine to Grade 10 evident in these schools). This study includes an exciting auxiliary finding about the out-of-school-time work ethic and motivation for independent learning that is evident amongst one-fifth of this particular group of Grade 10 Mathematics learners. It is not known whether similar findings would be obtained for a larger population. However, this finding raises the question as to whether the informal outside-of-school time of Grade 10 (and by extension, Grade 11 and Grade 12) learners, and their drive to work independently on mathematics, is being optimally utilised in other mathematics-related interventions.

From the theoretical perspective, we found that Strigel and Pouezevara (2012) provide a useful framework on variations in mobile learning configurations. We were able to locate this m-learning service within the framework, which we hope facilitates some comparison with other interventions. However, we found ourselves attending to at least two important dimensions that are not present in the framework.

First, we think that an access and affordability spectrum should be included in this framework. In the resource-constrained context of South Africa, where consideration of m-learning interventions should focus on redress and equity, we consider this spectrum to be a fundamental consideration. We think that this ranges from free public access to suitable devices and free broadband data on one end, to Bring Your Own Device (BYOD) access models and private individual data contracts for broadband data on the other. Subsidised data (by government and/or operators) and public investments into improved access to mobile devices fall somewhere on this spectrum. This service offered free public access to data, but devices were personally owned or borrowed from family members or friends.

Second, we think that a mathematical pedagogy spectrum is required. We notice that there is very little in the Strigel and Pouezevara (2012) framework that focuses directly on the knowledge, attitudes and beliefs about mathematics, and how it is taught (amongst learners or teachers of the subject). Introducing this spectrum would require m-learning interventions in mathematics to position themselves in relation to contrasting approaches to Mathematics teaching and learning, for particular mathematics topics and particular age groups. This service has not yet situated its pedagogical approach in relation to other services.

Conclusion

In sum, this study confirms that learning remains a messy process: what is put into the system (be it an m-learning or other intervention) does not lead neatly to an envisaged shift in attainment. Some learners take up the opportunity, others do not; educational interventions appeal to and affect some learners and not others; some learners put in much effort and show improved attainment, while others put in the same effort with shifts in attainment less evident. So the complexity of the learning process remains. However, m-learning at least simplifies the measurement of effort (in this case reflected in uptake and use of an m-learning service) as one of many variables to consider in accounting for shifts in educational attainment. The modest investment made in this service, which excluded extensive teacher training or the wide-scale delivery of technologies, has been shown to yield a positive effect in these 30 South African public schools (at least for these learners who owned or could borrow an Internet-enabled mobile device).

Acknowledgements

This research was funded by Nokia Corporation, and undertaken by Neil Butcher and Associates in 2010, where Nicky Roberts was the project manager. At that time, Riitta Vanska was the Nokia project manager overseeing the research contract. Sanna Eskelinen was subsequently employed by Nokia Corporation in this position, and remains responsible for overseeing the research relating to uptake, use and impact of the Nokia Mobile Mathematics service. Garth Spencer-Smith, a Kelello Consulting associate, contributed to the writing of this article for journal publication purposes.

Notes

i. By way of example, the formation of the National Education Collaboration Trust (NECT) is an expression of this interest. There is further evidence of this interest in the South African Basic Education Conference (SABEC, 31 March - 1 April 2014) with its focus on "improving education through stakeholder collaboration" and where its sub-theme "delivery of resources to schools" showcased numerous technology-enhanced learning initiatives (SABEC Conference, 31 March - 1 April 2014).

ii. The phrase "mobile penetration" is the conceptual term adopted in the mobile industry to indicate the number of mobile devices divided by the given population.

iii. A mobile penetration of more than 100% indicates that there are more mobile devices than there are people in the country.

iv. For more detail on the complexities of using assessment as a measure of effect in mathematics education in South Africa, see Dunne, Long, Craig and Venter (2012).

v. There are nine provinces in South Africa. The schools were selected from three of these provinces.

vi. These electronic devices can be simple or advanced mobile phones, portable media players, pocket PCs, portable game players (e.g. Nintendo DS), tablet computers, or even custom handheld devices.

vii. For recent reviews of m-learning and mathematics see, for example, Strigel and Pouezevara (2012) and Spencer-Smith and Roberts (2014).

viii. This may appear to contradict the earlier claim that research on technology-enhanced learning initiatives in developing countries is relatively well documented. However, we draw the readers' attention to the fact that this latest claim pertains to m-learning (which is a relatively new addition to the ICT in education arena).

ix. This was subsequently split into two departments: the Department of Basic Education (where this project would be located) and the Department of Higher Education (where the teacher training components of this intervention would be located).

x. Note that this assumption was challenged in the context of an intervention designed to allow for minimal involvement by teachers. The service was intended for voluntary uptake and use by learners for independent study and revision (mostly conducted outside of school time).

xi. The teacher training involved a short introduction session, so that teachers were aware of the service and could introduce it to their learners. Teachers were expected to encourage their learners to use the service outside of school time.

xii. To view an updated version of the service - consult https://math.microsoft.com

xiii. All schools were state-funded (public) schools. The Department of Education's involvement in selecting schools was welcomed, as it ensured there was a diversity of schools meeting the criteria agreed upon with the provincial departments. This mitigated corporate bias in the selection of the schools. All public schools are monitored by their districts and the provincial Department of Education.

xiv. Learners for whom there was no data were discarded from the data set. As the return rate was high, data was available from all schools, and the resulting population was large (nearly 2,000 learners), this return rate was considered sufficient for meaningful research.

xv. At the time of the study, the national standardised assessment Grade 9 Annual National Assessment (ANA) had not yet been implemented, and the only standardised assessment was the matriculation certificate (at Grade 12 level).

xvi. Such analysis was conducted for the broader grey literature evaluation, but is not focused on in this study.

xvii. This possible bias was mitigated by the first author being appointed as an independent consultant to undertake the research (as recommended by the national Department of Education). In addition, the second author was not involved in the initial research and reflected critically on its findings for this journal article.

xviii. There was a median of 100 questions per learner.

xix. There was a median of 210 questions per active learner.

xx. At this time, the Grade 11 service was not yet complete or available for use.

xxi. Attainment results are reported to learners and parents in South African public schools as codes 1-7.

xxii. With the smallest group comprising 18 learners, it was felt that these were big enough groups for each sub-category to provide meaningful data for analysis.

xxiii. This data was checked against the usage patterns (usage over time) for this exceptional learner, and compared to views and posts data. It was found to be an accurate reflection of this user's activity levels.

References

Carnoy M, Chisholm L & Chilisa B (eds.) 2012. The Low Achievement Trap: Comparing Schools in Botswana and South Africa. Pretoria: Human Sciences Research Council (HSRC) Press.

Chigona A, Chigona W & Davids Z 2014. Educators' motivation on integration of ICTs into pedagogy: case of disadvantaged areas. South African Journal of Education, 33(3):Art. # 859, 8 pages. doi: 10.15700/201409161051

Crompton H 2013. A historical overview of mobile learning: Toward learner-centered education. In ZL Berge & LY Muilenburg (eds). Handbook of mobile learning. Florence, KY: Routledge.

Daher W 2010. Building mathematical knowledge in authentic mobile phone environments. Australasian Journal of Educational Technology, 26(1):85-104.

Deloitte & GSMA 2012. Sub-Saharan Africa mobile observatory 2012. Available at http://www.gsma.com/publicpolicy/wp-content/uploads/2012/03/SSA_FullReport_v6.1_clean.pdf. Accessed 14 December 2012.

Dunne T, Long C, Craig T & Venter E 2012. Meeting the requirements of both classroom-based and systemic assessment of mathematics proficiency: The potential of Rasch measurement theory: original research. Pythagoras, 33(3) Art. #19, 16 pages. http://dx.doi.org/10.4102/pythagoras.v33i3.19

Ford M & Botha A 2010. Pragmatic framework for Integrating ICT into education in South Africa. IST-Africa, Durban, South Africa, 19-21 May.

Johnson L, Adams S & Cummins M 2012. NMC horizon report: 2012 K-12 edition. Austin, TX: The New Media Consortium.

Koszalka TA & Ntloedibe-Kuswani GS 2010. Literature on the safe and disruptive learning potential of mobile technologies. Distance Education, 31(2):139-157. doi: 10.1080/01587919.2010.498082

Kreutzer T 2009. Generation mobile: online and digital media usage on mobile phones among low-income urban youth in South Africa. MA thesis. Cape Town: University of Cape Town.

Liu Y, Han S & Li H 2010. Understanding the factors driving m-learning adoption: a literature review. Campus-Wide Information Systems, 27(4):210-226. http://dx.doi.org/10.1108/10650741011073761

Mishra S (ed.) 2009. Mobile Technologies in Open Schools. Vancouver, BC: Commonwealth of Learning. Available at http://www.col.org/PublicationDocuments/pub_Mobile_Technologies_in_Open_Schools_web.pdf. Accessed 12 July 2013.

National Planning Commission, The Presidency, Republic of South Africa 2011. Diagnostic Overview. Available at http://pmg.org.za/files/docs/110609diagnosticoverview.pdf. Accessed 7 April 2014.

Roberts N & Vanska R 2011. Challenging assumptions: Mobile Learning for Mathematics Project in South Africa. Distance Education, 32(2):243-259.

Rossi P, Lipsey M & Freeman H 2003. Evaluation: A systematic approach (7th ed). London: Sage Publications.

Spencer-Smith G & Roberts N 2014. Landscape Review: Mobile Education for Numeracy. Germany: Deutsche Gesellschaft fur Internationale Zusammenarbeit (GIZ) GmbH. Available at http://www.giz.de/expertise/downloads/bmzgiz2014-en-landscape-review-mobile-education-numeracy.pdf. Accessed 24 March 2015.

Strigel C & Pouezevara S 2012. Mobile learning and numeracy: Filling gaps and expanding opportunities for early grade learning. Durham, NC: RTI International. Available at http://www.rti.org/pubs/mobilelearningnumeracy_rti_final_17dec12_edit.pdf. Accessed 26 June 2014.

Taylor N, Draper K, Muller J & Sithole S 2013. National Education Evaluation and Development Unit (NEEDU) National Report 2012: Summary Report. Pretoria: NEEDU. Available at http://www.saqa.org.za/docs/papers/2013/needu.pdf. Accessed 7 April 2014.

Thomas K & Orthober C 2011. Using text-messaging in the secondary classroom. American Secondary Education, 39(2):55-76.

Thomas KM & McGee CD 2012. The only thing we have to fear is... 120 characters. TechTrends, 56(1):19-33. doi: 10.1007/s11528-011-0550-4

Traxler J 2009. Current State of Mobile Learning. In M Ally (ed). Mobile Learning: Transforming the Delivery of Education and Training. Edmonton, AB: Athabasca University (AU) Press.

Trucano M 2005. Knowledge Maps: ICT in Education - What Do We Know about the Effective Uses of Information and Communication Technologies in Developing Countries? Washington, DC: Information for Development Program (infoDev)/World Bank. Available at http://files.eric.ed.gov/fulltext/ED496513.pdf. Accessed 28 March 2015.

United Nations (UN) n.d. We Can End Poverty: Millennium Development Goals and Beyond 2015. Available at http://www.un.org/millenniumgoals/global.shtml. Accessed 16 May 2014.

United Nations Educational, Scientific and Cultural Organization (UNESCO) 2012. Turning on Mobile Learning in Africa and the Middle East: Illustrative Initiatives and Policy Implications. UNESCO Working Paper Series on Mobile Learning. Paris: UNESCO. Available at http://unesdoc.unesco.org/images/0021/002163/216359e.pdf. Accessed 11 September 2014.

Wagner ED 2005. Enabling Mobile Learning. EDUCAUSE Review, 40(3):40-53.

Appendix A: Activity Levels and Prior Attainment Categories

This appendix presents the group sizes for the comparison of activity level categories to prior attainment levels.