South African Journal of Science

Online version ISSN 1996-7489

Print version ISSN 0038-2353

S. Afr. j. sci. vol.110 no.1-2 Pretoria Jan. 2014

RESEARCH ARTICLE

The first-year augmented programme in Physics: A trend towards improved student performance

Naven Chetty

School of Chemistry and Physics, University of KwaZulu-Natal, Pietermaritzburg, South Africa

ABSTRACT

Amidst a critical national shortage of qualified Black graduates in the pure and applied sciences, the University of KwaZulu-Natal has responded to a call from government for redress by launching the BSc-4 (Augmented) Physics programme. In this paper, the methods employed to foster learning and to encourage student success in the Mechanics module of the Augmented Physics programme are described and discussed. The use of problem-based learning and a holistic learning policy that focuses on the emotional, physical and knowledge development of the student seems to have yielded higher throughput in the first semester of an undergraduate programme in Physics. Furthermore, the results point to an increase in the students' conceptual understanding of Mechanics. To appraise this success, the results of the 2007-2009 cohorts, with and without teaching interventions in place, were analysed. These initial analyses pave the way for a course designed to benefit the student and improve throughput. These methods are not unique to Physics and can be adapted for any module in any country.

Keywords: problem-based learning; access; extended curriculum; language; study techniques

Introduction

Worldwide, students have difficulty with the language of physics, be it subject-specific terminology or the use of everyday language in a physics context.1 Thus, even definitions may give students trouble.2 This difficulty is compounded when learning in a second or third language,3-7 which is the case in South Africa where only 12% of students applying for tertiary education are mother-tongue speakers of English.8

Further difficulties in South Africa can be attributed to a dysfunctional education system as a result of the apartheid regime's under-development of Black human potential.7 This system gives rise to the problem of talented students being unable to study further in the sciences because of inadequate schooling.7 Furthermore, as a critical national priority, South African universities have been urged to alleviate the problem of 'scarce skills' by increasing the number of Black graduates in the natural and applied sciences.8,9 Universities in South Africa have attempted to redress the inadequate number of Black graduates through a variety of programmes offering alternative access and support.10-13

Hutchings and Garraway13 discuss in great detail the current position of extended curricula in South Africa and highlight the need for such measures in light of the current shortcomings of schooling. In particular, they discuss the various approaches employed at other South African higher education institutions to implement extended curricula as part of the government's National Development Plan. The University of KwaZulu-Natal's (UKZN's) augmented programme is unique among these extended curricula, and thus its description should assist other institutions in planning the implementation of extended curricula. At UKZN, the Centre for Science Access (CSA) seeks to address the needs of students from disadvantaged schools who do not meet the normal requirements for entry to the Faculty of Science and Agriculture. In the CSA, students register for a 4-year BSc in either a foundation or an augmented programme. In the BSc-4 (Foundation) programme, students engage in learning that is modelled on the Science Foundation Programme, which has been described by Kioko12, Grayson14-15 and Barnsley16. The Physics programme for the BSc-4 (Augmented) has not been described previously in the literature; it is described here.

Level 1 Physics at tertiary level is highly dependent on problem solving.17,18 Problem solving is often a stumbling block for many students in Physics as they perceive it to be difficult.19,20 Research also shows there is often little or no change in conceptual understanding before and after formal instruction and that students are unable to apply the concepts that they have studied to the task of solving quantitative problems.20

Here, the teaching interventions instituted for the Physics module in the BSc-4 (Augmented) programme are described, and their effectiveness in improving students' ability to answer typical Mechanics questions, as given in first-year textbooks,21-22 is appraised. An attempt is also made to investigate the problem-solving ability of three cohorts of students. The results, although preliminary, will be of interest to researchers in the field of extended curricula.

Context of the study

Educational context

The BSc-4 (Augmented) degree is for students from disadvantaged schools who are interested in science degrees but whose matric results are slightly below university entry requirements, although they have a full matriculation exemption or a National Senior Certificate (NSC) qualification. The entry requirements for both the augmented and the mainstream programmes are listed in Table 1. Students in the Augmented Physics programme are admitted into first-year BSc but initially take fewer courses, with extra tutorials and practicals, as well as courses in Scientific Communication and Life Skills. The first year of the degree is therefore spread over a maximum of 2 years, during which students can also take some second-year modules. Thereafter, students carry the normal load for their degrees. Students thus take 4 years to complete a 3-year BSc degree, progressing more slowly but being more assured of success.14-16 The sudden withdrawal of support after the first year of study is of concern, and its impact needs to be investigated further as it may have direct consequences for throughput and pass rates in subsequent years. One needs to be cognisant of this possibility when deciding on the exact structure of the extended curriculum to be implemented. At UKZN, various support structures have been put in place for all students in second year and above, and it has been deemed appropriate not to offer a specific support structure for students in the augmented programme.23

The BSc-4 (Augmented) degree is based on an integrated programme for students' first year of study.23 In this programme, all students register for two compulsory modules to support their studies and two optional modules determined by their choice of degree majors. One compulsory module is Scientific Communication, in which students are coached to improve their English language skills in the context of reading and writing on science topics. They learn the appropriate language, report structures and basic comprehension skills to help them in their chosen degree. Students must pass this module in order to be awarded their degree. This module is, however, the subject of much controversy. Such discrete communication courses have been shown to have very little impact on improving language in a specific discipline such as physics,13,24 and this was indeed the case for this Scientific Communication module. The course provided the students with generic communication skills that had little impact on their physics communication skills. With this in mind, the Augmented Physics module implemented a mechanism of redress that included physics communication and, in particular, communication with respect to understanding, unpacking and answering problems in the problem-based learning (PBL) mode of teaching and learning.

The second compulsory module is Life Skills. This module has an attendance requirement but no assessment requirement. It addresses issues including HIV/AIDS, food security, note-taking skills, career prospects and basic study techniques through workshops and discussions conducted by qualified psychologists. These activities are designed to help students adjust to the social and academic requirements of university.10-12,14-16,24,25 Furthermore, all students in the CSA are encouraged to meet campus psychologists for further counselling on personal, academic, career or social issues. This component has been shown to be most effective in improving the confidence of the students and in helping them overcome the adversities of their past without feeling victimised or alienated in their new environment.25

The students enrolled in the Augmented Physics module have twice the amount of contact time in Physics (but not double the workload) as those registered for mainstream Physics. Although they attend twice as many Physics lectures, students in Augmented Physics still have a lower workload than students in the mainstream programme, as they take only two modules whereas mainstream students register for four. Augmented Physics students attend normal classes for the calculus-based Physics module (four lectures, one tutorial and a 3-h practical per week) alongside their mainstream counterparts. For these classes, they are expected to complete various assignments, such as answering tutorial questions. Being previously disadvantaged, students in the augmented programme have had very little exposure to laboratory work, and thus they are required to attend an additional 3-h laboratory session a few days before their mainstream practical. These additional sessions differ from the mainstream ones: during these sessions, students are introduced to the apparatus, experimental techniques and analytical skills that are required for the practical later in the week.

To further support their studies in the mainstream, students in the augmented programme attend five other contact periods, which the Augmented Physics lecturer uses for explaining or extending the content of the mainstream lectures rather than simply re-lecturing the same content. However, students tend to struggle with the pace of the mainstream lectures, so when required the Augmented Physics lecturer re-lectures the mainstream content. Over and above the assignments for mainstream classes, the lecturer in Augmented Physics assigns further tutorial questions for students to prepare for the classes. The workload in Augmented Physics is initially less intensive than in the mainstream programme to enable the students to adapt to the demands of tertiary physics education, but it is incrementally increased until it is on a par with that in mainstream Physics.

Student context

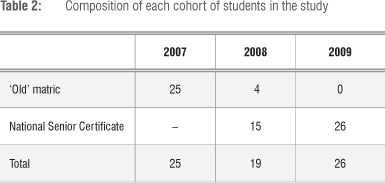

This research was conducted among three student cohorts between 2007 and 2009 who had different secondary school backgrounds. Table 2 shows the composition of the three cohorts of students, according to the examination they wrote in their final school year.

The 2007 cohort of students followed a content-based traditional school curriculum. The curriculum statement for this system outlined clear learning objectives from which teachers should work.26 The final examination included questions across a range of levels of Bloom's taxonomy.26,27 In this way, the exam encouraged teachers to focus on teaching some problem-solving skills to the learners. The learners had no continuous assessment tasks, and their final marks were obtained wholly from the final exam.

By contrast, students matriculating in 2008 followed the new NSC curriculum, which is purported to be outcomes-based education (OBE). These learners were the guinea pigs in that they had, along with their teachers, constantly pioneered a new system over 12 years of schooling. The curriculum statement for this certificate leaves quite large areas open for teachers to interpret. Furthermore, it appears to cover even more content than the curriculum in previous years,28,29 thus creating a situation in which teachers might need to rush through material in order to complete the syllabus superficially. This situation can result in learners simply plugging numbers into an equation. Consequently, there may be little time to teach learners to solve problems that require deeper interrogation and integration of the subject. Integration deals with the extent to which teachers use examples, data and information from a variety of disciplines and cultures to illustrate the key concepts, principles, generalisations and theories in their subject area or discipline.30 The final result for these learners includes a component of continuous assessment. The majority of the 2008 cohort came through the new curriculum, although the cohort also included a few students who matriculated in 2007 but only enrolled for BSc-4 in 2008. All of the students in the 2009 cohort matriculated through the new NSC.

Researcher context

The author taught the Augmented Physics module for the three years under study. He had 8 years' experience tutoring physics at high school and tertiary level and completed his PhD during this period. The author taught the module for the first time in 2007. In a mixed-modal research study (based on both qualitative and quantitative data) such as this one, the observer is part of the research process.31-35 Therefore, despite attempting to be a disinterested observer, the author's particular perspective has undoubtedly framed this study.32-35 In this paper, the qualitative research in which the author/researcher was directly involved is presented. The aim of the study was to gather data in the form of quantitative assessments and to supplement those findings with qualitative data in the form of rich, content-based descriptions of people, events and situations, obtained by using different, especially non-structured, techniques to discover the stakeholders' views; to analyse the gathered data; and, finally, to interpret the findings in the form of a conceptually or contextually dependent grounding.32-36

Theoretical framework

The effectiveness of a particular educational approach (in this case the Augmented Physics programme) and its teaching effectiveness (as demonstrated by student knowledge of the subject matter and evidenced by performance in the assessments) need to be determined. The aim of this study was to determine the knowledge gained by students in terms of factual knowledge, conceptual understanding and functional proficiency in physics and, in particular, mechanics.32-35

The mixed-modal approach was used because it directly addresses the question of effective teaching: it allows quantitative analysis of the students' results on particular questions designed to test various levels of the revised Bloom's taxonomy,27,36 and it relates their performance to follow-up questions and discussions (qualitative analysis). Although very useful, this method has the shortcoming that its findings are specific to the teaching measures implemented.32-35 A further justification for a quantitative approach arose from the large number of students enrolling in mainstream Physics, which made conventional assessment tasks difficult to implement and tedious to mark. These numbers prompted a departmental decision to move away from asking conceptual understanding questions (so-called 'long questions' with proofs and derivations) in tests, towards more problem-based/quantitative assessments such as multiple-choice questions (MCQs) or short numerical-response questions.32-35,37 Given the debate surrounding the use of MCQs,38-41 the results of this research may be debated. However, the questions were designed to meet the specific aim of the researcher, which was to determine the cognitive ability of the students to answer questions at various levels of Bloom's taxonomy27 and the revised Bloom's taxonomy,36 and the results should be interpreted in this light.

Bloom contends there are three types of educational activities27:

• Cognitive: mental skills (knowledge)

• Affective: growth in feelings or emotional areas (attitude)

• Psychomotor: manual or physical skills (skills)

Anderson and Krathwohl36 provide a revision of this taxonomy. The greatest differences between the two versions are changes in terminology: Bloom's six major categories were changed from noun to verb forms. The lowest level of the original, knowledge, was renamed 'remembering'. The levels of comprehension and synthesis became 'understanding' and 'creating', respectively. In this study, the focus was on Bloom's original cognitive domain, and the tests were developed as part of the quantitative approach. The first few questions on the test (approximately 20%) addressed the first cognitive level, that of knowledge27 or remembering.36 If proficient at this level, students should be able to answer questions that simply test basic definitions and recall.36,42

The next set of questions on the test (approximately 40%) related to the second level of Bloom's taxonomy, that of comprehension27 or understanding36. At this level, a competent student would understand the meaning, translation, interpolation and interpretation of instructions and problems.36,37,42,43

The final set of questions (approximately 40%) addressed Bloom's third level: application27 or, in the revised taxonomy, applying.36 This level requires students to use a concept in a new situation or to use an abstraction unprompted. The three highest levels of analysis, synthesis and evaluation were not considered in this study, although linking the revised Bloom's taxonomy36 with PBL aims to develop them as students progress through the module.44 Yadav45 refers to this form of instruction as the transference of higher-order thinking skills, which he describes as the ability of the student to collect, analyse and evaluate information to draw conclusions or make inferences;44,45 his approach45 forms the basis of the principle used in this paper. In preparing the questions set at the revised Bloom cognitive levels, the method proposed by Serrat46 was used. In this method, PBL is used together with Bloom's taxonomy (or the revised taxonomy) to foster higher-order thinking skills. Serrat's46 approach makes provision for problem-solving techniques for use in PBL. Four of these techniques are focused on here:

1. Affinity diagrams encourage students (either in a group or as individuals) to organise ideas into a common theme.

2. Brainstorming encourages students (either in a group or as individuals) to generate a large number of ideas to solve a problem or to find ways of solving the problem.

3. Flowcharts are used to help students identify the aspects of the content that they do not understand before problem-solving skills are taught.

4. The 'five whys' technique encourages students to ask at least five questions when solving a problem, which helps them to think 'out of the box'.

Fogler and LeBlanc47 were the first to develop the concept of linking Bloom's taxonomy to PBL and thereby to a scientific field (in their case, engineering). This study was modelled on their approach.

Method

In evaluating the success of the teaching interventions employed in the Augmented Physics programme, one could consider examination pass rates alone. However, pass rates may give only a superficial evaluation and may not reveal the finer aspects of students' improved performance or the challenges faced by a lecturer in facilitating this change. Consequently, analyses of student performance on tests related to their problem-solving ability are also included.32,34,35

At the start of the first semester in each year under consideration, the same pre-test was administered to each cohort in the first lecture of the Augmented Physics class, in which students worked alone and without reference material. As a formative assessment instrument, the purpose of the pre-test was to gauge the students' understanding of physics at school-leaving level and, consequently, their ability to solve problems. Students were informed beforehand that their performance in the test would not affect their class mark, in order to reduce test anxiety, which might otherwise have adversely affected their performance. Furthermore, time pressure was alleviated by allowing students up to 1 h to answer the 30-mark test which, according to common practice in the Physics Department, would normally have taken 30 min. The lecturer then collected the scripts, analysed the responses and checked the marking and mark allocation before handing the scripts back to the students. A discussion session with the students then followed, either one-to-one or in a classroom group, to obtain data for the qualitative aspect of the research.

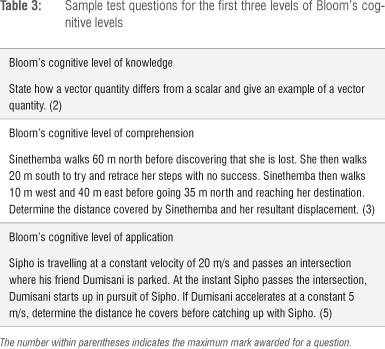

The test consisted of three sections, each evaluating student performance at one of the first three cognitive levels of the revised Bloom's taxonomy described previously.36 The first section, at the level of knowledge (remembering), simply required students to recall basic definitions. The second section, at the level of understanding, entailed interpreting a straightforward 'story' to extract information about a sequence of actions. The final section, at the level of applying,36 required students to use a concept in a new situation or to use an abstraction unprompted; that is, students had to apply what was learnt in the classroom to novel situations. Examples of all these questions are given in Table 3.42

Results and discussion of interventions

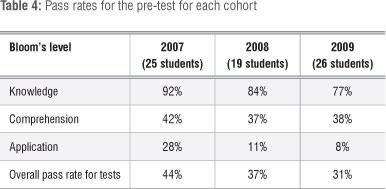

The results given in the tables and figures that follow indicate the percentage of students passing at a particular level of the revised Bloom's taxonomy. A pass is defined as a mark of at least 50%.
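The per-level pass-rate calculation can be sketched in a few lines. This is a minimal Python illustration under stated assumptions, not the author's marking procedure: the section maxima of 6, 12 and 12 marks are a hypothetical split derived from the approximate 20%, 40% and 40% shares of the 30-mark test, and the student scripts are invented purely to exercise the code.

```python
# Hypothetical section maxima: roughly 20%, 40% and 40% of a 30-mark test.
SECTION_MAX = {"knowledge": 6, "understanding": 12, "application": 12}
PASS_FRACTION = 0.5  # a pass is a mark of at least 50%


def passed(mark, max_mark):
    """True if a mark is at least 50% of the marks available."""
    return mark >= PASS_FRACTION * max_mark


def section_pass_rates(scripts):
    """Percentage of students who pass each section (Bloom level) separately."""
    rates = {}
    for section, max_mark in SECTION_MAX.items():
        n_passed = sum(1 for s in scripts if passed(s[section], max_mark))
        rates[section] = 100.0 * n_passed / len(scripts)
    return rates


def overall_pass(script):
    """True if the total across all sections is at least 50% of the test."""
    return passed(sum(script.values()), sum(SECTION_MAX.values()))


# Invented example scripts (marks per section), for illustration only.
scripts = [
    {"knowledge": 6, "understanding": 4, "application": 3},
    {"knowledge": 5, "understanding": 7, "application": 6},
    {"knowledge": 3, "understanding": 6, "application": 2},
    {"knowledge": 4, "understanding": 5, "application": 7},
]

print(section_pass_rates(scripts))  # {'knowledge': 100.0, 'understanding': 50.0, 'application': 50.0}
print([overall_pass(s) for s in scripts])  # [False, True, False, True]
```

In this invented data, every student passes the knowledge section while only half pass each of the other two, and the overall pass depends on the total rather than on any single section. This is why reporting a separate pass rate per level can reveal weaknesses that an overall pass rate hides.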

Table 4 shows the results of the pre-test. It is interesting to note that in the mixed 2008 cohort, three of the four 'old' matric students achieved an overall pass in the pre-test. The results represented in Table 4, although poor, were not unexpected. Various educational sources had predicted that the new NSC would not adequately prepare students for the rigours of tertiary study, where emphasis is placed on the higher levels of Bloom's taxonomy.48-50 Jansen51-53 was critical of OBE even before its launch, and his prediction was vindicated by the education ministry's decision to scrap OBE.54 However, a single pre-test could not be used to judge this system conclusively, because performance on the test may have been influenced by student anxiety, despite the precautions taken to avoid it. The students were therefore given advance notice that they would write a test a week after the pre-test. The students were also explicitly informed of the material that the test would cover. No interventions were made in the week between the tests, and the lecturer conducted traditional lectures with no additional support.

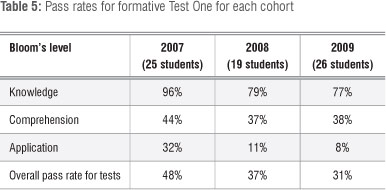

The results of the first formative test in the Augmented Physics module, which again did not contribute to the students' year mark, are shown in Table 5. This test was very similar in nature to the pre-test and tested similar concepts. The results closely resembled those of the pre-test. This time, all four of the 'old' matrics in the 2008 cohort passed overall. Table 5 further shows that the vast majority of the students in all three years answered the questions in the knowledge section correctly. This result is not surprising, as the schooling system prepares students to memorise material, so their ability to simply recall definitions is well developed. Noticeable, however, is the appreciable drop over the years in the percentage of students who answered these questions correctly. This finding may be a consequence of the inordinate amount of group work associated with OBE, in which learners are not required to take ownership of their work and studying. Instead, learners are given more opportunity to rely on the efforts of the more active learners in a group, which can curb their own learning substantially.48-50,52-54 It must also be noted that students were not marked on the correct use of language and grammar in this section. The researcher inferred what the students intended to convey even when their written expressions did not make conventional linguistic sense.

The results for the understanding section were in stark contrast to the researcher's perception that the questions were easy: this section proved to be an immense challenge to the vast majority of students across all three years. The researcher conducted a qualitative investigation, interviewing all the students who had failed to obtain 50% or more in this section, to identify the reasons for their poor performance. In all three years, the students battled to interpret the questions because of the perceived complexity of the language used. As a result, the students were unable to separate relevant from irrelevant information in the question; this finding tallies strongly with reports in the field55-57 which highlight that incorrect student answers are often linked to language difficulties rather than to subject or content difficulties. It was clear from this part of the study that the students' language problem would need to be addressed in conjunction with the physics interventions, and that the discrete language module had failed to address it.58,59

The results in Table 5 also show that many of the students were unable to solve the questions in section three, the application level. The qualitative investigation again revealed that this inability may be attributed to the complexity of the language: by their own admission, the students were unable to dissect a question into smaller, more manageable parts. In all three years, the students also admitted to attempting to solve the problem without first determining the nature of the question posed. They could, by and large, readily identify that they needed to use the kinematic equations; however, they could not see the link between two scenarios, such as the distance covered and the time of the motion.
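To make the 'link between two scenarios' concrete, consider a hypothetical two-stage problem (it is not taken from the pre-test or from Table 3): a car accelerates uniformly from rest at $a$ for a time $t_1$ and then travels at constant velocity for a further time $t_2$. The quantity that links the two stages is the velocity $v_1$ at the end of the first stage, which becomes the constant velocity of the second:

```latex
\begin{aligned}
\text{Stage 1:}\quad & v_1 = a t_1, \qquad x_1 = \tfrac{1}{2} a t_1^{2} \\
\text{Stage 2:}\quad & x_2 = v_1 t_2 = a t_1 t_2 \\
\text{Total:}\quad   & x = x_1 + x_2 = \tfrac{1}{2} a t_1^{2} + a t_1 t_2
\end{aligned}
```

For example, with $a = 2\ \mathrm{m\,s^{-2}}$, $t_1 = 5\ \mathrm{s}$ and $t_2 = 10\ \mathrm{s}$, this gives $v_1 = 10\ \mathrm{m\,s^{-1}}$, $x_1 = 25\ \mathrm{m}$, $x_2 = 100\ \mathrm{m}$ and $x = 125\ \mathrm{m}$. A student who treats each stage in isolation has no way of obtaining $x_2$, because the speed needed for the second stage is only available as the output of the first.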

The results of this formative test then set in motion a series of interventions for the Augmented Physics module. The first two interventions sought to address the students' language problem informally. Because time was limited, a more formal approach to the complexities of language and its impact on formal learning could not be taken, even though it was clearly apparent that the Scientific Communication module was not adequately preparing the students for the language of physics. The first intervention was the introduction of dictionaries into lectures. The lecturer would often take dictionaries to lectures, and students were encouraged to look up the meanings of words they did not understand. They were also asked to start their own physics dictionary by writing down the meanings of difficult words they encountered in the module. This intervention varied slightly across the three years, but its main thrust remained consistent. In all three years, almost 95% of the students had cellular phones with WAP capabilities, so they were introduced to the mobile Internet, especially to online dictionaries and e-learning sites, as a way of introducing them to technological learning, one element of the PBL method.60-64 Introducing this technological element of the PBL approach also helps to determine which factors contribute to the integration or non-integration of such tools into the curriculum.

The second measure was the implementation of an English-only policy for lectures. Students were not allowed to communicate in their home languages during any lectures.59,64-70 Even if the communication was not physics related, the students were still required to communicate in English. This policy was initially quite difficult to implement because the students reacted quite negatively to it. They believed that the policy belittled their language and discriminated against their cultural beliefs. The students were then counselled on the reasons behind the policy, and it gradually became more acceptable. The lecturer observed on many occasions that if a student did stray from this policy, the other students in the class or group would simply respond to his or her question in English.61,71 The language problem is often compounded by the fact that educators at the students' former schools (usually semi-rural and rural schools) would often 'code-switch', that is, switch between English and the vernacular. Often the educator would start the lesson in English and then revert to the vernacular when a concept with a relatively high degree of difficulty was encountered. Students were also given an in-depth explanation of the usage of words such as 'describe', 'explain', 'calculate', 'determine' and 'solve'. These words had to be recorded in their dictionaries, and students were then randomly asked to explain the meaning of these words to the lecturer or their peers during lectures.

The final measure, and probably the most influential, was the PBL approach. PBL is a student-centred instructional strategy in which students collaboratively solve problems and reflect on their experiences. Characteristics of PBL72-74 are that:

• Learning is driven by challenging, open-ended problems

• Students work in small collaborative groups

• Lecturers take on the role of 'facilitators' of learning.

Advocates of PBL claim that it can be used to enhance content knowledge and to foster the development of communication, problem-solving and self-directed learning skills.47 The PBL approach should encourage students to be responsible for their own learning and understanding. In other words, PBL should discourage students from memorising content or copying solutions to assigned problems, because they should see that tutorial questions are assigned to develop conceptual understanding and critical or lateral thinking rather than simply to supply a final answer. Consequently, ownership of the academic experience lies with the students, as they have to demonstrate understanding of the content through PBL. PBL often forces students to study their materials (notes, textbooks, readings) from lectures intensively and to make their own notes to help with solving the problems. By splitting the class into smaller groups and assigning the groups problems to attempt using the PBL approach, students are forced to learn the associated theory before attempting the problem. Ownership of the academic experience further encourages them to think laterally and critically, a consequence of the reflection component of the PBL approach. Thus, PBL is an attempt to help students think 'out of the box',44-47,75 whereas OBE is an approach to education in which decisions about the curriculum are driven by the exit learning outcomes that students should display at the end of the course.76

The students were split into small groups of two or three individuals. Problems were assigned to these groups, and they were required to solve them without consulting the other groups. Each group was allocated different problems of the same level of difficulty. To solve a problem, students had to define a structured, step-by-step approach to the solution. In some common traditional instruction scenarios, students are handed a cookbook list of steps to solve a given problem (e.g. Part A: solve for velocity; Part B: solve for Δx).44-47,74,75 In such situations, students may struggle with performing some of the steps or with the implicit meaning of these steps or, worse yet, may fail to recognise how each of these steps leads to a global solution to the problem. In a PBL approach, however, students must generate their own step-by-step method to solve each problem. Thus, although difficulties may arise in carrying out a given step, there is no confusion as to the sequence or meaning of each step required. Furthermore, as students work to solve the problem, various solution paths emerge among groups. In this way, students begin to view problem solving as a creative process that can take many forms within a given set of constraints.60 To paraphrase the late Nobel laureate Richard Feynman: good scientists always know at least three ways to solve the same problem. Contrary to traditional problem-solving activities, in which one preferred solution is usually presented, many solutions are possible for any given problem. PBL activities enable students to appreciate that problem solving is not a one-size-fits-all activity and that many solution paths are possible.60

The assigned questions often required the same conceptual understanding, applied to different scenarios. During these sessions students were also taught to link subjects such as Physics and Mathematics, which they often place in isolated 'mental boxes',5,32,35,72 without drawing on the concepts taught in one module when working in the other. This method also facilitated active learning,5,32,35,72 in the sense that the students had to discover and work with the content that they determined to be necessary to solve the problem.43,74 It must be noted that the full PBL approach was not implemented because of the cognitive demand that it would have placed on the students. Instead, fade effect73 scaffolding was implemented in the module's version of PBL to reduce the students' cognitive load. In fade effect PBL, the lecturer provides guidance in the early stages and, as the students gain expertise and confidence, this guidance is gradually reduced.77 The students are first introduced to simple problems and are then gradually given more complex problems in which elements are added to model real-life problems or situations.77,78 This process helps students to make a gradual transition from studying examples to solving problems.79 Another modification to the PBL approach was the combination of mini-lectures on some days with in-class, small-group work on problem sets later in the week.
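Fade effect scaffolding draws on research into fading worked-out steps, such as the study by Atkinson, Renkl and Merrill (reference 73). The Python sketch below illustrates one variant, backward fading, in which a fully worked example gradually turns into an unsupported problem as worked steps are removed from the end. The step list is a hypothetical mechanics example and `backward_fading` is an illustrative name; the paper does not specify exactly how guidance was faded in the module.

```python
def backward_fading(steps):
    """Return a sequence of worksheets, each a list of (step, is_worked) pairs.

    Stage 0 is a complete worked example; each later stage leaves one more
    step, counted from the end, for the student to complete, so the final
    stage is an unsupported problem.
    """
    n = len(steps)
    stages = []
    for faded in range(n + 1):
        # The first n - faded steps are shown worked; the rest are left to the student.
        stages.append([(step, i < n - faded) for i, step in enumerate(steps)])
    return stages


if __name__ == "__main__":
    # Hypothetical solution steps for an introductory mechanics problem.
    steps = [
        "Sketch the situation and list the known quantities",
        "Choose a suitable kinematic equation",
        "Solve the equation for the unknown",
        "Check units and whether the answer is reasonable",
    ]
    for number, stage in enumerate(backward_fading(steps)):
        print(f"Worksheet {number + 1}:")
        for step, worked in stage:
            print(f"  [{'worked' if worked else 'student'}] {step}")
```

Each worksheet withdraws exactly one step of support, which mirrors the gradual reduction of lecturer guidance described above.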

After completing their problem work, the students assessed themselves and each other in order to develop skills in self-assessment and constructive peer assessment. Self-assessment is a skill essential to effective independent learning. The objectives of the PBL approach are to produce students who will43,44,46,77,78:

• Engage the problems they face in their life and career with initiative and enthusiasm

• Solve problems effectively using an integrated, flexible and usable knowledge base

• Employ effective self-directed learning skills to continue learning as a lifetime habit

• Continuously monitor and assess the adequacy of their knowledge, problem solving and self-directed learning skills

• Collaborate effectively as a member of a group.

The PBL approach to the transfer of learning, using the techniques described above, helped to ensure that mutual learning took place and that the knowledge remained active.35,37,44,46 Continuous evaluation was used to determine the level of transfer. Evaluation in the Augmented Physics module included all mainstream evaluations, such as tests, assignments and practical reports, as well as tasks specific to Augmented Physics. These assessments took the form of unseen quizzes, seen quizzes, mini-tests and formal tests. All assessments targeted the first three cognitive levels of Bloom's taxonomy (knowledge, comprehension and application), as discussed previously.27,36

The results of the practical sessions, quizzes and mini-tests held in the month leading up to the first formal assessment are not considered here, as they had a very narrow curriculum focus designed to verify the transfer of initial learning.20,26,35,37,42 These frequent assessments also ensured that the students attempted the prescribed tutorials and homework and kept up to date with the material being taught. The first formal assessment was structured similarly to the pre-test and formative Test One to enable direct comparison.

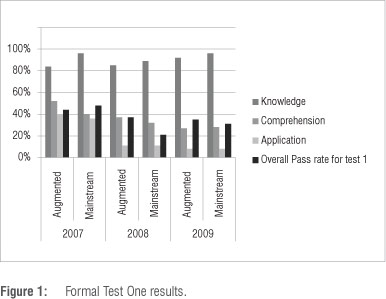

Figure 1 shows the results of these tests. Student performance in the Augmented Physics test was similar to that in the formative assessment written before any of the interventions were implemented. The 2008 and 2009 cohorts of Augmented Physics students, however, performed much worse in the mainstream test than in either the augmented formal Test One or the first formative test, whereas the performance of the 2007 cohort in the mainstream Test One seems consistent with its performance in the augmented assessments. A qualitative investigation of student performance in these years revealed that in 2008 the mainstream lecturer introduced a new section a few days before the scheduled test, telling students that it would not be tested. However, this material was included in the application component of the mainstream formal test, and students were quick to attribute their poor performance to it. Yet this question accounted for only 5 of a possible 40 marks (12.5%), and the students performed equally poorly in the comprehension portion of the test, so it may well not have been the reason, although it does warrant further investigation and possible intervention.

Investigation of the 2009 cohort revealed that, for reasons beyond the students' control, most notably industrial action, the students had to write two mainstream tests on the same day: a Mathematics Level 1 test in the morning and the Physics test in the afternoon. Students in all three years cited insufficient time to answer the questions as another major reason for their poor performance. They were not used to such stringent time demands, as they had often received some extra time in the augmented assessments.

Given the poor results in all three years, the researcher implemented two further interventions and continued reinforcing those introduced previously. The first new intervention was that all the students were interviewed and monitored to determine whether any external factors, such as financial problems, poor living conditions at home, HIV/AIDS, alcoholism, drug addiction or teenage pregnancy, were contributing to their poor academic performance. If any such problem was identified, the student was referred to the appropriate centre for further assistance. Coupled with this intervention came the development of self-awareness in the students.65 Part of this development occurred during the Life Skills component of the programme and was continued by the lecturer through constant pep talks, the screening of motivational video clips, and invitations to academically successful people from similar backgrounds to give motivational talks.65 Workshops on proper study techniques, both general and specific to the Physics module, were also held during the augmented practical sessions. During these workshops the students were taught how to draw up study timetables and how to plan for success. Proper time management during tests and exams was also discussed. To this end, the 'mark a minute' philosophy (budgeting roughly one minute per mark) was explained and applied to all augmented assessments to bring them in line with the mainstream. Students were at first alarmed by this idea but gradually came to accept it as something they could not change but would have to adopt. Because some students did not have watches, they often asked for permission to use their cellular phones to monitor the time while attempting tutorial questions during the designated tutorial times. To reinforce the practice further, every assigned question was labelled with its maximum mark, and a clock was provided for tutorials and tests in which cellular phones could not be used.
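As a rough illustration of the 'mark a minute' idea, the Python sketch below converts the marks on a paper into a time budget per question and a running clock checkpoint. It assumes the usual reading of the rule (about one minute per mark, adjustable through the hypothetical `minutes_per_mark` parameter); the question names and marks are hypothetical, chosen to total 40 marks like the 2008 mainstream test discussed earlier.

```python
from itertools import accumulate


def mark_a_minute_plan(question_marks, minutes_per_mark=1.0):
    """Return (question, minutes allowed, clock time by which to finish) tuples.

    Assumes the 'mark a minute' rule: roughly one minute per mark, so each
    question's time budget is proportional to its marks.
    """
    names = list(question_marks)
    budgets = [question_marks[name] * minutes_per_mark for name in names]
    finish_times = list(accumulate(budgets))  # running total of minutes used
    return list(zip(names, budgets, finish_times))


if __name__ == "__main__":
    # Hypothetical 40-mark test.
    paper = {"Q1": 10, "Q2": 15, "Q3": 10, "Q4": 5}
    for question, minutes, finish_by in mark_a_minute_plan(paper):
        print(f"{question}: {minutes:.0f} min, finish by minute {finish_by:.0f}")
```

For this hypothetical paper the plan allots 10, 15, 10 and 5 minutes, with checkpoints at minutes 10, 25, 35 and 40, which is the kind of cue a wall clock in the tutorial room makes easy to follow.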

The Augmented Physics test was always scheduled a week before the mainstream test. It was designed to be formative and to help students evaluate their understanding of the material taught. The tests were pitched at the same level as, or at a harder level than, the mainstream tests. They were marked and returned to the students a few days before the mainstream tests and were discussed in detail, with model solutions provided. In general, the augmented test aimed to cover at least 85% of the content of the mainstream test. The Augmented Physics lecturer did not have access to the mainstream test beforehand, so as not to favour the Augmented Physics students. The students could use the test to identify areas of learning in which they had difficulty, and could then consult the lecturer or student assistants for help in these and other areas before the mainstream test. It was hoped that these tests would also provide critical feedback to the researcher on the success or failure of the implemented interventions.
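Since the Augmented Physics lecturer did not see the mainstream test in advance, one way to check the 85% coverage target after both tests have been written is to weight each mainstream topic by its marks. The Python sketch below does this; the marks-weighted definition of coverage, the function name `content_coverage` and the topic names and mark allocations are assumptions for illustration, not the procedure reported in the paper.

```python
def content_coverage(mainstream_marks, augmented_topics):
    """Fraction of the mainstream test's marks that fall on topics the
    augmented test also covered (a marks-weighted notion of coverage)."""
    total = sum(mainstream_marks.values())
    if total <= 0:
        raise ValueError("mainstream test must carry a positive number of marks")
    covered = sum(marks for topic, marks in mainstream_marks.items()
                  if topic in augmented_topics)
    return covered / total


if __name__ == "__main__":
    # Hypothetical mark allocation for a 40-mark mainstream Mechanics test.
    mainstream = {
        "one-dimensional kinematics": 10,
        "projectile motion": 10,
        "Newton's laws": 15,
        "work and energy": 5,
    }
    augmented = {"one-dimensional kinematics", "projectile motion", "Newton's laws"}
    share = content_coverage(mainstream, augmented)
    target = 0.85  # the coverage aim stated in the text
    print(f"coverage = {share:.1%}, meets target: {share >= target}")
```

Here 35 of the 40 hypothetical marks fall on topics the augmented test covered, giving 87.5% coverage, just above the 85% aim. Weighting by marks rather than counting topics reflects how much each gap would actually cost a student.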

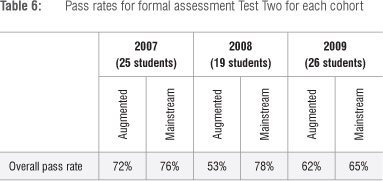

The results of the final formal assessment for the augmented and mainstream modules are shown in Table 6. These tests focused heavily on the comprehension and application levels of Bloom's taxonomy, with the knowledge level almost entirely subsumed within the latter two levels. The results were therefore not broken down into three levels as before. A clear improvement was noted in all three cohorts, and the glaring disparity in pass rates among cohorts seen in formal Test One was not evident in this comparison. In the 2008 and 2009 cohorts, the Augmented Physics students performed better in the mainstream test than in their augmented module assessments. In all cases their performance in the mainstream test was well above their performance in the first formal assessment.

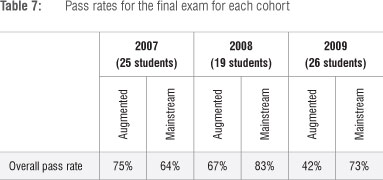

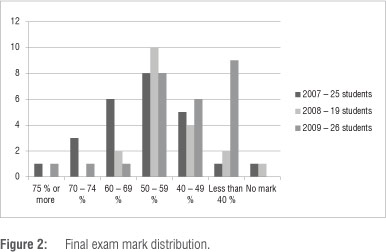

As a final means of comparison, and to appraise critically the value of the interventions outlined above, student performance in the final exam is given in Table 7. The students wrote a single theory exam, approximately 25% of which was set by the Augmented Physics lecturer in all three years under study. The mainstream marks in Table 7 are the results of the students registered in the mainstream programme and are provided to compare the Augmented Physics students' performance against mainstream norms. Figure 2 gives the mark breakdown, showing the performance of the students at the various percentiles. These results suggest that the Augmented Physics students in the 2007 and 2008 cohorts performed on par with their mainstream counterparts, whereas the 2009 cohort performed relatively poorly in comparison with the mainstream students. Figure 2 also shows that not only did the Augmented Physics students pass, but they passed well: some achieved first-class passes (75% or higher) and others obtained second-class passes (60-74%). Students who obtained a mark between 40% and 49% were given the chance to write a second exam, for which they had to pay. In all three years, none of these students passed the second exam, even though it was equivalent in difficulty to the first exam in terms of both content and time allocation.
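The result categories used above can be expressed as a small Python classifier. The first-class (75% and above, since the second-class band ends at 74%), second-class (60-74%) and second-exam (40-49%) bands come from the text; treating 50-59% as an ordinary pass and anything below 40% as a fail are assumptions, and the function name `classify_mark` and the sample marks are hypothetical.

```python
from collections import Counter


def classify_mark(mark):
    """Map a final exam percentage to a result band (50-59% and <40% bands assumed)."""
    if not 0 <= mark <= 100:
        raise ValueError("mark must be a percentage between 0 and 100")
    if mark >= 75:
        return "first-class pass"
    if mark >= 60:
        return "second-class pass"
    if mark >= 50:
        return "pass"  # assumption: 50% pass mark
    if mark >= 40:
        return "eligible for second exam"
    return "fail"  # assumption: no second exam below 40%


if __name__ == "__main__":
    # Hypothetical marks, not data from the study.
    marks = [82, 67, 74, 55, 45, 38, 75, 61]
    print(Counter(classify_mark(m) for m in marks))
```

Fractional marks between 74% and 75%, if they occur, fall into the second-class band under these thresholds.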

Conclusion

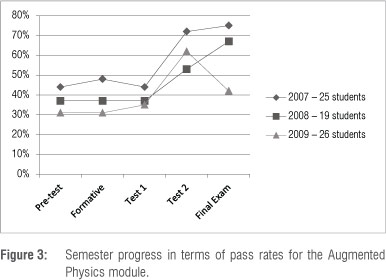

The improvement in the test results for all the cohorts (Figure 3 shows a clear upward trend), and in their subsequent exam performance, can be linked in part to the interventions put in place within the augmented module. The initial disparity between the results of the 'old' matric and the NSC cohorts, evident in the pre-test and formative Test One results, is much less marked in the subsequent formal Test Two results. Further, the exam performance of the 2007 and 2008 cohorts is in line with that of their mainstream counterparts. At first sight, the exam results of the 2009 cohort are poorer than those of the mainstream students, which might suggest that the interventions were not enough to assist these students.

Further investigation into the performance of these students revealed that they had done relatively well in all sections taught by the researcher, achieving a pass rate of 63%. However, they performed poorly in sections taught by a replacement Augmented Physics lecturer while the researcher was away for a period during the latter half of the first semester in 2009. The replacement lecturer had been trained by the researcher to implement all of the interventions in the style and method of instruction suitable for the Augmented Physics students; however, this training may not have translated into the classroom, which may have influenced the final exam pass rate. The cohort may also have experienced 'transitional hiccups' associated with having a new lecturer very close to their final exam. Nevertheless, their performance in the tests can be used to appraise the effectiveness of the interventions. The improved performance of all the cohorts seems to indicate that the interventions were effective in helping the students to overcome their initial circumstances and lack of preparedness and to perform as expected at tertiary level. The effectiveness of interventions such as the use of a dictionary, the English language policy and PBL was assessed by interrogating the test pass rates together with qualitative data, such as interviews with the students. These interventions appear to be effective tools for use during lectures, tutorials and practical sessions, and to have a strong influence on the problem-solving ability of the students, as evidenced by their higher results in the subsequent tests at the higher cognitive levels of Bloom's taxonomy.

Although a difference in pass rates between the NSC and 'old' matric cohorts was evident at the outset of the study, this work has not clearly demonstrated such a difference, as the study is too narrow to judge whether the schooling system had any impact on the students' problem-solving ability. A further study is needed to make this distinction. These results do, however, show that, regardless of the initial shortcomings of their schooling, a comprehensive programme such as the Augmented Physics programme at UKZN does enable some students to achieve success and to move beyond their previous disadvantages.

The data suggest that, to perform successfully in their academic endeavours, students need more than just academic mentoring. For students to develop their cognitive skills fully, it is imperative that academic support be coupled with basic skills such as time management, language interpretation and life skills. Although not covered here, issues such as food insecurity, domestic violence, lack of accommodation and lack of funding also influence academic performance. However, many of these are addressed by the Life Skills module, and thus no direct interventions for them are implemented in the Augmented Physics module itself.

The sound model of the CSA's Augmented programme contributes to improving the skills development of students in the natural and applied sciences. The augmented programme and its approach to educational outcomes may well be the answer to addressing the critical shortage of qualified Black scientists in South Africa outlined by government. The methods described in this paper are not unique to physics or even to the natural sciences, and may be tailored to foster learning and to encourage students to take ownership of their learning.

This preliminary work will, in the near future, be extended to determine the effectiveness of the initial interventions for the students as they move into higher levels of study. Further work will also analyse possible models for interactive teaching that address the language barrier faced by many non-English mother-tongue students.

Author's note: In 2011, the Physics honours class comprised six students, four of whom were part of the 2007 cohort in the Augmented Physics module. All these students successfully passed their honours degrees and are now reading for MSc degrees at various institutions in South Africa. The four NATED (old) matric students from the 2008 cohort finished their degrees in the minimum specified time of 4 years in 2011 and two of these students have subsequently registered for honours degrees in Physics at UKZN while the other two have decided to pursue honours degrees in other fields.

Acknowledgement

I thank Mrs Sheelagh Halstead for the many hours of helpful discussions and guidance in preparing this manuscript.

References

1. Johnstone AH. Chemical education, facts, findings and consequences (Nyholm Lecture). Chem Soc Rev. 1980;9(3):365-380. http://dx.doi.org/10.1039/cs9800900365

2. Galili I, Lehavi Y. Definitions of physical concepts: A study of physics teachers' knowledge and views. Int J Sci Educ. 2006;28(5):521-541. http://dx.doi.org/10.1080/09500690500338847

3. Rollnick M, Rutherford M. The use of mother tongue and English in the learning and expression of science concepts: A classroom based study. Int J Sci Educ. 1996;18(1):91-104. http://dx.doi.org/10.1080/0950069960180108

4. Inglis M, Grayson DJ. An approach to the development of communication skills for science students: Some ideas from the Science Foundation Programme. In: Sharwood DW, editor. Proceedings of the 7th Conference of the South African Association for Academic Development; 1992 Dec 3-5; Port Elizabeth, South Africa. p. 192-200.

5. Logan PF. Language and physics. Physics Educ. 1981;6:74-77. http://dx.doi.org/10.1088/0031-9120/16/2/303

6. Logan PF. Physics in paradise. Phys Teach. 1976;14:81-85. http://dx.doi.org/10.1119/1.2339315

7. Mji A, Makgato M. Factors associated with high school learners' poor performance: A spotlight on mathematics and physical science. S Afr J Educ. 2006;26(2):253-266.

8. Uys M, Van der Walt J, Van den Berg R, Botha S. S Afr J Educ. 2007;27(1):69-82.

9. University of KwaZulu-Natal (UKZN). Mission and vision statement of the University of KwaZulu-Natal as well as information for prospective students. Durban: UKZN; 2013. Available from: http://www.ukzn.ac.za

10. Rollnick M. Identifying potential for equitable access to tertiary level science: Digging for gold. Dordrecht: Springer; 2010. http://dx.doi.org/10.1007/978-90-481-3224-9

11. University of KwaZulu-Natal (UKZN). Constitution of the Centre for Science Access (CSA), University of KwaZulu-Natal. Durban: UKZN; 2013. Available from: http://csa.ukzn.ac.za

12. Kioko J. Foundation provision in South African higher education: A social justice perspective. In: Hutchings C, Garraway J, editors. Proceedings of the Rhodes Seminar; 2009; Grahamstown, South Africa. Grahamstown: Rhodes University Press; 2010. p. 40-49.

13. Hutchings C, Garraway J. Beyond the university gates: Provision of extended curriculum programmes in South Africa. Grahamstown: Rhodes University Press; 2010.

14. Grayson DJ. A holistic approach to preparing disadvantaged students to succeed in tertiary science studies. Part I: Design of the Science Foundation Programme. Int J Sci Educ. 1996;18(8):993-1013. http://dx.doi.org/10.1080/0950069970190108

15. Grayson DJ. A holistic approach to preparing disadvantaged students to succeed in tertiary science studies. Part II: Outcomes of the Science Foundation Programme. Int J Sci Educ. 1997;19(1):107-123. http://dx.doi.org/10.1080/0950069970190108

16. Barnsley S. Thoughts on the psychological processes underlying difficulties commonly experienced by African science students at university. In: Sharwood DW, editor. Proceedings of the 7th Conference of the South African Association for Academic Development; 1992 Dec 3-5; Port Elizabeth, South Africa.

17. Leigh G. Developing multi-representational problem solving skills in large, mixed-ability physics classes [unpublished thesis]. Cape Town: UCT; 2004.

18. Leigh G, Buffler A. Benchmarking the numeracy and expectations of first year physics technikon students as part of a new research-based teaching intervention. In: Buffler A, Laugksch RC, editors. 12th annual meeting of the Southern African Association for Research in Mathematics, Science and Technology Education; 2004 Jan 13-17; Durban, South Africa. Durban: Taylor and Francis; 2004. p. 528-535.

19. Soong Y. Handheld educational applications: A review of the research. In: Ryu H, Parsons D, editors. Innovative mobile learning: Techniques and technologies information. London: Hershey Science Reference (an imprint of IGIGlobal); 2009. p. 302-323.

20. Walsh L, Howard R, Bowe B. An investigation of introductory Physics students' approaches to problem solving. Level 3. 2007;5:1.

21. Young HD, Freedman RA. University physics. 12th ed. New Jersey: Addison-Wesley; 2011.

22. Halliday D, Resnick R, Walker J. Fundamentals of physics. 9th ed. New Jersey: Wiley; 2011.

23. University of KwaZulu-Natal (UKZN). BSc-4 orientation handbook. Durban: UKZN; 2013. Available from: http://csa.ukzn.ac.za

24. Salomon G, Perkins DN. Rocky roads to transfer: Rethinking mechanisms of a neglected phenomenon. Educ Psyc. 2007;24(2):113-142. http://dx.doi.org/10.1207/s15326985ep2402_1

25. Bassok M, Holyoak KJ. Pragmatic knowledge and conceptual structure: Determinants of transfer between quantitative domains. In: Detterman DK, Sternberg RJ, editors. Transfer on trial: Intelligence, cognition and construction. New York: Ablex; 1993. p. 68-98.

26. National Curriculum Statement: Physical sciences. Pretoria: Department of Education; 2003.

27. Bloom BS. Taxonomy of educational objectives, handbook I: The cognitive domain. New York: David McKay; 1956.

28. Grussendorf SJ. Umalusi maintaining standards report. Pretoria: Umalusi; 2009.

29. Edwards N. An analysis of the alignment of the Grade 12 Physical Sciences examination and the core curriculum in South Africa. S Afr J Educ. 2010;30(4):571-590.

30. Davison DM, Miller KW, Metheny DL. What does integration of science and mathematics really mean? School Science and Mathematics. 1995;95(5):226-230. http://dx.doi.org/10.1111/j.1949-8594.1995.tb15771.x

31. Lincoln YS, Guba EG. Naturalistic inquiry. Newbury Park: Sage; 1985.

32. Hillel J. Physics education research - a comprehensive study [unpublished thesis]. Toronto: University of Toronto; 2005.

33. Maxwell JA. Qualitative research design: An interactive approach. Thousand Oaks: Sage; 2005.

34. Redish EF. A theoretical framework for physics education research: Modelling student thinking. In: Redish EF, Vicentini M, editors. Proceedings of the Enrico Fermi Summer School in Physics; 2004 Jan; Varenna, Italy. p. 1-63.

35. White R, Gunstone R, Elterman E, Macdonald I, McKittric B, Mills D, et al. Students' perceptions of teaching and learning in first-year university physics. Research in Science Education. 1995;25(4):465. http://dx.doi.org/10.1007/BF02357388

36. Anderson LW, Krathwohl DR. A taxonomy for learning, teaching and assessing: A revision of Bloom's taxonomy of educational objectives: Complete edition. New York: Longman; 2001.

37. Dave RH. Psychomotor levels. In: Armstrong RJ, editor. Developing and writing behavioural objectives. Tucson, Arizona: Educational Innovators Press; 1975.

38. Considine J, Botti M, Thomas S. Design, format, validity and reliability of multiple choice questions for use in nursing research and education. J Royal College. 2005;12(1):19-24.

39. Higgins E, Tatham L. Are your students cheating or guessing on tests? Consider implementing alternate multiple-test formats such as DOMC and NRET. Learning and Teaching in Action. 2003;2(1):1-12.

40. Sanderson PJ. Multiple-choice questions: A linguistic investigation of difficulty for first-language and second-language students [PhD thesis]. Pretoria: Unisa; 2010.

41. Bradbury J, Miller R. A failure by any other name: The phenomenon of under-preparedness. S Afr J Sci. 2011;107(3-4), Art. #294, 8 pages. http://dx.doi.org/10.4102/sajs.v107i3/4.294

42. Harrow A. A taxonomy of psychomotor domain: A guide for developing behavioral objectives. New York: David McKay; 1972.

43. Hmelo-Silver CE, Duncan RG, Chinn CA. Scaffolding and achievement in problem-based and inquiry learning: A response to Kirschner, Sweller, and Clark (2006). Educ Psyc. 2007;42(2):99. http://dx.doi.org/10.1080/00461520701263368

44. Narayanan S, Munirathnam AM. Application of Bloom's taxonomy of educational objectives as a problem solving tool in the teaching-learning process in an 'electrical engineering technology' course. Linguistics, Culture and Education. 2012;1(1):117-140.

45. Yadav A. Problem-based learning: Influence on students' learning in an electrical engineering course. J Eng Educ. 2011;100(2):253-280. http://dx.doi.org/10.1002/j.2168-9830.2011.tb00013.x

46. Serrat O. Learning in teams - Facilitator's guide. Asian Development Bank Report. 2009;1:81-83.

47. Fogler S, LeBlanc SE. Strategies for creative problem solving. New Jersey: Prentice-Hall; 1995.

48. Mokhaba MB. Outcomes based education in SA since 1994 [PhD thesis]. Pretoria: University of Pretoria; 2004.

49. Malan SPT. The 'new paradigm' of outcomes-based education in perspective. Tydskrif vir Gesinsekologie en Verbruikerswetenskappe. 2000;28:22-28.

50. Spady WG. Outcomes based education: Critical issues and answers. Arlington: American Association of School Administrators; 1994.

51. Jansen JD. Understanding social transition through the lens of curriculum policy: Namibia/South Africa. J Curriculum Stud. 1995;27:245-261. http://dx.doi.org/10.1080/0305764980280305

52. Jansen JD. Essential alterations? A critical analysis of the states syllabus revision process. Perspect Educ. 1997;17(2):1-11.

53. Jansen JD. Curriculum reform in South Africa: A critical analysis of outcomes-based education. Cambridge J Educ. 1998;28(3):321-331. http://dx.doi.org/10.1080/0305764980280305

54. Mahlangu D. Outcomes-based education to be scrapped. TimesLIVE online edition. 2010 July 04.

55. Clerk DPP, Rutherford M. Language as a confounding variable in the diagnosis of misconceptions. Int J Sci Educ. 1998;22(7):707-717.

56. Clerk DPP. Language as a confounding variable [MSc thesis]. Johannesburg: Wits University; 1998.

57. Bharuthram S, McKenna S. Students' navigation of the uncharted territories of academic writing. Afr Educ Rev. 2012;9(3):581-594. http://dx.doi.org/10.1080/18146627.2012.742651

58. Bharuthram S. Developing reading strategies in higher education through the use of integrated reading/writing activities: A study at a university of technology in South Africa [PhD thesis]. Durban: University of KwaZulu-Natal; 2007.

59. Bharuthram S. Making a case for the teaching of reading across the curriculum in higher education. S Afr J Educ. 2012;32(2):205-214.

60. Lasry N. Problem-based learning for college physics [homepage on the Internet]. c2013 [cited 2013 Mar 22]. Available from: http://rea.ccdmd.qc.ca/en/pbl/resultat.asp?action=aboutPBL&endroitRetour=9&he=600

61. Levinson SC, Wilkins DP. Grammars of space: Explorations in cognitive diversity. New York: Cambridge University Press; 2006. http://dx.doi.org/10.1017/CBO9780511486753

62. Boroditsky L. Does language shape thought? English and Mandarin speakers' conceptions of time. Cognitive Psychol. 2001;43(1):1-22. http://dx.doi.org/10.1006/cogp.2001.0748

63. Tversky B, Kugelmass B, Winter A. Crosscultural and developmental trends in graphic productions. Cognitive Psychol. 1991;23:515-557. http://dx.doi.org/10.1016/0010-0285(91)90005-9

64. So H-J, Kim B. Learning about problem-based learning: Student teachers integrating technology, pedagogy and content knowledge. Australas J Educ Technol. 2009;25(1):101-116.

65. Inglis M. Proceedings of the First Annual Meeting of the South African Association for Research in Mathematics and Science Education; 1993; Grahamstown, South Africa.

66. Ferrer V. The mother tongue in the classroom: Cross-linguistic comparisons, noticing and explicit knowledge. Teaching English Worldwide. 2002;10:1-7.

67. Khati AR. When and why of mother tongue use in English classrooms. NELTA. 2011;16(12):42-51.

68. Chetty N. Student responses to being taught physics in isiZulu. S Afr J Sci. 2013;109(9/10), Art. #2012-0016, 6 pages. http://dx.doi.org/10.1590/sajs.2013/20120016

69. Ngidi SA. The attitudes of learners, educators and parents towards English as a language of teaching and learning [MSc thesis]. KwaDlangezwa: University of Zululand; 2007.

70. Dempster E, Zuma S. Reasoning used by isiZulu-speaking children when answering science questions in English. J Educ. 2010;50:35-58.

71. Casasanto D, Boroditsky L, Phillips W, Greene J, Goswami S, Bocanegra-Thiel I. How deep are the effects of language on thought? In: Forbus K, Gentner D, Regier T, editors. Proceedings of the 26th Annual Conference of the Cognitive Science Society; 2004 Aug 5-7; Chicago, USA. Hillsdale, NJ: Lawrence Erlbaum and Associates; 2004. p. 575-580.

72. Armstrong E. A hybrid model of problem-based learning. In: Boud D, Feletti G, editors. The challenge of problem-based learning. London: Kogan; 1991. p. 137.

73. Atkinson RK, Renkl A, Merrill MM. Transitioning from studying examples to solving problems: Effects of self-explanation prompts and fading worked-out steps. J Educ Psyc. 2003;95:774-783. http://dx.doi.org/10.1037/0022-0663.95.4.774

74. Hurren H, Klegeris A. Impact of the problem based learning in a large classroom setting: Student perception and problem-solving skills. Adv Physiol Educ. 2011;35:408-415. http://dx.doi.org/10.1152/advan.00046.2011

75. Felder R. How about a quick one. Chem Eng Educ. 1992;26(1):18-19.

76. Davis MH. Outcome-based education. J Vet Med Educ. 2003;30(3):227-232. http://dx.doi.org/10.3138/jvme.30.3.258

77. Merrill MD. A pebble-in-the-pond model for instructional design. Performance Improvement. 2002;41(7):39-44. http://dx.doi.org/10.1002/pfi.4140410709

78. Sweller J. Instructional implications of David C. Geary's evolutionary educational psychology. Educ Psychol. 2008;43:214-216.

79. Sweller J. Cognitive load during problem solving: Effects on learning. Cognitive Sci. 1988;12(2):257-285. http://dx.doi.org/10.1080/00461520802392208

Correspondence:

Naven Chetty

School of Chemistry and Physics

University of KwaZulu-Natal

PO Box X01, Scottsville 3209, South Africa

Email: chettyn3@ukzn.ac.za

Received: 06 Dec. 2012

Revised: 22 Mar. 2013

Accepted: 09 Oct. 2013

© 2014. The Authors. Published under a Creative Commons Attribution Licence.