South African Journal of Education

On-line version ISSN 2076-3433; Print version ISSN 0256-0100

S. Afr. j. educ. vol.30 n.4 Pretoria Jan. 2010

ARTICLES

An analysis of the alignment of the grade 12 physical sciences examination and the core curriculum in South Africa

Nazeem Edwards*

ABSTRACT

I report on an analysis of the alignment between the South African Grade 12 Physical Sciences core curriculum content and the exemplar papers of 2008, and the final examination papers of 2008 and 2009. A two-dimensional table was used for both the curriculum and the examination in order to calculate the Porter alignment index, which indicates the degree of match between the two. Alignment indices of 0.8 and 0.6 for Physics and Chemistry, respectively, were computed and remained constant for Physics, but fluctuated initially for Chemistry before levelling off. Using the revised Bloom's taxonomy, discrepancies were found in terms of cognitive levels as well as content areas in both Physics and Chemistry. The cognitive level Remember is under-represented in the Chemistry and Physics examinations, whereas the cognitive levels Understand and Apply were over-represented in Chemistry. It is argued that the shift to higher cognitive levels is in line with the reported increase in cognitive complexity of the Physical Sciences curriculum. The significance of the study for Physical Science teachers is highlighted, and the potential for further research is also indicated.

Keywords: alignment; assessment; Chemistry; cognitive level; curriculum content; Physics; revised Bloom's taxonomy

Introduction

South African Grade 12 learners wrote their National Senior Certificate (NSC) examination based on the outcomes-based education (OBE) system for the first time in November 2008. This was also the first time that a nationally set public examination was written in all subjects. Only 62.5% of all the candidates obtained their NSC at the first attempt, while the results of the 2009 cohort of learners showed a decline to 60.6% (DoBE, 2010:41). The new curriculum is designed to embody the values, knowledge and skills envisaged in the constitution of the new democratic South Africa. It provides learners with the opportunity to perform at the maximum level of their potential and focuses on high levels of knowledge and skills, while promoting positive values and attitudes (DoE, 2008a:2).

The introduction of OBE in South Africa was intended to redress the legacy of apartheid by promoting the development of skills throughout the school-leaving population in order to prepare South Africa's workforce for participation in an increasingly competitive global economy (Le Grange, 2007: 79). The move towards standards-based assessment practices internationally has been incorporated into the OBE system in South Africa, with learning outcomes and assessment standards being specified for each school subject. The adoption of a radical change of policy on the curriculum was contested and underwent a review in 2000. The current curriculum for Grades R to 9 is a revised version that has been in place since 2002 (OECD, 2008:131). The new curriculum for Grades 10 to 12 was implemented in 2006 and culminated in the NSC examination in 2008.

A Learning Outcome is a statement of an intended result of learning and teaching. It describes knowledge, skills and values that learners should acquire by the end of the Further Education and Training band (Grades 10 to 12). Assessment Standards are criteria that collectively describe what a learner should know and be able to demonstrate at the end of a specific grade. Such standards embody the knowledge, skills and values required to achieve the Learning Outcomes. Assessment Standards within each Learning Outcome collectively show how conceptual progression occurs from grade to grade (DoE, 2003:7).

The Physical Sciences subject area has been divided into six knowledge areas consisting of physics and chemistry components - one of these is an integrated knowledge area spanning both components. Approximately forty-five percent (45%) of the Grade 12 learners who wrote the Physical Sciences NSC examination in 2008 did not achieve the required pass level (DoE, 2008a: 13). This figure increased to 63.2% in 2009 (DoBE, 2010:49). This presents a huge challenge to all science teachers in the country if we are to reach adequate levels of scientific literacy in South Africa. The performance of South Africa's Grade 8 learners in both the 1999 and 2003 Trends in International Mathematics and Science Study (TIMSS) was disappointing (OECD, 2008:54). The TIMSS study showed that these learners attained the lowest average test scores in both mathematics and science of all the participating countries. The poor performance of Grade 12 learners in science is perhaps unsurprising if viewed against the TIMSS study. These international comparison studies have played a major role in identifying critical factors impacting on student achievement and have contributed to some extent to the current standards-based science education reforms in the United States and many other countries (Liu, Zhang, Liang, Fulmer, Kim & Yuan, 2009:780).

In an era of accountability and the potential for schools to receive monetary rewards for good achievements in mathematics and science, exit examinations that are not aligned to the assessment standards of the curriculum could have serious consequences for those schools. The learners could be assigned grades that are not indicative of their true abilities, or instruction may be misguided when inferences are drawn about the extent to which learners have mastered the standards when the test has not adequately covered the content standards (Liu et al., 2009:780). Glatthorn (1999:27) has argued for the reconciliation between advocates and dissenters of alignment to see it as a tool that should be used wisely in a time of high-stakes testing by making it teacher-friendly and teacher-directed.

This study is important as South Africa is a developing country with disparities of educational access that learners throughout the educational system experience as a result of inequitable policies of the past. Mapping the alignment of the assessment standards of the curriculum with the assessment in the Grade 12 Physical Sciences exit examination could provide examiners with a tool with which to look at shortcomings of the examination question papers or the assessment standards. Herman and Webb (2007:3) argued that it is only when assessment is aligned with both standards and classroom instruction that assessment results can provide sound information about both how well learners are doing and how well schools and their teachers are doing in helping students to attain the standards. Olson (2003:2) underscores this point by stating that "when a test is used to measure the achievement of curriculum standards, it is essential to evaluate and document both the relevance of a test to the standards and the extent to which it represents those standards. Studies of alignment measure the match, or the quality of the relationship, between a state's standards and its tests. That match can be improved by changing the standards, the tests, or both."

Curriculum change in South Africa

Changes in the educational system in South Africa have been driven by constitutional imperatives and were characterised by policy changes influenced by international perspectives and global economic trends (OECD, 2008:75). Curriculum policy changes were followed by an implementation phase and currently revisions are taking place to address problems that have arisen. Curriculum 2005 (C2005) was launched in 1997 and was informed by principles of OBE as the foundation of the post-apartheid schools' curriculum (Chisholm, 2005:193). C2005 was revised and in 2002 the Revised National Curriculum Statement (RNCS) became policy to be implemented in 2004, and culminated in the phasing in of the National Curriculum Statement (NCS) in Grade 12 in 2008 (OECD, 2008:81). The DoE also published content frameworks in each subject as well as work schedules and subject assessment guidelines in response to Grade 10 to 12 teachers' concerns about the content to be taught (ibid., p.177). Rogan (2007:457) has argued that it is not enough to merely publish a new curriculum and assessment standards, particularly in a developing country. Detailed attention must be given in terms of how things will unfold in practice.

Green and Naidoo (2006:79) studied the content of the Physical Sciences NCS and found that:

• It reconceptualises valid science knowledge.

• It values the academic, utilitarian and social-reconstructionist purpose of science.

• It is based on a range of competences, from the metacognitive level through to the simple level.

• There is a shift to greater competence complexity, and thus to corresponding higher expectations for teachers and learners.

The introduction of OBE in South Africa purportedly brought about a move away from norm-referenced testing to criterion-referenced testing. Less emphasis on summative assessment practices (assessment of learning) to more formative assessment (assessment for learning) was envisaged. In reality the final examination in Grade 12 constitutes 75% of the pass requirement in most subjects and thus represents a summative assessment. Baker, Freeman and Clayton (1991) contended that the underlying assumptions regarding the assessments, such as norm-referenced tests and normally distributed achievement, can result in misalignment with standards that are targeted for all students (cited by Webb, 1999:1).

Alignment Studies

Alignment studies are important in the context of a changed curriculum as they may give an indication of the success of the reform efforts when the assessment results are published. Schools also use the performance results to reflect on areas that need improvement. If there is no alignment between what is taught as specified in the curriculum and what is tested, then schools may well teach to the test and ignore the desired assessment standards. On the other hand, if schools teach according to the desired assessment standards and the tests are not aligned, it may well give a false impression of the students' performance relative to those tests. The negative impact on remedial action is great because the real cause of the problem may not be addressed. La Marca (2001: 1) maintained that it is unlikely that perfect alignment between the test and content standards will occur and this is sufficient reason to have multiple accountability measures. In supporting alignment measures he concludes that "it would be a disservice to students and schools to judge achievement of academic expectations based on a poorly aligned system of assessment".

Alignment in the literature has been defined as the degree to which standards and assessments are in agreement and serve in conjunction with one another to guide the system towards students learning what they are expected to know and do (Webb, 1999:2). The emphasis is on the quality of the relationship between the two. A team of reviewers analysed the degree of alignment of assessments and standards in mathematics and science from four states in the United States using four criteria: categorical concurrence (or content consistency), depth-of-knowledge consistency (or cognitive demands), range-of-knowledge correspondence (or content coverage), and balance of representation (or distribution of test items). The alignment was found to vary across grade levels, content areas and states without any discernible pattern (Webb, 1999:vii). The study developed a valid and reliable process for analysing the alignment between standards and assessments.

Systematic studies of alignment based on Webb's procedures have been completed across many states in the United States (Bhola, Impara & Buckendahl, 2003; Olson, 2003; Porter, 2002; Porter, Smithson, Blank & Zeidner, 2007; Webb, 1997; 2007). Essentially it involves panels of subject experts analysing assessment items against a matrix comprising a subject area domain and levels of cognitive demand. Martone and Sireci (2009:1337) reviewed three methods for evaluating alignment - the Webb, Achieve, and Surveys of Enacted Curriculum (SEC) methods. They focused on the use of alignment methodologies to facilitate strong links between curriculum standards, instruction, and assessment. The authors conclude that all three methods start with the evaluation of the content and cognitive complexity of standards and assessments, with the SEC methodology also including an instructional component. The latter method is useful to understand both the content and cognitive emphases whereas the Webb and Achieve methods help to better understand the breadth and range of comparison between the standards and assessment (ibid., p.1356).

Porter, Smithson, Blank and Zeidner (2007:28) have shown that several researchers have modified Webb's procedures to measure alignment. They outline a quantitative index of alignment, which is a two-dimensional language for describing content. The one dimension is topics and the other cognitive demand. The two content dimensions are analysed by experts in the subject field. The cognitive demand was categorized into five levels, ranging from the lowest to the highest: memorize (A), perform procedures (B), communicate understanding (C), solve non-routine problems (D), and conjecture/ generalize/prove (E). An alignment index is produced by comparing the level of agreement in cell values of the two matrices, both of which were standardized by converting all cell values into proportions of the grand total (Liang & Yuan, 2008:1825). Porter (2002:10) also contended that content analysis tools provide information that may be useful in developing more powerful programmes for teacher professional development. The proposed continuing professional development of in-service teachers in South Africa could potentially benefit from an analysis of alignment of the curriculum and examinations. Areas of over- or under-representation either way could be addressed.

Purpose of the study

Following the recommendations of Liang and Yuan (2008:1824) that international studies be done of different countries' curriculum standards and assessment systems, in this paper I analyse the extent to which the Grade 12 Physical Sciences exemplars of 2008 (DoE, 2008c) and the examination papers of 2008 (DoE, 2008d) and 2009 (DoE, 2009) are aligned with the core knowledge and concepts embodied in the NCS for Physical Sciences (DoE, 2003). The DoE published exemplar papers in 2008 to give an idea of what standard and format learners can expect in the final examination. This initiative followed widespread concern that the Grade 12 learners would not be adequately prepared for the final examination in 2008.

In this study the following research questions and sub-questions are investigated:

(a) What is the overall alignment between the Physical Sciences core curriculum and the Grade 12 national Physics and Chemistry examination?

i. How does the alignment between the Chemistry and Physics examination differ?

ii. How does the alignment of the exemplars of 2008 differ from the final examination of 2008 and 2009?

(b) How do the Chemistry and Physics examinations differ from the core curriculum in terms of cognitive levels?

(c) How do the Chemistry and Physics examinations differ from the core curriculum in terms of content areas?

Method

This study employs document analysis as a research method as it involves a systematic and critical examination, rather than a mere description, of an instructional document such as the National Curriculum Statement (IAR, 2010:1). Document analysis is an analytical method that is used in qualitative research to gain an understanding of the trends and patterns that emerge from the data. Creswell and Plano Clark (2007:114) have argued that the researcher must determine the source of data that will best answer the research question or hypotheses by considering what types of data are possible. Merriam (1988) also pointed out that "documents of all types can help the researcher uncover meaning, develop understanding, and discover insights relevant to the research problem" (cited by Bowen, 2009:29). The justification for using document analysis also stems from its efficiency in terms of time, accessibility within the public domain and cost effectiveness (ibid., p.31).

A slightly modified version of Porter's method is used to analyse the core knowledge and concepts of the curriculum by employing a revised Bloom's taxonomy (RBT) which includes the cognitive level categories Remember, Understand, Apply, Analyse, Evaluate and Create (Liang & Yuan, 2008:1826). To facilitate the process, keywords were used to classify items and learning outcomes into the different cognitive levels. This was done for the curriculum as well as all the question papers in Chemistry and Physics.
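The keyword-based classification described above can be illustrated with a minimal Python sketch. The keyword lists and the function name `classify` are hypothetical and serve only as an illustration; the article does not publish the keywords the coders actually used, and the coding in the study was done by human coders, not by software.

```python
from typing import Optional

# Hypothetical keyword lists, for illustration only. The article does not
# list the keywords that the coders used for each cognitive level.
RBT_KEYWORDS = {
    "Remember": ["state", "define", "list", "name", "recall"],
    "Understand": ["explain", "describe", "interpret", "summarise"],
    "Apply": ["calculate", "apply", "determine", "solve"],
    "Analyse": ["analyse", "compare", "distinguish", "deduce"],
    "Evaluate": ["evaluate", "justify", "critique", "assess"],
    "Create": ["design", "formulate", "construct", "plan"],
}


def classify(statement: str) -> Optional[str]:
    """Return the RBT level of the earliest keyword found in the statement.

    Returns None when no keyword matches, in which case a human coder
    would have to decide.
    """
    words = statement.lower().replace(",", " ").replace(".", " ").split()
    for word in words:
        for level, keywords in RBT_KEYWORDS.items():
            if word in keywords:
                return level
    return None
```

With these assumed lists, a statement such as "Calculate the momentum of the trolley" would be placed in Apply, while "Explain the Doppler effect" would be placed in Understand.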

Rationale for using the revised Bloom's taxonomy

The use of the RBT, which is a two-dimensional table, was a move away from the restrictive hierarchical original taxonomy. The notion of a cumulative hierarchy has been removed so that a student may use a higher-order cognitive skill without a lower-order one (Anderson, 2005:106). For example, a student may be applying a law (say Newton's first law) without necessarily understanding the law. The cognitive complexity at a lower level may be greater than at a higher level. These points are emphasised by Krathwohl (2002:215) when he states:

However, because the revision gives much greater weight to teacher usage, the requirement of a strict hierarchy has been relaxed to allow the categories to overlap one another. This is most clearly illustrated in the case of the category Understand. Because its scope has been considerably broadened over Comprehend in the original framework, some cognitive processes associated with Understand (e.g. Explaining) are more cognitively complex than at least one of the cognitive processes associated with Apply (e.g. Executing).

Mayer (2002:226) posited the idea that the revised taxonomy is aimed at broadening the range of cognitive processes so that meaningful learning can occur. This can be achieved by not only promoting retention of material but transfer as well which entails the ability to use what was learned to solve new problems. This is particularly relevant in Physics where the learner must solve problems that they have not encountered before by applying their prior knowledge. Näsström (2009:40) has also shown that the revised taxonomy is useful as a categorisation tool of the standards for the following reasons:

1. It is designed for analysing and developing standards, teaching and assessment as well as for emphasising alignment among these main components of an educational system.

2. It has general stated content categories which allow comparisons of standards from different subjects.

3. In a study where standards in chemistry were categorised with two different types of models, Bloom's revised taxonomy was found to interpret the standards more unambiguously than a model with topics-based categories.

This particular alignment study focuses on the range of competences per content area within Physical Sciences using the RBT. The two-dimensional structure of the RBT allows for teachers to increase the cognitive complexity of their teaching which may lead to meaningful learning (Amer, 2006:224-225).

Data collection procedures

Analysis of core knowledge and concepts in Physical Sciences

In South Africa the NCS for Physical Sciences (Grades 10-12) has been divided into six core knowledge areas:

• two with a chemistry focus - Systems; Change;

• three with a physics focus - Mechanics; Waves, Sound and Light; Electricity and Magnetism; and

• one with an integrated focus - Matter and Materials. (DoE, 2003:11)

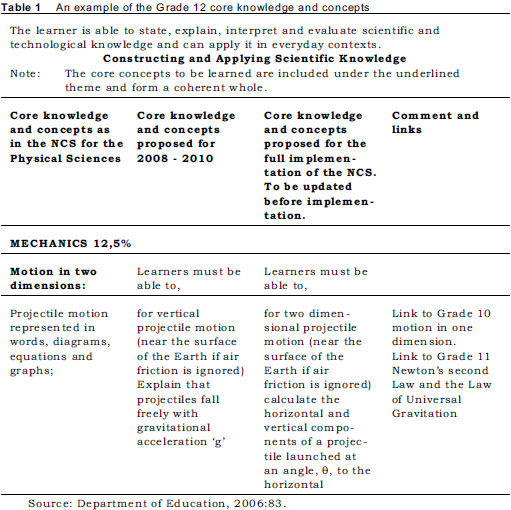

A Physical Sciences content document was published in 2006 to give depth to the NCS (DoE, 2006). Table 1 is an extract from this document which shows that column one corresponds to the NCS and column two gives the depth to the concepts.

The Physical Sciences Grade 12 examination guidelines give specific details regarding the themes to be tested in each of the knowledge areas (DoE, 2008b:14). It outlines the format of the question papers in Chemistry and Physics, knowledge that is required from content in Grades 10 and 11 and the core content that will be assessed in the final examination. The latter corresponds to the first two columns which show the core knowledge and concepts in Table 1. There are 32 themes in Physics including force, momentum, projectile motion, work, energy, power, Doppler Effect, electrostatics, electric circuits, optical phenomena, etc. Chemistry has 20 themes including organic chemistry, electrochemistry, rates of reactions, chemical industries, etc.

The themes for Physics and Chemistry resulted in a 32 × 6 table and a 20 × 6 table, respectively, for the core knowledge and the 6 cognitive levels within the revised Bloom's taxonomy. The themes were then collapsed into the knowledge areas for ease of comparison with the examination later. The examination does not necessarily include all the themes, but all the knowledge areas are covered. Liu et al. (2009:782) motivated similarly in their study that "in order for standardised tests to properly guide instruction, it was necessary to focus attention on broader topics or big ideas instead of isolated and specific topics". Two coders with a combined experience of more than 30 years in teaching Physical Sciences independently classified the curriculum content using the tables to achieve an inter-coder reliability of 0.97 and 0.98 for Physics and Chemistry, respectively. These relatively high coefficients can be ascribed to the use of the keywords to classify the curriculum content. The assessment statements ("the learner must be able to ...") were unambiguous and straightforward to classify. The differences were resolved through discussion.
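Collapsing the theme-level table into knowledge areas amounts to summing the rows that belong to the same knowledge area. The following Python sketch shows one way this could be done; the function name `collapse_themes` and the theme-to-area mapping passed to it are assumptions made for illustration, and the article does not describe the procedure at this level of detail.

```python
import numpy as np


def collapse_themes(theme_table, theme_to_area, areas):
    """Sum theme rows (themes x levels) into knowledge-area rows.

    theme_table   : counts per theme (rows) and cognitive level (columns)
    theme_to_area : knowledge area of each theme, in row order (assumed input)
    areas         : ordered list of knowledge areas for the collapsed table
    """
    theme_table = np.asarray(theme_table, dtype=float)
    if theme_table.shape[0] != len(theme_to_area):
        raise ValueError("one knowledge area is needed per theme row")
    collapsed = np.zeros((len(areas), theme_table.shape[1]))
    for row, area in zip(theme_table, theme_to_area):
        collapsed[areas.index(area)] += row
    return collapsed
```

For the Physics curriculum this would reduce the 32 × 6 theme table to one row per knowledge area with the same six cognitive-level columns, leaving the grand total unchanged.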

Analysis of the Chemistry and Physics exemplar and examination

In 2008 the DoE published exemplars and preparatory examination papers for many subjects in anticipation of the final examination in November. In this article the Chemistry and Physics examination papers of the exemplar of 2008 and the final examinations of 2008 and 2009 have been analysed. Each examination paper is worth 150 marks and together they contribute 75% of the pass mark in the subject. A variety of questions including multiple-choice questions, one-word answers, matching items, true-false items and problem-solving questions were included. The same two coders analysed the examinations along with the marking memoranda using a matrix identical to the one used for the core curriculum. An average inter-coder reliability of 0.88 and 0.92 for the Physics and Chemistry examination respectively was obtained. Differences were again resolved through discussion.
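The article does not state which statistic was used for inter-coder reliability, although the discussion later refers to inter-rater correlations. On the assumption that a Pearson correlation between the two coders' tables was intended, a minimal Python sketch would be the following (the function name is hypothetical):

```python
import numpy as np


def inter_coder_correlation(table_a, table_b):
    """Pearson correlation between two coders' tables, compared cell by cell.

    Assumption: reliability is taken as the correlation of the cell counts;
    the article does not confirm the exact statistic.
    """
    a = np.asarray(table_a, dtype=float).ravel()
    b = np.asarray(table_b, dtype=float).ravel()
    if a.shape != b.shape:
        raise ValueError("both coders must use tables of the same shape")
    return float(np.corrcoef(a, b)[0, 1])
```

Identical tables give a coefficient of 1, and the coefficient falls as the coders place items in different cells.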

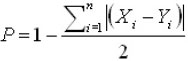

Calculating the Porter alignment index

The cell values in the curriculum table and examination table are standardised to make them comparable, that is, converted into ratios totalling to 1 (Liu et al., 2009:781). The Porter alignment index is defined as:

$$P = 1 - \frac{\sum_{i=1}^{n} |X_i - Y_i|}{2}$$

where $n$ is the total number of cells in the table and $i$ refers to a specific table cell, ranging from 1 to $n$. For example, for a 3 × 4 table, there are 12 cells, thus $n = 12$. $X_i$ refers to the ith cell of Table X (e.g. the standardized test table) and $Y_i$ refers to the corresponding cell (ith cell) in Table Y (e.g. the content standard table). Both $X_i$ and $Y_i$ are ratios with a value from 0 to 1. The sum of $X_1$ to $X_n$ is equal to 1, as is the sum of $Y_1$ to $Y_n$. The discrepancy between the ith cells of the test table and the standard table can be calculated as

$$|X_i - Y_i|$$

The total absolute discrepancy is then calculated by summing the absolute discrepancies over all cells (Liu et al., 2009:781-782).

The values of the Porter alignment index range from 0 to 1, indicating no alignment and perfect alignment, respectively (Liang & Yuan, 2008: 1829). The alignment indices as well as the discrepancies between corresponding cells, by cognitive levels and knowledge area, were all computed using Microsoft Excel. Liu and Fulmer (2008:375) have also argued that Porter's alignment model has two advantages over other models: (a) it adopts a common language to describe curriculum, instruction and assessment; and (b) it produces a single number as the alignment index.
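Although the study used Microsoft Excel, the same calculation can be sketched in a few lines of Python. The function names below are illustrative; the sketch standardises each table so that its cells sum to 1 and then computes one minus half the total absolute discrepancy.

```python
import numpy as np


def standardise(table):
    """Convert raw cell counts into proportions of the grand total."""
    table = np.asarray(table, dtype=float)
    total = table.sum()
    if total <= 0:
        raise ValueError("table must contain at least one coded item")
    return table / total


def porter_index(exam_table, curriculum_table):
    """Porter alignment index: 1 minus half the summed absolute discrepancies."""
    x = standardise(exam_table)
    y = standardise(curriculum_table)
    if x.shape != y.shape:
        raise ValueError("both tables must have the same shape")
    return float(1.0 - np.abs(x - y).sum() / 2.0)
```

Two identical tables give an index of 1, and two tables with no overlapping non-zero cells give an index of 0. As a small worked case, an examination table `[[2, 2], [0, 0]]` compared with a curriculum table `[[1, 1], [1, 1]]` has a total absolute discrepancy of 1 and therefore an index of 0.5.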

Results

A. Core knowledge and concepts in Physical Sciences

Table 2 presents the core knowledge and concepts of the Grade 12 Physics curriculum according to knowledge themes and cognitive levels within the revised Bloom's taxonomy. Within the cognitive level categories Remember constitutes the largest proportion (38.9%), followed by Understand (29.9%) and Apply (26.4%). The last three categories have by far the lowest percentages as is evident from the table. The knowledge area Electricity and Magnetism contributes 40.3% of the total cognitive categories while Mechanics makes up 27.1%. The knowledge theme that spans both Physics and Chemistry (i.e. Matter & Materials) contributes 13.2% of the total.

Table 3 presents the core knowledge and concepts of the Grade 12 Chemistry curriculum according to knowledge themes and cognitive levels. The cognitive level Remember again has the greatest emphasis (48.8%) compared with Understand (29.9%). Apply contributes 26.4% with the other three cognitive levels making insignificant contributions.

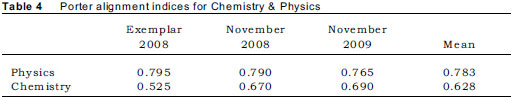

B. Overall Porter alignment index

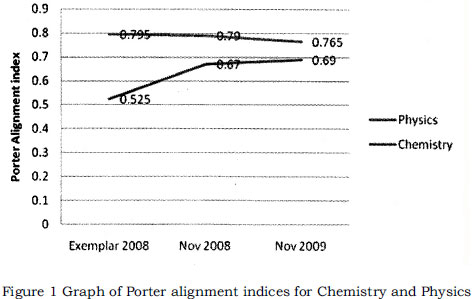

The overall Porter alignment indices are shown in Table 4. The alignments between the Physics curriculum and the exemplar as well as the 2008 and 2009 final examinations are statistically significant, with a mean of 0.783. The mean alignment index for Chemistry is lower (0.628) and not significant at the 0.05 level. Liu et al. (2009:781) have shown that an alignment of 0.78 is significant at the 0.05 level.

Figure 1 shows the difference in alignment between the Chemistry and Physics paper and the change from the exemplar to the two final examinations. Physics had consistently higher alignments than Chemistry and remained constant from the exemplar to the final examination in November 2009. The increase in alignment for Chemistry from the exemplar to the final examination in 2008 is steep. There appears to be a levelling off from 2008 to 2009.

C. Cognitive level differences

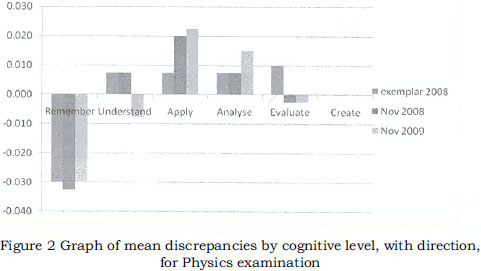

Table 5 presents the mean discrepancies by cognitive level for the Physics exemplar and November examinations. These represent the differences between the ratios in the curriculum table and the examination table. Negative numbers indicate that the curriculum is under-represented. The discrepancies ranged from +0.8% (Understand, Apply, Analyse) to -3% (Remember) for the Exemplar; from +0.8% (Understand & Analyse) to -3.3% (Remember) for the November 2008 examination; and ranged from -0.3% (Evaluate) to -3% (Remember) for the November 2009 examination. The largest discrepancy is in the Remember category for all three examinations. There appears to be no overall discrepancy in terms of cognitive complexity amongst the three examinations.
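A signed discrepancy per cognitive level or per knowledge area can be sketched as follows. The sign convention (examination proportion minus curriculum proportion, so that a negative value means the examination gives a category less weight than the curriculum) is an assumption chosen to match the reading of Remember as under-represented. The function name and table layout (knowledge areas as rows, cognitive levels as columns) are also assumptions.

```python
import numpy as np


def signed_discrepancies(exam_table, curriculum_table):
    """Return signed discrepancies summed by cognitive level and by area.

    Rows are knowledge areas and columns are cognitive levels (assumed
    layout). Each table is first converted to proportions of its total.
    """
    exam = np.asarray(exam_table, dtype=float)
    curr = np.asarray(curriculum_table, dtype=float)
    if exam.shape != curr.shape:
        raise ValueError("both tables must have the same shape")
    diff = exam / exam.sum() - curr / curr.sum()
    by_level = diff.sum(axis=0)  # one value per cognitive level (column)
    by_area = diff.sum(axis=1)   # one value per knowledge area (row)
    return by_level, by_area
```

Because both tables sum to 1, the signed discrepancies across all levels (or all areas) always sum to zero; dividing each summed value by the number of cells it covers would give a mean discrepancy per cell.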

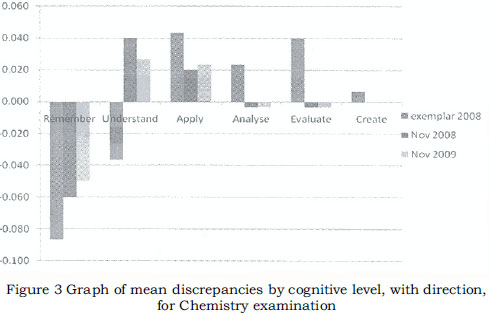

Table 6 presents the cognitive-level differences in Chemistry. Remember as a cognitive level is again under-represented (-6.6%), while the Apply level is over-emphasised (+2.9%). The cognitive level Evaluate is also over-emphasised (+1.1%).

The discrepancies within the Exemplar range from +0.7% (Create) to -8.7% (Remember); within the November 2008 examination they range from -0.3% (Analyse & Evaluate) to -6% (Remember); and they range from -0.3% (Analyse & Evaluate) to -5% (Remember) for the November 2009 examination. The exemplar and two final examinations across all levels only marginally under-represent the curriculum in terms of cognitive demand.

Figure 2 graphically illustrates how the curriculum in Physics is under-represented in the Remember category, but over-represented in almost all the other categories.

The same scenario obtains when looking at Figure 3 which presents a graph of the discrepancies in Chemistry. The Remember cognitive level is again under-emphasised, while in most of the other categories there is an over-emphasis.

D. Discrepancies by content areas

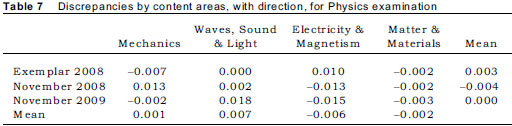

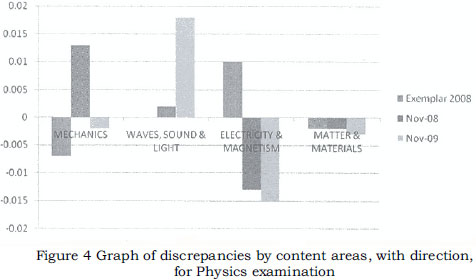

Table 7 shows the discrepancies by content areas for the Physics examinations, while Figure 4 illustrates this graphically. In Mechanics the biggest discrepancy was +1.3% for the November 2008 examination while in the November 2009 examination it was marginally under-represented. The knowledge area Waves, Sound and Light had a discrepancy of +1.8% in the November 2009 examination. Electricity and Magnetism was slightly under-represented in both the final examinations. Marginal under-representation also occurred in the Matter and Materials knowledge area in all three Physics papers.

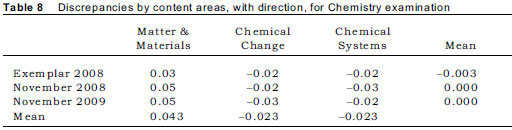

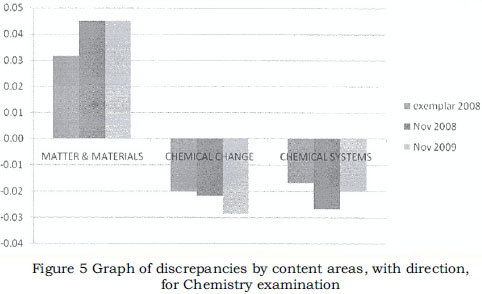

Table 8 presents the discrepancies by content areas for Chemistry. In the knowledge area Matter and Materials there is an over-emphasis (mean = +4.3%) in all the examination papers. Chemical Change and Chemical Systems are both under-represented (mean = -2.3%) in all the Chemistry examination papers. Figure 5 graphically shows this under-representation for Chemical Change and Chemical Systems.

Discussion and implications

The classification of the core curriculum content in this paper has been relatively straightforward in that verbs are used to describe what a learner must be able to do. By using a list of keywords in each cognitive level, it is a matter of classifying according to the verb. Hence, the relatively high inter-rater correlations achieved by the coders can be understood, and any differences are minor and easily resolved through discussion. Classifying the exemplar and examination items for Physics and Chemistry is a more tedious process. Having experienced teachers also helped to achieve good inter-rater correlations. Differences of opinion do arise and so the resolution through discussion takes much longer.

The Porter alignment indices for Physics and Chemistry have been shown to be about 0.8 and 0.6, respectively. This is similar to what Liu and Fulmer (2008:379) found in their study. There is little variation in Physics and an initial fluctuation in the index for Chemistry which then becomes constant between the final examinations. The Physics alignment index is also consistently higher than the Chemistry alignment index. The degree of alignment depends on the table matrix, because it would be more difficult to find exact matching in the corresponding cells if the table is larger, but having more specific categories helps. The tables that are used for Physics and Chemistry have specific themes under each knowledge area, which also helped with the coding of items. An insignificant alignment index is not necessarily a bad thing if this is due to the examination including more cognitively demanding items. There has been a differentiated impact of testing on teaching due to the relative emphases of the topics and cognitive demands (Liu et al., 2009:792). Teachers may use the items to adopt more student-centred pedagogies or they will use it to drive their lessons in a teacher-centred way.

The adoption of a two-dimensional table to calculate the Porter alignment index is in line with the revised Bloom's taxonomy. This allows for a non-hierarchical approach so that cognitive complexity can occur across levels. Science concepts may be mastered at different cognitive levels, but recall (Remember) is essential for problem-solving since it taps into long-term memory. The higher-order cognitive levels promote transfer of knowledge as opposed to formulaic methods where learners are adept at substituting numbers into formulae. This study has found that the Remember category was under-emphasised in the exemplar and final examinations for both Physics and Chemistry. In the Physics final examinations of 2008 and 2009, Apply as a cognitive level is over-represented. This could simply be an indication that there is a shift away from simple recall to more demanding skills in Physics. The final examinations of 2008 and 2009 in Chemistry emphasised the cognitive levels Understand and Apply. Krathwohl (2002:215) highlighted that some cognitive processes associated with Understand are more complex than those associated with Apply. Green and Naidoo (2006:79) also showed the shift in cognitive complexity of the Physical Sciences curriculum overall. There is clearly a need for Physical Science teachers to improve the skills of learners to handle more complex items in the examination if the Grade 12 final examination results are anything to go by.

A look at the examiners' reports (WCED, 2008a:1-2; WCED, 2008b:1-2) for the final examination in Physics and Chemistry highlights the following:

• candidates are unable to explain phenomena by applying principles;

• new work in the curriculum is poorly answered;

• candidates struggle with concepts requiring higher-order reasoning to solve problems;

• candidates lack basic understanding (comprehension); and

• higher-order questions that also occurred in the old curriculum are well answered.

A lack of emphasis on higher-order cognitive skills could lead to teachers not preparing Grade 12 learners adequately for the examination. Where teachers appear to be familiar with the work from the old curriculum, the learners are doing well. However, it would seem that a lack of teacher development in the new material is hampering the progress of learners. The learners are also doing worse in the Chemistry paper overall, and a lower alignment index is one possible explanation for this. The exemplar and examinations in Physics maintain a balanced emphasis across the knowledge areas, whereas for Chemistry instances of over- and under-representation occur. Within the knowledge area Matter and Materials in Chemistry there is a 5% over-representation in the final examination. This knowledge area contains a lot of new material in Organic Chemistry that learners struggle to understand. Perhaps there is also a need to develop teachers in this area, as many of them do not have an adequate background in Organic Chemistry.
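Over- and under-representation of this kind can be expressed as the difference between the examination share and the curriculum share of each category. The following is a minimal sketch under that assumption; the function name and the mark allocations are hypothetical and do not reproduce the figures of this study.

```python
def representation_gaps(curriculum, examination):
    """Return examination share minus curriculum share per category.

    Inputs map category names to raw counts or marks. Results are in
    percentage points: positive means over-represented in the examination,
    negative means under-represented.
    """
    total_c = sum(curriculum.values())
    total_e = sum(examination.values())
    if total_c == 0 or total_e == 0:
        raise ValueError("both inputs must contain at least one non-zero entry")
    return {
        category: round(
            100 * (examination.get(category, 0) / total_e - count / total_c), 1
        )
        for category, count in curriculum.items()
    }


# Hypothetical allocations per cognitive level.
curriculum_marks = {"Remember": 30, "Understand": 30, "Apply": 30, "Analyse": 10}
examination_marks = {"Remember": 15, "Understand": 35, "Apply": 40, "Analyse": 10}
gaps = representation_gaps(curriculum_marks, examination_marks)
```

The same function applies to knowledge areas instead of cognitive levels if the dictionaries are keyed by content area.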

The 2009 Grade 12 Physical Sciences results that were released in January 2010 showed a drastic increase in the failure rate. One possible explanation for this is that no new exemplars for Physical Sciences were made available in 2009. This study has shown that on the whole the final examinations of 2008 and 2009, and the exemplars of 2008 were fairly consistent across cognitive levels and content areas. What needs to be researched is the issue of test familiarity, since other preparatory examinations were also released in 2008. Umalusi (2009:121) compared the NCS with the old curriculum and concluded that it is far more difficult in terms of breadth of content than is the old curriculum, but is midway between the Higher and Standard Grade levels of the old curriculum in terms of the levels of difficulty of the content topics. The Physical Sciences panel also estimated that up to 35% more classroom time is needed for the NCS content compared with the old curriculum.

There are huge gaps inherent in the South African education system as far as implementing the new curriculum is concerned. Teachers utilise the published policy documents to give direction to their teaching. The curriculum documents themselves should be clear and give a balanced weighting across cognitive levels. If this situation obtains, then teachers can have a reasonable expectation that the examination should be aligned to the curriculum content. Alignment studies would thus be important to elucidate the differences between the intended curriculum and the enacted curriculum. They could also be used as a tool for the professional development of teachers to analyse their internally set examinations. This study makes a first contribution towards establishing the quality of the relationship between the Physical Sciences curriculum and the examination in the South African context. It also has the potential to be utilised in other subjects such as Mathematics and Life Sciences. A further area of research, which has been beyond the scope of this study, would be to examine the cognitive complexity within each knowledge area in Physics and Chemistry.

Acknowledgements

I thank Lucille Phillips and Andrew Fair for their participation in this study, the teachers for their contribution at a workshop on the revised Bloom's taxonomy, Prof Lesley Le Grange for useful comments on an earlier draft, and the reviewers for constructive feedback.

References

Amer A 2006. Reflections on Bloom's revised taxonomy. Electronic Journal of Research in Educational Psychology, 4:213-230.

Anderson LW 2005. Objectives, Evaluation, and the improvement of education. Studies in Educational Evaluation, 31:102-113.

Bhola DS, Impara JC & Buckendahl CW 2003. Aligning tests with states' content standards: Methods and issues. Educational Measurement: Issues and Practice, 22:21-29.

Bowen GA 2009. Document analysis as a qualitative research method. Qualitative Research Journal, 9:27-40.

Chisholm L 2005. The making of South Africa's National Curriculum Statement. Journal of Curriculum Studies, 37:193-208.

Creswell J & Plano Clark VL 2007. Designing and conducting mixed methods research. Thousand Oaks: Sage.

Department of Education 2003. National Curriculum Statement Grades 10-12 (General) Policy: Physical Sciences. Pretoria: Government Printer.

Department of Education 2006. National Curriculum Statement Grades 10-12 (General): Physical Sciences Content. Pretoria: Government Printer.

Department of Education 2008a. Abridged Report: 2008 National Senior Certificate Examination Results. Pretoria: Department of Education.

Department of Education 2008b. Examination Guidelines: Physical Sciences Grade 12 2008. Pretoria: Department of Education.

Department of Education 2008c. Physical Sciences Exemplars 2008. Available at http://www.education.gov.za/Curriculum/grade12_examplers.asp. Accessed 24 October 2008.

Department of Education 2008d. Physical Sciences Examination 2008. Available at http://www.education.gov.za/Curriculum/NSC%20Nov%202008%20Examination%20Papers.asp. Accessed 8 July 2009.

Department of Education 2009. Physical Sciences Examination 2009. Available at http://www.education.gov.za/Curriculum/NSC%20Nov%202009%20Examination%20Papers.asp. Accessed 3 March 2010.

Department of Basic Education 2010. National Examinations and Assessment Report on the National Senior Certificate Examination Results Part 2 2009. Pretoria: Department of Education.

Glatthorn A 1999. Curriculum alignment revisited. Journal of Curriculum and Supervision, 15:26-34.

Green W & Naidoo D 2006. Knowledge contents reflected in post-apartheid South African Physical Science curriculum documents. African Journal of Research in SMT Education, 10:71-80.

Herman JL & Webb NM 2007. Alignment methodologies. Applied Measurement in Education, 20:1-5.

Instructional Assessment Resources (IAR) 2010. Document analysis. Available at http://www.utexas.edu/academic/diia/assessment/iar/teaching/plan/method/doc-analysis.php. Accessed 24 August 2010.

Krathwohl DR 2002. A revision of Bloom's taxonomy: An overview. Theory into Practice, 41:212-218.

La Marca PM 2001. Alignment of standards and assessments as an accountability criterion. Practical Assessment, Research & Evaluation, 7. Available from http://PAREonline.net/getvn.asp?v=7&n=21. Accessed 15 August 2010.

Le Grange L 2007. (Re)thinking outcomes-based education: From arborescent to rhizomatic conceptions of outcomes (based-education). Perspectives in Education, 25:79-85.

Liang L & Yuan H 2008. Examining the alignment of Chinese national Physics curriculum guidelines and 12th-grade exit examinations: A case study. International Journal of Science Education, 30:1823-1835.

Liu X & Fulmer G 2008. Alignment between the Science curriculum and assessment in selected NY State Regents exams. Journal of Science Education and Technology, 4:373-383.

Liu X, Zhang B, Liang L, Fulmer G, Kim B & Yuan H 2009. Alignment between the Physics content standard and the standardized test: A comparison among the United States-New York State, Singapore, and China-Jiangsu. Science Education, 93:777-797.

Martone A & Sireci SG 2009. Evaluating alignment between curriculum, assessment, and instruction. Review of Educational Research, 79:1332-1361.

Mayer RE 2002. Rote versus meaningful learning. Theory into Practice, 41:226-232.

Näsström G 2009. Interpretation of standards with Bloom's revised taxonomy: a comparison of teachers and assessment experts. International Journal of Research & Method in Education, 32:39-51.

Olson L 2003. Standards and tests: Keeping them aligned. Research Points: Essential Information for Education Policy, 1:1-4.

Organisation for Economic Co-Operation and Development 2008. Reviews of National Policies for Education: South Africa. Paris: OECD.

Porter AC 2002. Measuring the content of instruction: Uses in research and practice. Educational Researcher, 31:3-14.

Porter AC, Smithson J, Blank R & Zeidner T 2007. Alignment as a teacher variable. Applied Measurement in Education, 20:27-51.

Umalusi 2009. From NATED 550 to the new National Curriculum: maintaining standards in 2008. Part 2: Curriculum Evaluation. Pretoria: Umalusi.

Rogan JM 2007. How much curriculum change is appropriate? Defining a zone of feasible innovation. Science Education, 91:439-460.

Webb NL 1997. Criteria for alignment of expectations and assessments in mathematics and science education (Research monograph No. 6). Washington, DC: Council of Chief State School Officers.

Webb NL 1999. Alignment of science and mathematics standards and assessments in four states (Research monograph No. 18). Washington, DC: Council of Chief State School Officers.

Webb NL 2007. Issues related to judging the alignment of curriculum standards and assessments. Applied Measurement in Education, 20:7-25.

Western Cape Education Department 2008a. Physical Sciences -Paper 1. Available at http://wced.wcape.gov.za/documents/2008-exam-reports/reports/physical_science_p1.pdf. Accessed 26 February 2010.

Western Cape Education Department 2008b. Physical Sciences -Paper 2. Available at http://wced.wcape.gov.za/documents/2008-exam-reports/reports/physical_science_p2.pdf. Accessed 26 February 2010.

Author

Nazeem Edwards is a science education Lecturer in the Department of Curriculum Studies at Stellenbosch University and has 22 years' teaching experience. His research interests are in developing pre-service science teachers' conceptual understanding, science teachers' pedagogical content knowledge, argumentation in science education and alignment studies.